- Home

- PowerShell Community

- Windows PowerShell

- connect to mysql using reusable functions

connect to mysql using reusable functions

- Subscribe to RSS Feed

- Mark Discussion as New

- Mark Discussion as Read

- Pin this Discussion for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Jun 02 2022 12:18 AM - edited Jun 02 2022 12:34 AM

Hi,

I have a script built this way:

function runquery {

param1

connect to mysql

run a query with param1

disconnect

}

Main script:

foreach blabla{

$something = runquery $blabla1

...tasks...

}

I use the runquery function in a foreach and work with the retrieved values (main script).

The problem is that doing this I keep connecting and disconneting to the DB.

I'd like to change this behavior to:

function connecttomysql{}

function runquery{}

function disconnectfrommysql{}

Main script

connecttomysql

foreach blabla{

$something = runquery $blabla1

...task...

}

disconnectfrommysql

This way looks nicier to me (your thoughts?). Though I can't make it work so far.

I must specify that I DO want to run "myquery $pcname" for each pcname.

I don't want to do something like loading the complete "PC table" at once in memory and then work with it.

Said an other way, I don't want to replace:

"SELECT * FROM wakeonlan WHERE hostname = '" + $pc_name + "';"

with

"SELECT * FROM wakeonlan"';"

Thank you.

Here is the code.

$scriptfolder = $PSScriptRoot

function ConnectToMySql{

# http://woshub.com/run-mysql-queries-from-powershell/#:~:text=Run%20MySQL%20Queries%20from%20PowerShell%20One%20of%20the,tables%20to%20access%20data%20directly%20from%20PowerShell%20console.

$mysql = "myserver"

$DB = "mydb"

$user ="myuser"

$pass ="mypass"

# Load MySQL .NET Connector Objects

[void][system.reflection.Assembly]::LoadWithPartialName("MySql.Data")

# Open Connection

$Connection = [MySql.Data.MySqlClient.MySqlConnection]@{ConnectionString="server=$MySQL;uid=$user;pwd=$pass;database=$DB"}

$Connection.Open()

}

function RunQuery{

param(

[Parameter (Mandatory = $true)] [string] $pc_name

)

$MYSQLCommand = New-Object MySql.Data.MySqlClient.MySqlCommand

$MYSQLDataAdapter = New-Object MySql.Data.MySqlClient.MySqlDataAdapter

$MYSQLDataSet = New-Object System.Data.DataSet

$MYSQLCommand.Connection=$Connection

$MYSQLCommand.CommandText="SELECT * FROM wakeonlan WHERE hostname = '" + $pc_name + "';"

$MYSQLDataAdapter.SelectCommand=$MYSQLCommand

$NumberOfDataSets=$MYSQLDataAdapter.Fill($MYSQLDataSet, "data")

#$MYSQLDataSet.tables[0].id

$pcinf = [PSCustomObject] @{

hostname = $MYSQLDataSet.tables[0].hostname

macadd = $MYSQLDataSet.tables[0].macadd

dbrcast = $MYSQLDataSet.tables[0].dbrcast

mask = $MYSQLDataSet.tables[0].mask

}

return $pcinf

}

function DisconnectMysql{

$Connection.Close()

}

#loading computers

$RemoteOfficeComputers = import-csv -Path "$scriptfolder\teletravail-vdi_prod.csv" -Delimiter ";" -Header "Domain", "Computer", "User"

#loading existing IP route

$IPRouteInformation = import-csv -Path "$scriptfolder\VCS_WOL_IPRoute2.ini" -Delimiter ";"

#move INI file values from array to hash

$NewIPRouteInformation=$null

$NewIPRouteInformation=@{}

foreach($record in $IPRouteInformation)

{

$NewIPRouteInformation[$record.hostname]=[ordered]@{

TimeID = $record.TimeID

Hostname = $record.hostname

macadd = $record.macadd

dbrcast = $record.dbrcast

mask = $record.mask }

}

#connecting to the DB

ConnectToMySql

#Checking each PC from CSV file

foreach($PC in $RemoteOfficeComputers.computer){

$TimeID = date -Format yyyyMMddhhmmssfff

#Getting PC's information from RT's DB

#Running the query many times with the needed parameter

$pcinfo = runquery -pc_name $PC

write-host "Working on pcinfo.hostname = $($pcinfo.hostname)"

#Verify if we find the PC in the ini file

if ($pcinfo.hostname -eq $NewIPRouteInformation[$pcinfo.hostname].hostname){

write-host "Both hostnames match $($pcinfo.hostname) = $($NewIPRouteInformation[$pcinfo.hostname].hostname)"

#If the PC name is found, check if the Domain Broadcast has changed, if yes update and change TimeStamp

if($pcinfo.dbrcast -ne $NewIPRouteInformation[$pcinfo.hostname].dbrcast) {

$NewIPRouteInformation[$pcinfo.hostname].dbrcast = $pcinfo.dbrcast

$NewIPRouteInformation[$pcinfo.hostname].TimeID = $TimeID

}

write-host "Done for $($pcinfo.hostname)"

}else{ #If hostname was not found, create the record in ini File

write-host "NO hostnames match $($pcinfo.hostname) = $($NewIPRouteInformation[$pcinfo.hostname].hostname)"

$NewIPRouteInformation[$pcinfo.hostname]=[ordered]@{

TimeID = $TimeID

Hostname = $pcinfo.hostname

macadd = $pcinfo.macadd

dbrcast = $pcinfo.dbrcast

mask = $pcinfo.mask }

write-host "Done for $($pcinfo.hostname)"

#write-host "showing hash value :"$NewIPRouteInformation[$pcinfo.hostname]

}

} #end foreach PC

#use variable before ? Test execution time

#Removing records older than 365 days

$NewIPRouteInformation.GetEnumerator() | ? { $_.Value.TimeID -lt (date (get-date).AddDays(-365) -Format yyyyMMddhhmmssfff) } | %{$NewIPRouteInformation.Remove($_)}

#sort by timeID and then export to CSV

$NewIPRouteInformation.GetEnumerator() | Sort-Object $_.keys | ForEach-Object{[PSCustomObject]$_.value } | Sort-Object TimeID -Descending | Export-Csv "$scriptfolder\VCS_WOL_IPRoute2.ini" -NoTypeInformation -delimiter ";"

DisconnectMysql

- Labels:

-

Functions

-

MySQL

-

PowerShell

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Jun 02 2022 01:44 AM

Hi, John.

I'm going to generalise here, as while I'm familiar with the SQL Server and Oracle libraries, I'm unfamiliar with MySQL. Even so, the concepts I'll speak to are the same.

Issue #1: Scope

The $Connection variables used in the ConnectToMySql() and RunQuery() functions are not the same. This is because each version only means something inside the function it's defined in.

The second those functions exit, there is no such variable as $Connection. So, opening a connection in one function and assigning it to a local variable within that function does not result in a usable variable in a different function.

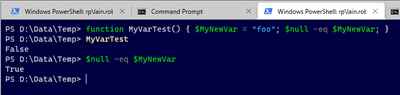

Here's a very basic visual example.

What this is showing you is that that the variable defined within the function ($MyNewVar) does indeed exist within that function. However, when I look to see if it exists after exiting that function, it does not (i.e. it is $null.)

The most prudent way to fix this is:

- Define your $Connection variable in the main script body;

- Refer to that variable from within your functions by utilising the "$global:" prefix.

Technically, once you define it as a global variable, you can get away with not needing to use the "$global:" prefix, however, it is better practice to explicitly include it, as doing so make the code easier to understand and avoids conflicts where you have defined a global and local variable that share the same name.

Here's a skeleton code example demonstrating the "$global:" prefix:

#region Function definitions.

function ConnectToMySql()

{

$mysql = "myserver";

$DB = "mydb";

$user = "myuser";

$pass = "mypass";

# Load MySQL .NET Connector Objects

[void][system.reflection.Assembly]::LoadWithPartialName("MySql.Data");

# Open Connection

$global:Connection = [MySql.Data.MySqlClient.MySqlConnection]@{ConnectionString="server=$MySQL;uid=$user;pwd=$pass;database=$DB"};

$global:Connection.Open();

}

function RunQuery()

{

# blah

# blah

$MYSQLCommand.Connection=$global:Connection;

# blah

}

#endregion

#region Main script body.

# Define glohbal variables.

$Connection;

#endregion

Issue #2: Closing and disposing

When you're working with .NET type libraries, you need to ensure you're following .NET procedures such as cleaning up after you've finished with an object. Currently, you're not doing that, meaning memory consumption will grow.

In smaller projects that may not be visible but when you start iterating through tens or hundreds of thousands, this can result in PowerShell easily growing into high hundreds if not gigabytes of memory used territory.

For your script, where this will matter the most is in the RunQuery() function, where you're creating brand new instances for three classes, yet not calling Dispose() or Close() (where applicable - not all classes have a Close().)

Once a variable falls out of scope, more often than not the garbage collector can reclaim that memory. However, it is best practice to explicitly call .Close() and .Dispose() where the class has those methods.

Here's some skeleton code specific to the RunQuery() function showing the .Dispose() calls:

function RunQuery()

{

$MYSQLCommand = New-Object MySql.Data.MySqlClient.MySqlCommand;

$MYSQLDataAdapter = New-Object MySql.Data.MySqlClient.MySqlDataAdapter;

$MYSQLDataSet = New-Object System.Data.DataSet;

# blah

# blah

$pcinf = [PSCustomObject] @{

# blah

# blah

}

$MYSQLDataAdapter.Dispose();

$MYSQLCommand.Dispose();

return $pcinf;

}

Optional optimisation:

In addition to the $Connection variable being defined globally (meaning it's only defined once), you should also consider defining a $Command variable globally and simply referencing it within the functions using the "$global:" prefix.

This way, you are not building up a new instance and testing it down again every single time you call RunQuery().

This isn't required - it's only a suggestion, but these smaller optimisations can collectively help your script scale upwards far more than if you choose not to.

Lastly, while MySql isn't my area - as I stated before, there's some good reference documentation on the MySqlClient here that demonstrates things like closing and disposing, etc.

MySql.Data.MySqlClient Namespace

Cheers,

Lain

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Jun 08 2022 06:19 AM

Although I'm not sure I understood your last point :) (defining a $Command variable globally)

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Jun 08 2022 06:50 AM

No problem!

I just meant defining $Command using the $global prefix, so it'd look like:

$global:Command

That would allow you to use it anywhere within the script, including any functions. It just means - like the $global:Connection example - that you only have to create object once rather than every time you call a function.

So, that'd be two global variables:

- $global:Connection

- $global:Command

That would allow you to create them once but use them many times, which makes your script that little bit faster/efficient (particularly over larger data sets.)

Cheers,

Lain

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Jun 08 2022 06:56 AM - edited Jun 08 2022 07:04 AM

Do you mean this section?

$MYSQLCommand = New-Object MySql.Data.MySqlClient.MySqlCommand

$MYSQLDataAdapter = New-Object MySql.Data.MySqlClient.MySqlDataAdapter

$MYSQLDataSet = New-Object System.Data.DataSet

I would take it out of the Runquery() function and I define it in the main screen then? (adding $global: in front of each lines)

Doing so these lines are read only once instead of every Runquery calls. I am right?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Jun 08 2022 04:34 PM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Jun 09 2022 01:00 AM - edited Jun 22 2022 05:50 AM

Thank you Lain for your help,

Please excuse this "off-topic" question that might pollute the thread :

In this script my source CSV has about 4000 records, so I will run my query 4000 times.

My script duration is 1 min, which is very ok to me.

But speaking about best pratices, would you recommand that I load the whole table content once in a variable and then search in that variable for my records? Instead of running my query everytime for each record.

I suppose it depends on the size of the table of course, but generaly speaking, what do you think is best?

thanks