HPC Cache Automation with Terraform

Allocating HPC infrastructure costs time and money, so whatever you can do to automate the process saves both. Nowhere are such savings more essential to corporate success than in the semiconductor industry where businesses face non-stop competitive pressure to accelerate production of more complex designs in ever-smaller geometries.

Silicon design teams using HPC infrastructure for verification and other EDA workloads are likely already using, or at least familiar with, HashiCorp’s Terraform automation tool. We recently shared information about using Terraform Infrastructure as Code (IaC) to automate cloud infrastructure deployments for rendering. In that article, we provided specific examples for deploying the Avere vFXT for Azure high-performance caching service.

Today we’d like to expand on that to show how you can use Terraform to deploy and manage Azure HPC Cache resources. With this new support for HPC Cache, Terraform makes it even easier to set up file caching for HPC workloads running in Azure.

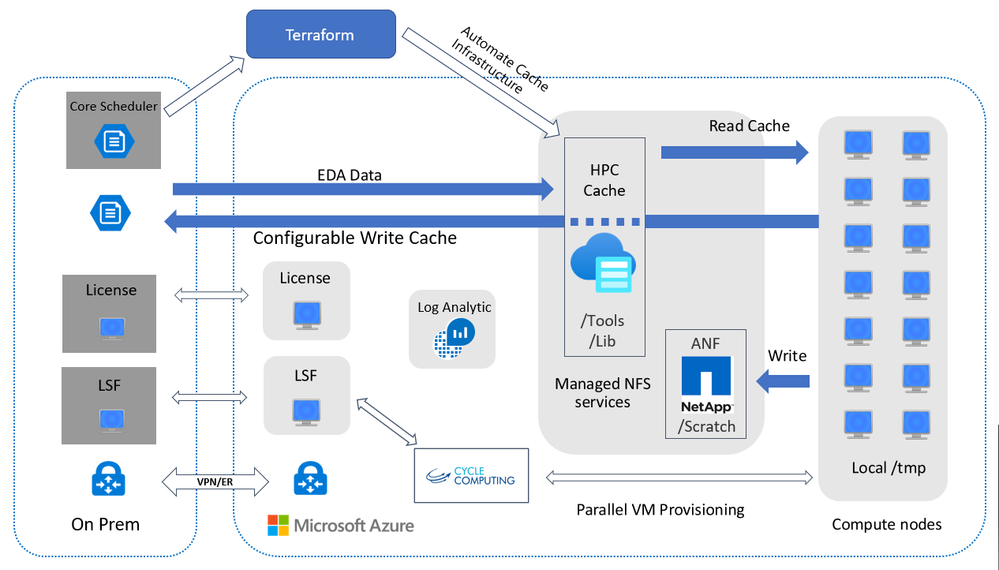

HPC Cache reduces latency between Azure and on-prem storage so you can burst EDA workloads to Azure without moving your datasets. Similar in functionality to Avere vFXT, the Azure HPC Cache service offers easier data access and simpler management via the Azure Portal and API tools. EDA workloads can benefit from the cache’s performant data access, while IT and design/test engineers will appreciate the time savings, increased agility, cost control, reduced manual errors, and decreased risk benefits of using Terraform’s single-touch, programmatic setup.

Figure 1. Using Terraform to deploy HPC Cache resources for EDA verification workloads

A Terraform configuration is essentially a template for creating Azure HPC Cache deployments for design-verification and other EDA workloads: with just a few commands, Terraform builds out the infrastructure. It also makes it easy to codify both setup and tear-down processes. The parameters of the resource are described on the Terraform HPC Cache documentation page.
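For orientation, here is a minimal sketch of what such a configuration can look like, written from a shell with a heredoc. It is not taken from the example repository. The resource names, region, address ranges, working directory, and the `~> 3.0` provider pin are illustrative assumptions; `cache_size_in_gb`, `subnet_id`, and `sku_name` are arguments of the `azurerm_hpc_cache` resource, but check the provider documentation for the values your provider version accepts.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Hypothetical working directory; any empty directory will do.
workdir="${WORKDIR:-hpc-cache-sketch}"
mkdir -p "$workdir"

# Write a minimal configuration. All names, the region, and the address
# ranges are placeholders chosen for illustration only.
cat > "$workdir/main.tf" <<'EOF'
terraform {
  required_providers {
    azurerm = {
      source  = "hashicorp/azurerm"
      version = "~> 3.0" # assumption: pinned for this sketch
    }
  }
}

provider "azurerm" {
  features {}
}

resource "azurerm_resource_group" "hpc" {
  name     = "hpc-cache-rg"
  location = "eastus"
}

resource "azurerm_virtual_network" "hpc" {
  name                = "hpc-cache-vnet"
  address_space       = ["10.0.0.0/16"]
  location            = azurerm_resource_group.hpc.location
  resource_group_name = azurerm_resource_group.hpc.name
}

resource "azurerm_subnet" "cache" {
  name                 = "cache-subnet"
  resource_group_name  = azurerm_resource_group.hpc.name
  virtual_network_name = azurerm_virtual_network.hpc.name
  address_prefixes     = ["10.0.1.0/24"]
}

resource "azurerm_hpc_cache" "cache" {
  name                = "examplehpccache"
  resource_group_name = azurerm_resource_group.hpc.name
  location            = azurerm_resource_group.hpc.location
  cache_size_in_gb    = 3072
  subnet_id           = azurerm_subnet.cache.id
  sku_name            = "Standard_2G"
}
EOF

echo "Wrote $workdir/main.tf"
```

The script only writes the file; nothing is deployed until you run Terraform against it.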

Note: While many enterprises use Azure Resource Manager (ARM) templates for IaC services, IT administrators who choose Terraform have typically already adopted it for their on-premises infrastructure provisioning. Since ARM templates do not work across platforms (for both on-prem and cloud infrastructure), Terraform is the better option for these users. By giving users a single tool to learn and use across multiple platforms, Terraform continues to rapidly expand its footprint among Azure enterprise customers.

Terraform Examples to Deploy Azure HPC Cache

To help you get started, we’ve created a set of examples that show how to deploy a number of Azure HPC Cache and NFS filer configurations. All examples can be deployed through Azure Cloud Shell, where Terraform is installed by default. The process follows these four basic steps:

- Start Azure Cloud Shell in a browser tab (https://shell.azure.com)

- Set your subscription

- Create your Terraform file by editing it directly or by pulling it from a git repository

- Run through the process of:

  - terraform init, which pulls in the providers (the Azure provider) and any modules

  - terraform apply, which applies the configuration and creates the resources
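The steps above can be sketched as a small shell helper. This is an illustration, not part of the published examples: the `run` and `deploy` function names and the `DRY_RUN` variable are hypothetical, and the `-chdir` option assumes Terraform 0.14 or later.

```shell
#!/usr/bin/env bash
set -euo pipefail

# Print the command instead of running it when DRY_RUN=1, so the sequence
# can be reviewed before anything is deployed.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    echo "+ $*"
  else
    "$@"
  fi
}

# deploy SUBSCRIPTION_ID TF_DIR
# Selects the subscription, then initializes and applies the Terraform
# configuration found in TF_DIR.
deploy() {
  local subscription_id="$1" tf_dir="$2"
  run az account set --subscription "$subscription_id"
  # -chdir requires Terraform 0.14 or later (assumption about your version).
  run terraform -chdir="$tf_dir" init
  run terraform -chdir="$tf_dir" apply -auto-approve
}
```

Calling `DRY_RUN=1 deploy YOUR_SUBSCRIPTION_ID ./tf` prints the three commands without executing them. To tear the deployment down again, run `terraform destroy` in the same directory.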

We currently offer seven HPC Cache examples (available at https://github.com/Azure/Avere/tree/master/src/terraform):

- no-filer example

- HPC Cache mounting Azure Blob Storage cloud core filer example

- HPC Cache mounting 1 IaaS NAS filer example

- HPC Cache mounting 3 IaaS NAS filers example

- HPC Cache mounting an Azure NetApp volume example

- HPC Cache and VDBench example

- HPC Cache and VMSS example

Vdbench Example

To give you an idea of what a configuration contains, let’s look at the vdbench example, which is used to measure HPC Cache performance. This basic setup generates small and medium-sized workloads to test the performance of the Azure HPC Cache memory and disk subsystems. The suggested configuration is 12 x Standard_D2s_v3 clients for each 2 GB/s of throughput capacity in an HPC Cache deployment.
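The sizing rule above is simple arithmetic, sketched here as a shell function. The function name is hypothetical, the throughput values in the loop are sample inputs, and rounding a partial 2 GB/s step up to the next full step is an assumption rather than guidance from the example.

```shell
#!/usr/bin/env bash
set -euo pipefail

# clients_for_throughput GBPS
# Prints the suggested number of Standard_D2s_v3 clients: 12 for each
# 2 GB/s of cache throughput. A partial 2 GB/s step is rounded up
# (assumption made for this sketch).
clients_for_throughput() {
  local gbps="$1"
  echo $(( (gbps + 1) / 2 * 12 ))
}

for gbps in 2 4 8; do
  echo "${gbps} GB/s -> $(clients_for_throughput "$gbps") clients"
done
# Prints:
# 2 GB/s -> 12 clients
# 4 GB/s -> 24 clients
# 8 GB/s -> 48 clients
```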

Deployment Instructions

To run the example, execute the following instructions. This assumes use of Azure Cloud Shell. If you are installing into your own environment, you will need to follow the instructions to set up Terraform for the Azure environment.

Before starting, download the latest vdbench from https://www.oracle.com/technetwork/server-storage/vdbench-downloads-1901681.html. To download it, you will need to create an account with Oracle and accept the license. Upload the file to a storage account or a similar location where you can create a personal download URL.

- Browse to https://shell.azure.com

- Specify your subscription by running this command with your subscription ID: az account set --subscription YOUR_SUBSCRIPTION_ID. You will need to run this every time you restart your shell; otherwise the shell may default to the wrong subscription, and you will see an error similar to azurerm_public_ip.vm is empty tuple.

- Double check your HPC Cache prerequisites

- Get the terraform examples

mkdir tf

cd tf

git init

git remote add origin -f https://github.com/Azure/Avere.git

git config core.sparsecheckout true

echo "src/terraform/*" >> .git/info/sparse-checkout

git pull origin master

- Decide whether to run the NFS filer test or the Azure Blob Storage test, and cd to the corresponding directory:

- for Azure Storage Blob testing:

cd src/terraform/examples/HPC\ Cache/vdbench/azureblobfiler

- for NFS filer testing:

cd src/terraform/examples/HPC\ Cache/vdbench/nfsfiler

- Run code main.tf and edit the local variables section at the top of the file to match your preferences.

- Execute terraform init in the directory of main.tf.

- Execute terraform apply -auto-approve to build the HPC Cache cluster with a 12-node VMSS configured to run vdbench.
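Because a restarted shell may silently fall back to a different subscription, it can help to check the active subscription before running terraform apply. The following is a minimal sketch of such a guard; the function name `require_subscription` is hypothetical, and it assumes the Azure CLI is already logged in, as it is in Cloud Shell.

```shell
#!/usr/bin/env bash
set -euo pipefail

# require_subscription EXPECTED_ID
# Succeeds only if the Azure CLI's active subscription matches EXPECTED_ID.
require_subscription() {
  local expected="$1" active
  # "az account show" reports the subscription the CLI currently uses.
  active="$(az account show --query id --output tsv)"
  if [ "$active" != "$expected" ]; then
    echo "Active subscription is $active, expected $expected." >&2
    echo "Run: az account set --subscription $expected" >&2
    return 1
  fi
}
```

A possible use is `require_subscription YOUR_SUBSCRIPTION_ID && terraform apply -auto-approve`, so that the apply step runs only against the intended subscription.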

Using vdbench

- After deployment is complete, log in to the jumpbox as specified by the jumpbox_username and jumpbox_address terraform output variables. On the jumpbox, create the SSH private key file that vdbench will use, and paste your private key into it:

touch ~/.ssh/id_rsa

chmod 600 ~/.ssh/id_rsa

vi ~/.ssh/id_rsa

- Run az login, then execute the command from the vmss_addresses_command terraform output variable to get the IP address of one VMSS node. Run the following commands to copy the id_rsa file to that node and log in to it, replacing USERNAME with the jumpbox username and IP_ADDRESS with the IP address of the VMSS node:

scp ~/.ssh/id_rsa USERNAME@IP_ADDRESS:.ssh/.

ssh USERNAME@IP_ADDRESS

- During installation, copy_idrsa.sh was installed to ~/. on the vdbench client machine to make it easy to copy your private key to all vdbench clients. Run ~/copy_idrsa.sh to copy your private key to all vdbench clients and to add all clients to the "known hosts" list. (Note: If your ssh key requires a passphrase, some extra steps are needed to make this work. Consider creating a key that does not require a passphrase for ease of use.)
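If you want a dedicated key without a passphrase for this test environment, the sketch below shows one way to create it with ssh-keygen. The function name, the key comment, and the file name in the usage note are hypothetical. The key pair only helps if its public half is the one supplied to the deployment, which this sketch does not cover.

```shell
#!/usr/bin/env bash
set -euo pipefail

# make_test_key KEY_PATH
# Creates a 4096-bit RSA key pair with an empty passphrase at KEY_PATH
# (private key) and KEY_PATH.pub (public key). Refuses to overwrite an
# existing file.
make_test_key() {
  local key_path="$1"
  if [ -e "$key_path" ]; then
    echo "Refusing to overwrite existing key: $key_path" >&2
    return 1
  fi
  # -N "" sets an empty passphrase; -q suppresses the progress output.
  ssh-keygen -q -t rsa -b 4096 -N "" -C "vdbench-test" -f "$key_path"
  chmod 600 "$key_path"
}
```

For example, `make_test_key ~/.ssh/vdbench_id_rsa` creates the pair under a separate name so that an existing id_rsa is left untouched. A key without a passphrase is convenient for short-lived test clusters but should not be reused elsewhere.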

Memory test

- To run the memory test (approximately 20 minutes), issue the following command:

cd

./run_vdbench.sh inmem.conf uniquestring1

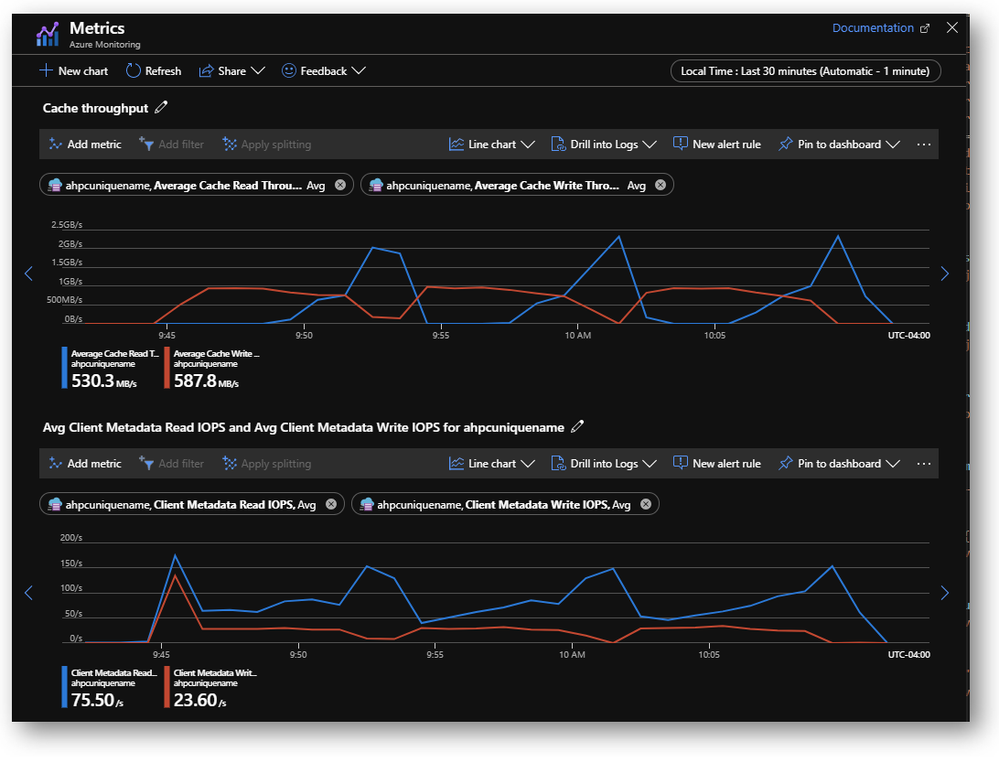

- Browse to the Azure HPC Cache resource in the portal to watch the performance metrics. You will see a similar performance chart to the following:

On-disk test

- To run the on-disk test (approximately 40 minutes) issue the following command:

cd

./run_vdbench.sh ondisk.conf uniquestring2

- Browse to the Azure HPC Cache resource in the portal to watch the performance metrics, as you did for the memory test.
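Both test commands take a unique string as their second argument. The source does not say what the string is used for; assuming it only needs to differ between runs, a timestamped label is one way to avoid reusing a value. The helper name `run_label` is hypothetical.

```shell
#!/usr/bin/env bash
set -euo pipefail

# run_label TEST_NAME
# Prints TEST_NAME followed by a timestamp, for example
# inmem-20240131-154500, to serve as the unique string for a run.
run_label() {
  local test_name="$1"
  echo "${test_name}-$(date +%Y%m%d-%H%M%S)"
}
```

On a vdbench client this could be used as `./run_vdbench.sh ondisk.conf "$(run_label ondisk)"`.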

Resources and references

The links below can help you get started using Terraform to provision and manage Azure HPC Cache resources. If you need additional information, visit our GitHub page at https://github.com/Azure/Avere or message our team through the tech community at https://techcommunity.microsoft.com/t5/user/viewprofilepage/user-id/602351.

- https://docs.microsoft.com/en-us/azure/terraform/terraform-overview

- https://www.terraform.io/docs/providers/index.html

- https://github.com/Azure/Avere/tree/master/src/terraform

- https://github.com/Azure/Avere/tree/master/src/terraform/examples/HPC%20Cache/vdbench
