Not able to get logs related to azure data factory mapping data flows from log analytics

Jul 23 2020 09:23 AM, last edited on Apr 08 2022 10:34 AM by TechCommunityAP

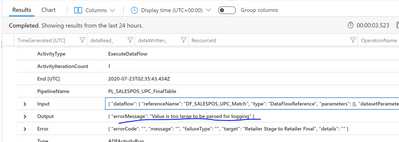

We are implementing a custom logging solution. Most of the information we need is already available in Log Analytics through the Data Factory analytics solution, but getting log information for data flows is a challenge. When we query it, the output contains this error: "Too large to parse".

Since data flows are a complex and critical piece of a pipeline, we urgently need data such as rows copied, skipped, and read for each activity within a data flow. Can you please help us get this information?

@IGaurav @scott Not sure whether this mention reaches Gaurav Malhotra of the Data Factory team.

- Labels: Azure Monitor

Aug 25 2020 08:53 AM

@Bharani365 A fix was recently deployed to reduce the impact of this error. Can you try again and let us know if the problem persists? In addition, if your data flow is too complex or uses too many partitions, simplifying it can further improve the situation. If you can share a run ID, we can analyze it to better understand the issue.
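
For anyone who can get hold of the full data flow activity output (for example from the pipeline run itself rather than the truncated Log Analytics field), the per-sink row counts asked about above could be pulled out roughly as follows. This is only a sketch: it assumes the output follows the `runStatus.metrics.<sink>.rowsWritten` and `runStatus.metrics.<sink>.sources.<source>.rowsRead` layout, and the function name `summarize_data_flow_metrics` and the sample payload are made up for illustration. It does not address the "Too large to parse" error itself.

```python
import json
from typing import Any, Dict, List


def summarize_data_flow_metrics(activity_output: Dict[str, Any]) -> List[Dict[str, Any]]:
    """Flatten per-sink row counts from a data flow activity output.

    Assumes the shape runStatus.metrics.<sink>.rowsWritten and
    runStatus.metrics.<sink>.sources.<source>.rowsRead. Missing keys
    yield None or empty results instead of raising.
    """
    metrics = activity_output.get("runStatus", {}).get("metrics", {})
    summary = []
    for sink_name, sink in metrics.items():
        sources = sink.get("sources", {})
        summary.append({
            "sink": sink_name,
            "rowsWritten": sink.get("rowsWritten"),
            # One rowsRead entry per source feeding this sink.
            "rowsRead": {name: src.get("rowsRead") for name, src in sources.items()},
        })
    return summary


# Hypothetical sample payload; the names and numbers are invented.
sample_output = json.loads("""
{
  "runStatus": {
    "metrics": {
      "sink1": {
        "rowsWritten": 100,
        "sources": {"source1": {"rowsRead": 120}}
      }
    }
  }
}
""")

for row in summarize_data_flow_metrics(sample_output):
    print(row["sink"], row["rowsWritten"], row["rowsRead"])
```

Using `.get` throughout keeps the sketch from failing when a sink has no `sources` block or the output is only partially populated, which seems likely for runs that hit the size limit.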