~ Mike Jacquet

These appear to be related problems, but in reality, there are several underlying causes that need to be explored and DPM settings explained / configured before optimal tape usage can be achieved.

Tape drive / Library hardware and device drivers.

Data Protection Manager does not ship with or use Microsoft proprietary device drivers for tape drives and libraries; instead, DPM relies on Windows 2008 X64 compatible device drivers from the OEM vendor of the hardware. Our guidance to customers is that if the library is listed in the Windows Server Catalog under the hardware / storage section and shows as compatible with Windows 2008 X64 and Windows 2008 R2, it should work fine with Data Protection Manager.

Windows Server Catalog: http://www.windowsservercatalog.com

One problem that the DPM product group discovered is that some tape drives did not correctly report or process end of media (EOM) when the end of tape was reached and instead reported an IO_DEVICE_ERROR (0x8007045D). This problem would cause DPM tape backup jobs to fail any time a tape filled. Another problem is that some tape drives did not handle multiple buffers well, which also resulted in IO errors being reported. To mitigate both problems, which were outside of our control, logic was added to the DPM agent to handle them.

Today, if the tape driver returns an IO_DEVICE_ERROR, DPM automatically converts it to END_OF_TAPE_REACHED and spans to the next media without any issues. However, that brings us to the first reported problem: DPM does not fill tapes and grabs another tape after writing only a few gigabytes.

Now you ask, how can I tell if the tape drive / driver / firmware combination in use is hitting these behind-the-scenes device I/O errors (0x8007045D)?

To see if the tape drive is reporting IO error 0x8007045D that equals "The request could not be performed because of an I/O device error", you can run the following commands on the DPM server.

- Open an Administrative command prompt.

- CD C:\Program Files\Microsoft DPM\DPM\Temp

- Find /I "0x8007045D" MSDPM*.Errlog >C:\temp\MSDPM0x8007045D.TXT

- Notepad C:\temp\MSDPM0x8007045D.TXT

- See if there are any entries in the file. If not, look in the DPMRA logs.

- Find /I "0x8007045D" DPMRA*.Errlog >C:\temp\DPMRA0x8007045D.TXT

- Notepad C:\temp\DPMRA0x8007045D.TXT

Also search for "-2147023779" which is the decimal equivalent.

NOTE The DPMLA*.errlog files may contain 0x8007045D errors and that is OK, so do not look in those files.
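The manual search above can also be scripted. The following is a minimal Python sketch, not a DPM tool: the function names `to_signed32` and `scan_errlogs` are hypothetical, and it assumes the error logs are plain text readable as UTF-8. It looks for both the hex code and its signed decimal form in the MSDPM and DPMRA logs and leaves the DPMLA logs out.

```python
import os

def to_signed32(value):
    """Interpret an unsigned 32-bit value (such as an HRESULT) as a signed integer."""
    value &= 0xFFFFFFFF
    return value - 0x100000000 if value & 0x80000000 else value

def scan_errlogs(log_dir, code=0x8007045D, prefixes=("MSDPM", "DPMRA")):
    """Return (file name, line number, text) for every log line mentioning the code.

    Only files named <prefix>*.errlog are read, so DPMLA logs are skipped.
    File names and log text are compared case-insensitively.
    """
    wanted = tuple(prefix.lower() for prefix in prefixes)
    # Search for the hex form and for the signed decimal form of the same code.
    needles = ("0x%08x" % code, str(to_signed32(code)))
    hits = []
    for name in sorted(os.listdir(log_dir)):
        lowered_name = name.lower()
        if not (lowered_name.startswith(wanted) and lowered_name.endswith(".errlog")):
            continue
        path = os.path.join(log_dir, name)
        # Assumption: the logs decode as text; undecodable bytes are replaced.
        with open(path, "r", encoding="utf-8", errors="replace") as handle:
            for number, line in enumerate(handle, start=1):
                lowered_line = line.lower()
                if any(needle in lowered_line for needle in needles):
                    hits.append((name, number, line.rstrip()))
    return hits

# Example call (the path is the DPM Temp folder used in the steps above):
# for name, number, text in scan_errlogs(r"C:\Program Files\Microsoft DPM\DPM\Temp"):
#     print(name, number, text)
```

The conversion helper also shows why the two search strings are equivalent: 0x8007045D read as a signed 32-bit integer is -2147023779.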

How do I fix this I/O 0x8007045D error problem?

1. If your tape library has a feature to auto-clean a dirty tape drive, you must disable that feature. DPM does not support the auto-clean feature being enabled during normal operation.

Example: Uncheck the option in your library management software or library control panel.

Consider the following…

A) DPM starts and finishes some backup jobs writing possibly several small data sources on to a tape.

B) During the next backup to that same tape, the tape drive reports dirty status in the middle of a data source backup.

C) The library auto-clean feature yanks the tape away from DPM to clean the drive.

D) DPM fails the backup job because the drive no longer contains media.

E) That leaves the tape partially full, holding only the successful recovery points created earlier, and DPM then marks the tape offsite ready because it experienced an IO error 0x8007045D under the covers.

F) It now appears that DPM is not utilizing the tape to full capacity as you expect.

You can tell DPM that a particular slot contains a cleaning tape, but you must run a tape cleaning job manually when a drive becomes dirty.

For DPM 2010: How to Clean a Tape Drive:

http://technet.microsoft.com/en-us/library/ff399452.aspx

For DPM 2007: How to Clean a Tape Drive:

http://technet.microsoft.com/en-us/library/bb795736.aspx

2. Check with the tape drive / library OEM vendor to see if there are any new firmware or driver updates available, and if so, update to the latest revision. Also check your controller settings and your SCSI or Fibre Channel connections, including termination.

3. By default, DPM uses 10 tape buffers when writing to the tape drive. The BufferQueueSize registry setting below reduces the number of buffers to three. Most of the time, that is enough to reduce or eliminate the IO error, and it does not negatively affect tape backup performance. However, you may need to reduce it further if the value of 3 does not help.

NOTE If you need to reduce the setting below 3, it is very possible that backups will succeed without errors, however tape restores or tape library inventory jobs may hang. Should that occur, you will need to increase the BufferQueueSize entry back up to three or more to do the restore, then reduce it again for normal backups.

Copy the text below into Notepad and save it as BufferQ.REG on the DPM server.

Windows Registry Editor Version 5.00

[HKEY_LOCAL_MACHINE\SOFTWARE\Microsoft\Microsoft Data Protection Manager\Agent]

"BufferQueueSize"=dword:00000003

Right-click BufferQ.REG and choose the "merge" or "open with registry editor" option to add it to the registry. Stop and restart the DPMRA service.

Another solution that also seems to help resolve the above issue is to add the following Storport key and BusyRetryCount value to each of the tape devices.

HKEY_LOCAL_MACHINE\System\CurrentControlSet\Enum\SCSI\<DEVICEID>\<INSTANCE>\Device Parameters\Storport\

Value - BusyRetryCount

Type - DWORD

Data - 250 decimal (0xFA hex)

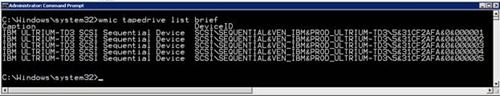

To get a list of all the tape devices on your DPM server that need the registry value added, run the following command from an administrative command prompt. It returns a list of tape drive SCSI\DeviceID\Instance paths that you can use to make the above change.

C:\Windows\system32>wmic tapedrive list brief

Below are the registry keys to add to the DPM server based on the output of the wmic command.

Windows Registry Editor Version 5.00

[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Enum\SCSI\Sequential&Ven_IBM&Prod_ULTRIUM-TD3\5&31cf2afa&0&000001\Device Parameters\StorPort]

"BusyRetryCount"=dword:000000fa

[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Enum\SCSI\Sequential&Ven_IBM&Prod_ULTRIUM-TD3\5&31cf2afa&0&000002\Device Parameters\StorPort]

"BusyRetryCount"=dword:000000fa

[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Enum\SCSI\Sequential&Ven_IBM&Prod_ULTRIUM-TD3\5&31cf2afa&0&000003\Device Parameters\StorPort]

"BusyRetryCount"=dword:000000fa

[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Enum\SCSI\Sequential&Ven_IBM&Prod_ULTRIUM-TD3\5&31cf2afa&0&000004\Device Parameters\StorPort]

"BusyRetryCount"=dword:000000fa

[HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Enum\SCSI\Sequential&Ven_IBM&Prod_ULTRIUM-TD3\5&31cf2afa&0&000005\Device Parameters\StorPort]

"BusyRetryCount"=dword:000000fa
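When a library has many drives, typing these entries by hand is error-prone. The following Python sketch builds the same .reg text from a list of device instance paths. The function name `busy_retry_reg` is hypothetical, and the instance path in the usage comment is copied from the example above; substitute the paths reported for your own drives.

```python
def busy_retry_reg(device_instances, retry_count=250):
    """Build .reg file text that sets BusyRetryCount for each tape device instance.

    device_instances: paths below the Enum key, each made of the bus name,
    the device ID and the instance, separated by backslashes.
    """
    base = r"HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Enum"
    lines = ["Windows Registry Editor Version 5.00", ""]
    for instance in device_instances:
        # Drop stray leading or trailing backslashes before joining the path.
        key = "\\".join([base, instance.strip("\\"), "Device Parameters", "StorPort"])
        lines.append("[" + key + "]")
        # .reg DWORD values are written as eight hexadecimal digits.
        lines.append('"BusyRetryCount"=dword:%08x' % retry_count)
        lines.append("")
    return "\n".join(lines)

# Example call:
# print(busy_retry_reg([r"SCSI\Sequential&Ven_IBM&Prod_ULTRIUM-TD3\5&31cf2afa&0&000001"]))
```

Review the generated text before merging it, since a wrong instance path simply creates an unused key rather than reporting an error.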

Data Protection Manager 2007 / 2010 Specific Configuration Settings

Let us explore some Data Protection Manager specific configuration settings that have a large impact on how long a tape can be used for backup jobs.

TAPE CO-LOCATION - This feature allows data sources from different protection groups that share the same recovery goals to be written to the same tape. This helps utilize tapes more fully by filling them with backups from across protection groups. Only protection groups that share the exact same tape recovery goals and encryption settings can be co-located on the same tape.

NOTE DPM 2012 has an enhanced option that allows you to choose which protection groups can be co-located regardless of retention goals.

The LT-Goals.PS1 DPM PowerShell script below can be run on a DPM 2007 / 2010 server to analyze all your protection groups and list which ones can be co-located, based on common goals and encryption settings across protection groups.

Copy and paste the script below into Notepad, save it as LT-Goals.PS1, then run it in the DPM PowerShell window.

cls

$confirmpreference='none'

$dpmversion = ((get-process | where {$_.name -eq "msdpm" }).fileversion)

write-host "DPM Version - " $dpmversion "`nCollecting Long Term protection Information. Please wait..." -foreground yellow

$dpmserver = (&hostname)

out-file longterm.txt

$pg = @(get-protectiongroup $dpmserver | where { $_.ProtectionMethod -like "*Long-term using tape*"})

write-host "We have" $pg.count "groups with tape protection"

foreach ($longterm in $pg)

{

"-----------------------------------------------------------`n" | out-file longterm.txt -append

"" | out-file longterm.txt -append

"Protection Group " + $longterm.friendlyname | out-file longterm.txt -append

"" | out-file longterm.txt -append

switch ($dpmversion.substring(0,1))

{

2 { $policySchedule = @(Get-PolicySchedule -ProtectionGroup $longterm -longterm)}

3 { $policySchedule = @(Get-PolicySchedule -ProtectionGroup $longterm -longterm tape)}

default { write-host "NOT TESTED ON THIS DPM VERSION. Exiting script" -foreground red;exit }

}

$tb = Get-TapeBackupOption $longterm;

"Is encryption enabled? " + $tb.OffsiteEncryption | out-file longterm.txt -append

"" | out-file longterm.txt -append

$tb.RetentionPolicy | out-file longterm.txt -append

# $tb = $tb.labelinfo

$label = @($tb.label);

$count = $policySchedule.count -1

while ( $count -ne -1)

{

if ($label[$count].length -eq 0 -or $label[$count].length -eq $null)

{

"Default Label Name" | out-file longterm.txt -append

}

else

{

"Tape Label: " + $label[$count] | out-file longterm.txt -append

}

$policyschedule[$count] | fl * | out-file longterm.txt -append

# (Get-TapeBackupOption $longterm).RetentionPolicy | out-file longterm.txt -append

$count--

}

}

#exit

if ($pg.count -gt 1)

{

$pgcount=0

while ($pgcount -ne ($pg.count-1))

{

$collocation = @($pg[$pgcount].friendlyname)

write-host $pgcount -background green

(Get-TapeBackupOption $pg[$pgcount]).RetentionPolicy | out-file policyretention.txt

(Get-TapeBackupOption $pg[$pgcount]).OffsiteEncryption | out-file policyretention.txt -append

write-host "policyretention.txt" -foreground green

type policyretention.txt

$pgcountinnerloop = 0

while ($pgcountinnerloop -ne $pg.count)

{

write-host $pgcountinnerloop -background yellow

if ($pgcount -eq $pgcountinnerloop) {$pgcountinnerloop++}

(Get-TapeBackupOption $pg[$pgcountinnerloop]).RetentionPolicy | out-file policyretention1.txt

(Get-TapeBackupOption $pg[$pgcountinnerloop]).OffsiteEncryption | out-file policyretention1.txt -append

write-host "policyretention1.txt" -foreground green

type policyretention1.txt

$compare = Compare-Object -ReferenceObject $(get-content policyretention.txt) -DifferenceObject $(Get-content policyretention1.txt)

if ($compare.length -eq $null)

{

if ($pgcountinnerloop -lt $pgcount)

{

Break

}

else

{

$collocation = $collocation + $pg[$pgcountinnerloop].friendlyname

$collocation

write-host "done"

$collocationcount++

}

}

$pgcountinnerloop++

}

if ($collocation.count -gt 1)

{

"-----------------------------------------------------------" | out-file longterm.txt -append

"Protection Groups that can share the same tape based on recovery goals/Encryption:" | out-file longterm.txt -append

" " | out-file longterm.txt -append

write-host $collocation

foreach ($collocation1 in $collocation)

{

$collocation1 | out-file longterm.txt -append

}

}

$pgcount++

}

}

As described in the TechNet articles below, after you enable tape co-location, DPM has two configurable options [ TapeWritePeriodRatio and TapeExpiryTolerance ] that determine when a tape gets marked offsite ready and whether a tape that is not yet marked offsite ready will be used for another backup job.

Enabling tape Co-Location:

DPM 2007 -

http://technet.microsoft.com/en-us/library/cc964296.aspx

DPM 2010 -

http://technet.microsoft.com/en-us/library/ff399230.aspx

When tape colocation is enabled, a tape will be shown as Offsite Ready when any one of the following conditions is met:

- The tape is full or is marked full.

(This includes the I/O 0x8007045D error problem described above.)

- One of the datasets has expired.

- Write-period ratio has been crossed.

(By default, this is the first backup time + 15 percent of the retention range.)
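The three conditions above can be read as a simple "any one of" check. The following Python sketch only illustrates that reading; it is not DPM's implementation. The function name `is_offsite_ready` and its parameters are hypothetical, and it assumes the write period is "crossed" once the current time is later than the first backup time plus the ratio times the retention range.

```python
from datetime import datetime, timedelta

def is_offsite_ready(now, first_backup, retention, dataset_expiries,
                     marked_full=False, write_period_ratio=0.15):
    """Return True when any one of the three listed conditions holds."""
    # Condition 3: first backup time + (ratio * retention range) has passed.
    write_period_end = first_backup + retention * write_period_ratio
    # Condition 2: at least one dataset on the tape has expired.
    any_expired = any(expiry <= now for expiry in dataset_expiries)
    # Condition 1 is passed in directly as marked_full.
    return marked_full or any_expired or now > write_period_end

# Example: 14-day retention, first backup on 7 May 2014, default 15% ratio.
first = datetime(2014, 5, 7)
retention = timedelta(days=14)
expiries = [first + retention]
print(is_offsite_ready(datetime(2014, 5, 8), first, retention, expiries))   # within 2.1 days
print(is_offsite_ready(datetime(2014, 5, 10), first, retention, expiries))  # past 2.1 days
```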

When a tape is marked offsite ready, no additional data sets will be written to that tape until ALL recovery points expire. Once a tape is marked as expired, DPM will show the tape as expired in the DPM console and can overwrite the tape during subsequent backups. DPM will always favor a free tape over an expired tape when it searches for a tape to use if a new tape is required.

NOTE If DPM library sharing is enabled, by default only the DPM server that initially wrote to that tape can re-use it unless you manually free the expired tape. Once the tape is marked as free, then any DPM server sharing the library will be able to use that free tape.

TapeWritePeriodRatio - This is a DPM Global property that can be set only when colocation is enabled and indicates the number of days for which data can be written on to a tape as a percentage of the retention period of the first data set written to the tape. This is a global setting and affects all protection groups.

The TapeWritePeriodRatio value can be between 0.0 and 1.0; the default value is 0.15 (i.e. 15%).

NOTE DPM 2012 does not have this global property and instead has additional configuration options in the GUI to allow different write periods for different protection groups. This adds greater flexibility in determining how long you want to use a tape at the protection group(s) level.

As an example on the impact of the TapeWritePeriodRatio setting having a default of 15% - if you have a protection group doing daily backups, with a retention period of 2 weeks (14 days), the tape will be marked offsite ready after only 2.1 days regardless of how much / little data was written to the tape. If you desire DPM to write to the tape for a week, you would need to change the TapeWritePeriodRatio to 50% using the DPM power shell command below.

Set-DPMGlobalProperty -DPMServerName <dpm server name> -TapeWritePeriodRatio .5
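The arithmetic behind that example is a single multiplication, and it can be turned around to find the ratio for a desired write window. The helper names below are hypothetical and the sketch only restates the calculation described above.

```python
def write_period_days(retention_days, ratio=0.15):
    """Days during which DPM keeps writing to a tape after the first backup."""
    return retention_days * ratio

def ratio_for_write_period(desired_days, retention_days):
    """TapeWritePeriodRatio needed to keep a tape open for desired_days."""
    ratio = desired_days / retention_days
    # The setting only accepts values from 0.0 to 1.0.
    if not 0.0 <= ratio <= 1.0:
        raise ValueError("write period must be between 0 and the retention period")
    return ratio

print(round(write_period_days(14), 2))   # default 15% of a 14-day retention
print(ratio_for_write_period(7, 14))     # ratio for a one-week write window
```

For the 14-day retention in the example, the default ratio gives about 2.1 days, and a one-week write window corresponds to a ratio of 0.5.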

TapeExpiryTolerance - This is a registry setting that indicates the time window within which the expiry date of the next dataset to be written to the tape must fall. It is expressed as a percentage. The default value is 17 percent if the registry value is not present.

This is a DWORD type registry value located under HKLM\Software\Microsoft\Microsoft Data Protection Manager\1.0\Colocation. DPM does not create the Colocation key automatically. You must manually create the Colocation key, then create a new TapeExpiryTolerance value under it.

There is a misconception that the tape co-location feature will only co-locate data sets with the same recovery goals onto the same tape. For example: a weekly backup will never co-locate on a tape that already has monthly backups written. That is not correct, as DPM evaluates each tape that is not marked offsite ready to see if the data set about to be written meets the following check. It does this to help meet the goal of fully utilizing tapes without preventing the tape from expiring on time. If you set TapeExpiryTolerance too high (for example 100%), you run the risk of placing shorter-retention data sets on tapes with a longer retention goal and having the tape marked offsite ready prematurely, which defeats the goal of fully utilizing tapes. Generally speaking, setting this to 60 will provide a good benefit and should not cause any problems.

Let FurthExpDate = the furthest expiry date among the expiry dates of all the datasets already on the tape.

Time Window:

Lower Bound = FurthExpDate - TapeExpiryTolerance * (FurthExpDate - today's date)

Upper Bound = FurthExpDate + TapeExpiryTolerance * (FurthExpDate - today's date)

So, the current dataset will be co-located on the given tape only if its expiry date falls within the Time Window (both bounds inclusive).
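To see how the window behaves, the check can be sketched in a few lines of Python. This is an illustration of the formula as written, not DPM's code: the function names are hypothetical, the percentage is assumed to be applied as a fraction (17 becomes 0.17), and the dates are made up for the demonstration rather than taken from the spreadsheet mentioned later.

```python
from datetime import datetime, timedelta

def colocation_window(furthest_expiry, today, tolerance_percent=17):
    """Return the (lower, upper) bounds for the next dataset's expiry date."""
    margin = (furthest_expiry - today) * (tolerance_percent / 100.0)
    return furthest_expiry - margin, furthest_expiry + margin

def can_colocate(dataset_expiry, furthest_expiry, today, tolerance_percent=17):
    """True when the dataset's expiry date falls inside the window, bounds inclusive."""
    lower, upper = colocation_window(furthest_expiry, today, tolerance_percent)
    return lower <= dataset_expiry <= upper

# Hypothetical dates: the furthest expiry already on the tape is 10 days away,
# and the next dataset would expire 3 days after that.
today = datetime(2014, 6, 1)
furthest = datetime(2014, 6, 11)
candidate = datetime(2014, 6, 14)
print(can_colocate(candidate, furthest, today, 17))  # window is +/- 1.7 days
print(can_colocate(candidate, furthest, today, 60))  # window is +/- 6 days
```

Because the margin is a percentage of the time remaining until the furthest expiry date, the window narrows as the tape ages, so a small tolerance leaves little room for a dataset whose expiry date is a few days off.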

Using the same example we used before, you have a protection group doing daily backups, with a retention period of 2 weeks (14 days), and you set the TapeWritePeriodRatio to .5 (50%) because you want DPM to use the tape for 1 week. But with the default TapeExpiryTolerance of 17%, you may not achieve that if one backup fails for any reason and you miss a day's backup.

In the below example, I have set the TapeWritePeriodRatio to .50 and the first backup set was written to the tape on 5/7. Notice that offsite ready will not be set until after the 7 days, as desired, based on the 50% TapeWritePeriodRatio setting. The last backup set was written on 5/9 and the next backup set to be written is on 5/11, due to a server problem on 5/10 that prevented that day's backup from occurring. With the default 17% TapeExpiryTolerance window, the next dataset's expiry date does not fall between the lower and upper bounds. This results in DPM picking a new tape for the next backup set; however, since the TapeWritePeriodRatio has not been crossed and no recovery point has expired, the original tape is not marked offsite ready. This is an example of why a tape will not be used for any additional backups even though there is no visual indication, which makes you question why DPM is using more tapes than necessary.

Now, changing nothing more than the TapeExpiryTolerance from 17% to 60%, notice how that time window has expanded and allows the next data set on 5/11 to be written on that tape.

I have shared this Excel spreadsheet to help you calculate offsite ready at the following site: http://1drv.ms/1vKNy5y

In conclusion, I hope this explains what you may have experienced and helps you configure your DPM server so it can fully utilize your tapes during future backups.

Mike Jacquet | Senior Support Escalation Engineer | Microsoft GBS Management and Security Division
