Azure Machine Learning (http://azure.microsoft.com/en-us/services/machine-learning) enables businesses to perform cloud-based predictive analytics to understand what their data means. Machine learning algorithms learn from data, so it is critical that you feed them the right data for the problem you want to solve. Such data can be business transactional data or sensitive business data, stored either on-premises or in the cloud. Even if you have good data, you need to make sure that it is in a useful scale and format. The more disciplined you are in handling data, the more consistent and better the results you are likely to achieve. The data preparation process is therefore critical for machine learning. In general, data preparation can be summarized in three steps:

- Select Data

- Preprocess Data

- Transform Data

Today, Azure Machine Learning Studio (https://studio.azureml.net/) already provides ways for you to import data (https://azure.microsoft.com/en-us/documentation/articles/machine-learning-import-data/), preprocess data (http://azure.microsoft.com/en-us/documentation/videos/preprocessing-data-in-azure-ml-studio/) and select and engineer features (https://azure.microsoft.com/en-us/documentation/articles/machine-learning-feature-selection-and-engineering/).

In this blog, we will show you how you can unleash the power of SQL Server Integration Services (https://msdn.microsoft.com/en-us/library/ms141026(v=sql.120).aspx) (SSIS) with the Azure Feature Pack (https://msdn.microsoft.com/en-US/library/mt146770.aspx) in your data preparation process for Azure Machine Learning. This combination lets you select from more data sources and gives you more options for data preprocessing and transformation.

Select Data

Many businesses have multiple data sources within their IT infrastructure. Some data is in relational databases such as SQL Server, Oracle or Teradata, while some is in files such as Excel, XML, flat files or Azure blob files in the cloud. Selecting the subset of all available data that is relevant to your machine learning algorithms is a key step in the data preparation process. SQL Server Integration Services (SSIS, https://msdn.microsoft.com/en-us/library/ms141026.aspx) provides many source connectivity options, such as https://msdn.microsoft.com/en-US/library/mt146775(v=sql.120).aspx , https://msdn.microsoft.com/en-US/library/hh758687(v=sql.120).aspx , https://msdn.microsoft.com/en-US/library/ms141696(v=sql.120).aspx , https://msdn.microsoft.com/en-US/library/ms137897(v=sql.120).aspx , https://msdn.microsoft.com/en-US/library/ms141683(v=sql.120).aspx , https://msdn.microsoft.com/en-US/library/ms139941(v=sql.120).aspx , https://www.microsoft.com/en-us/download/details.aspx?id=44582 , https://msdn.microsoft.com/en-US/library/dn535517(v=sql.120).aspx and https://msdn.microsoft.com/en-us/library/ms140080(v=sql.120).aspx , for you to connect to different data sources and select the relevant subset of the available data.

Preprocess Data

After you have selected your data, the next step is to preprocess it and get it into a state that you can work with. The usual data preprocessing steps are formatting, cleansing, and sampling.

- Formatting – Data from various sources may not be in a format that is suitable and consistent for you to work with. You can use the https://msdn.microsoft.com/en-us/library/ms140080(v=sql.120).aspx components to pull data from both SQL Server and Oracle databases, manipulate it using https://msdn.microsoft.com/en-us/library/ms141713(v=sql.120).aspx , then serialize it into a file format such as CSV and store it in Azure Blob Storage using the https://msdn.microsoft.com/en-US/library/mt146772(v=sql.120).aspx , where Azure Machine Learning can consume it.

- Cleansing – Cleansing refers not only to fixing missing or bad data, but also to anonymizing and removing sensitive information such as personally identifiable information (PII). You can use the https://msdn.microsoft.com/en-US/library/ee677619(v=sql.120).aspx , https://msdn.microsoft.com/en-us/library/ms137640(v=sql.120).aspx and/or other https://msdn.microsoft.com/en-us/library/ms141713(v=sql.120).aspx to correct data or to mask and remove PII data.

- Sampling – You will often have far more selected data available than you need for your machine learning algorithms. More data can result in much longer running times and higher computational requirements. Sometimes it’s better to take a smaller sample of the selected data for your machine learning process to enable faster prototyping and testing of your solution. In the SSIS https://msdn.microsoft.com/en-us/library/ms140080(v=sql.120).aspx , you can add a filter in the source components, and/or use transformation components such as http://msdn.microsoft.com/query/dev10.query?appId=Dev10IDEF1&l=en-US&k=k(sql11.dts.designer.conditionalsplittrans.f1)&rd=true to select a smaller set of data.
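The cleansing and sampling steps above can be illustrated outside of SSIS with a small Python sketch. This is not SSIS code: the column names (`cardholder`, `amount`), the salt value and the helper functions are hypothetical, and the salted hash is only one possible masking technique. In a real package the same logic would live in the SSIS components described above.

```python
import hashlib
import random

def mask_pii(value, salt="demo-salt"):
    """Replace a sensitive value with a truncated, salted SHA-256 digest."""
    digest = hashlib.sha256((salt + value).encode("utf-8")).hexdigest()
    return digest[:16]

def cleanse(rows):
    """Drop rows with a missing amount and mask the cardholder name."""
    cleaned = []
    for row in rows:
        if row.get("amount") is None:
            continue  # skip records with missing data
        masked = dict(row)
        masked["cardholder"] = mask_pii(row["cardholder"])
        cleaned.append(masked)
    return cleaned

def sample(rows, fraction, seed=42):
    """Return a reproducible random sample of roughly `fraction` of the rows."""
    rng = random.Random(seed)
    k = min(len(rows), max(1, round(len(rows) * fraction)))
    return rng.sample(rows, k)

# Hypothetical input rows, standing in for data selected from a source.
rows = [
    {"cardholder": "Alice Smith", "amount": 12.50},
    {"cardholder": "Bob Jones", "amount": None},
    {"cardholder": "Carol White", "amount": 80.00},
    {"cardholder": "Dan Brown", "amount": 5.25},
]

cleaned = cleanse(rows)
subset = sample(cleaned, fraction=0.5)
```

A fixed seed keeps the sample reproducible between runs, which helps when you compare models trained on the same subset.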

Transform Data

After you have preprocessed the data, the last step to consider is transforming it. Machine learning algorithms are only as good as the data that is used to train them. Good training data is usually in a form that is optimized for learning and generalization. The process of putting the data into this optimal format is known in the industry as feature transformation, and there are three common types:

- Scaling – The preprocessed data may contain attributes with a mixture of scales and units for quantities such as volume, weight (kilograms) or currency. You can use https://msdn.microsoft.com/en-us/library/ms141713(v=sql.120).aspx to transform and standardize your data to the same scale.

- Decomposition – Sometimes it may be useful to split the data into constituent parts for machine learning training. For example, you can use https://msdn.microsoft.com/en-us/library/ms141713(v=sql.120).aspx and/or https://msdn.microsoft.com/en-us/library/ms137640(v=sql.120).aspx to split the address “One Microsoft Way, Redmond, WA, 98052” into additional features like “Address” (One Microsoft Way), “City” (Redmond), “State” (WA) and “Zip” (98052), so that the learning algorithm can group more disparate transactions together and discover broader patterns – perhaps some merchant zip codes experience more fraudulent activity than others.

- Aggregation – Conversely, it is sometimes more useful to aggregate data to make it more meaningful for your machine learning algorithms. For example, there may be a data instance for each time a credit card is swiped; these instances could be aggregated into a count of the transactions made. You can use https://msdn.microsoft.com/en-us/library/ms141713(v=sql.120).aspx and/or https://msdn.microsoft.com/en-us/library/ms137640(v=sql.120).aspx to achieve that as well.
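To make the three transformation types concrete, here is a minimal Python sketch of each. It is an illustration only, not SSIS code: the function names and the `card_id` field are hypothetical, min-max scaling is just one of several possible scaling choices, and the address splitter assumes the simple four-part, comma-separated format used in the example above.

```python
from collections import Counter

def min_max_scale(values):
    """Scaling: map numeric values onto the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [0.0 for _ in values]  # all values identical
    return [(v - lo) / (hi - lo) for v in values]

def split_address(address):
    """Decomposition: split 'street, city, state, zip' into separate features."""
    street, city, state, zip_code = [part.strip() for part in address.split(",")]
    return {"Address": street, "City": city, "State": state, "Zip": zip_code}

def count_transactions(transactions):
    """Aggregation: count the number of swipes per card."""
    return Counter(t["card_id"] for t in transactions)

scaled = min_max_scale([10.0, 20.0, 40.0])
parts = split_address("One Microsoft Way, Redmond, WA, 98052")
counts = count_transactions([
    {"card_id": "A", "amount": 12.5},
    {"card_id": "B", "amount": 3.0},
    {"card_id": "A", "amount": 40.0},
])
```

Real addresses are rarely this regular, so a production package would need more defensive parsing than a plain comma split.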

Example: Using SSIS to prepare data for a Credit Cardholder purchase behavior prediction

Build the SSIS Data Flow for data preparation

Assume that you are working with a Cluster Model (see https://azure.microsoft.com/en-gb/documentation/articles/machine-learning-r-csharp-cluster-model/ about building the Cluster Model in Azure Machine Learning) to predict the behavior of credit cardholders. The model needs to be trained with credit card transaction data, which is stored in an on-premises SQL Server database.

To prepare the training data, you can build an SSIS package (https://msdn.microsoft.com/en-us/library/ms169917(v=sql.120).aspx) and design its Data Flow (https://msdn.microsoft.com/en-us/library/ms140080(v=sql.120).aspx) using the following components:

1) OLE DB Source (https://msdn.microsoft.com/en-us/library/ms141696(v=sql.120).aspx): extract the “Customer”, “Credit Card”, and “Transaction” tables from SQL Server.

2) Merge Join (https://msdn.microsoft.com/en-us/library/ms141775(v=sql.120).aspx): join data in the three tables to combine the columns.

3) Conditional Split (https://msdn.microsoft.com/en-us/library/ms137886(v=sql.120).aspx): filter out the expired data by applying conditions.

4) Script Component (https://msdn.microsoft.com/en-us/library/ms137640(v=sql.120).aspx): run .NET (C#/VB) code to perform a specific transformation on the data. In this case, mask the column that contains customer PII data.

5) Azure Blob Destination (https://msdn.microsoft.com/en-US/library/mt146772.aspx): load the integrated, filtered and masked data into Azure Blob Storage, where it will be ready for Azure Machine Learning to consume.
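The logic of the five steps above can be sketched in plain Python to show what the data flow does to each row. This is a stand-in for illustration, not the SSIS package itself. The table contents, column names and `prepare` helper are hypothetical, "expired data" is assumed here to mean transactions on expired cards, the join uses dictionary lookups instead of a sorted merge join, and the CSV is written to an in-memory string instead of being uploaded to Azure Blob Storage.

```python
import csv
import hashlib
import io
from datetime import date

# Hypothetical rows standing in for the three SQL Server tables (step 1).
customers = [
    {"customer_id": 1, "name": "Alice Smith"},
    {"customer_id": 2, "name": "Bob Jones"},
]
cards = [
    {"card_id": 10, "customer_id": 1, "expires": date(2030, 1, 31)},
    {"card_id": 11, "customer_id": 2, "expires": date(2010, 1, 31)},
]
transactions = [
    {"card_id": 10, "amount": 25.0},
    {"card_id": 10, "amount": 40.0},
    {"card_id": 11, "amount": 15.0},
]

def prepare(customers, cards, transactions, today):
    """Join the tables, drop rows for expired cards, and mask the name."""
    customers_by_id = {c["customer_id"]: c for c in customers}
    cards_by_id = {c["card_id"]: c for c in cards}
    prepared = []
    for txn in transactions:
        card = cards_by_id.get(txn["card_id"])            # step 2: join
        if card is None:
            continue
        customer = customers_by_id.get(card["customer_id"])
        if customer is None:
            continue
        if card["expires"] < today:                       # step 3: filter
            continue
        masked = hashlib.sha256(                          # step 4: mask PII
            customer["name"].encode("utf-8")).hexdigest()[:16]
        prepared.append({"customer": masked,
                         "card_id": card["card_id"],
                         "amount": txn["amount"]})
    return prepared

def to_csv(rows):
    """Step 5 stand-in: serialize rows to CSV text instead of uploading."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer,
                            fieldnames=["customer", "card_id", "amount"],
                            lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()

prepared = prepare(customers, cards, transactions, today=date(2015, 7, 1))
csv_text = to_csv(prepared)
```

Passing `today` as a parameter keeps the filter deterministic, which makes the sketch easier to test than one that reads the system clock.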

As the next step, you can deploy the SSIS package (https://msdn.microsoft.com/en-us/library/hh231102(v=sql.120).aspx) and schedule its execution using SQL Server Agent. This gives you an operational workflow for preparing the data that trains the Machine Learning model.

Consume prepared data in Machine Learning experiment

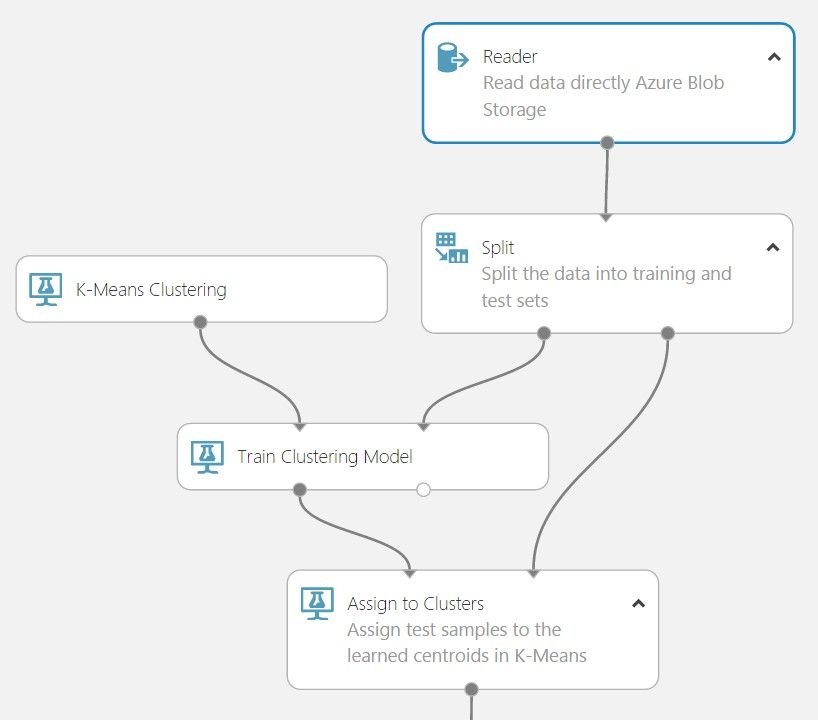

Once the SSIS package has uploaded the prepared data to Azure Blob Storage, you can use the Reader module (https://msdn.microsoft.com/library/azure/dn905997#bkmk_AzureBlob) in Machine Learning Studio to load the data into an experiment. The Reader module uses this data to create a dataset that can be connected to any other module that accepts a dataset on its input port.

Summary

Building an advanced analytics data pipeline with machine learning capabilities is just like building with LEGO: you can build it with different patterns and possibilities. You can now use Azure Machine Learning Studio (https://studio.azureml.net/), SSIS (https://msdn.microsoft.com/en-us/library/ms141026(v=sql.120).aspx), or both to perform the various steps of the data preparation process. Specifically, SSIS can be your on-premises data preparation solution to connect, select, preprocess and transform data on-premises before moving it to Azure for your machine learning experiment!

Try it yourself

To build and run the SSIS package, please download and install:

https://www.microsoft.com/en-us/download/details.aspx?id=42313

http://www.microsoft.com/en-us/download/details.aspx?id=47366

Related document:

http://blogs.msdn.com/b/ssis/archive/2015/06/25/doing-more-with-sql-server-integration-services-feature-pack-for-azure.aspx