How Microsoft Measures Effectiveness of Malware & Phish Catch for Office 365

At Ignite, Microsoft reasserted its focus on cybersecurity across three key themes: security operations for you, enterprise-class technology, and driving partnerships for a heterogeneous world. Office 365 threat protection capabilities are built on these themes: services such as Threat Intelligence support SecOps teams, a threat protection solution secures many of today’s enterprises, and we work with an ecosystem of partners to help ensure the best security possible for our customers.

Since launching Office 365 Exchange Online Protection (EOP) and Advanced Threat Protection (ATP), we have continuously made significant enhancements to anti-phish capabilities, reporting, and, perhaps most importantly, malware and phish catch effectiveness. To this end, we have reported an average malware catch rate above 99.9% and the lowest phish miss rate among security vendors protecting Office 365. Every vendor claims to be the ‘best’ at catching malware and phish. Yet to our knowledge, none explain their measurement process and analysis the way Microsoft does in this blog. One of Microsoft’s guiding principles and pillars of Office 365 is trust (figure 1). We believe it is important to share our effectiveness measurement criteria so customers understand how our catch rates are determined.

The Unique Value of Microsoft

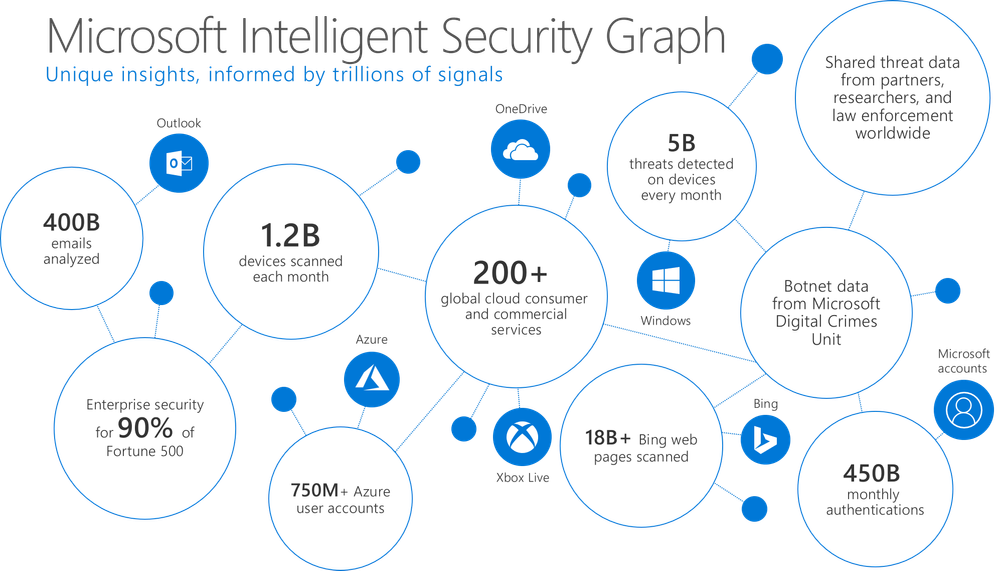

Office 365 EOP/ATP’s rich and growing set of features and capabilities provides the best security for your Office 365 environment, and its strength lies in its connection to the broader Microsoft ecosystem. Through seamless integration with the Microsoft Intelligent Security Graph, Office 365 EOP/ATP draws on 6.5 trillion correlated signals every day to power its filters, ML models, and threat updates (figure 2).

These signals are harnessed from Microsoft’s ecosystem of services and partners, as shown in figure 3. They include threat data from endpoints, identities, infrastructure, user data, and cloud applications. We can see a very large volume of attack campaigns and rapidly update our capabilities to mitigate evolving threats. Together, these help ensure unparalleled effectiveness in both malware and phish catch. Microsoft draws on 3,500 in-house security professionals and an annual $1 billion investment in cybersecurity to rapidly resolve the latest attack campaigns. The Microsoft Intelligent Security Graph also integrates our threat protection services (including Office 365 ATP), providing seamless protection across the entire modern workplace. The integration shares signals between services, so a threat resolution in one service is communicated to the others. This secures threat vectors no matter where an attack originates. Very few security vendors offer the breadth, depth, and intelligence of Microsoft.

Behind the Numbers

Our unique value ultimately shows up in how effectively we mitigate malware and phish, and we’d like to share how we measure the effectiveness of the Office 365 threat protection services. Office 365 EOP and ATP are complementary. Both are integrated into the mail transport pipeline, filtering email in real time as it is delivered to hosted Office 365 mailboxes. The most common deployment for other email security providers is to have customers point their MX record to the competing cloud service, which filters the mail and then sends it on to Office 365. Because we use IP addresses as one attribute when filtering at the network edge, Office 365 can recognize emails that were pre-filtered by a competing cloud service. Any of these emails that we flag as malicious shows us what the competitor missed. We cannot determine what competitors catch, because those emails never reach Office 365. However, we have very accurate visibility into what competitors miss (figure 4). In some scenarios, we can share data that shows customers the malicious emails missed by competitive filters before those emails reached the Office 365 filters. Ask your account rep whether this analysis can be provided for your organization.
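The "visibility into misses" idea can be illustrated with a minimal sketch. It assumes that pre-filtered mail is recognized by its connecting IP address. The `UPSTREAM_NETWORKS` range (a reserved documentation prefix), the `InboundMessage` record, and both functions are hypothetical illustrations, not Office 365 APIs.

```python
import ipaddress
from dataclasses import dataclass

# Hypothetical egress range of a competing filtering service
# (203.0.113.0/24 is a reserved documentation prefix).
UPSTREAM_NETWORKS = [ipaddress.ip_network("203.0.113.0/24")]


@dataclass
class InboundMessage:
    message_id: str
    connecting_ip: str
    flagged_malicious: bool  # downstream (Office 365) verdict for this message


def is_prefiltered(msg: InboundMessage) -> bool:
    """True if the message arrived from the upstream filter's address range."""
    ip = ipaddress.ip_address(msg.connecting_ip)
    return any(ip in net for net in UPSTREAM_NETWORKS)


def observed_upstream_misses(messages: list[InboundMessage]) -> list[str]:
    """IDs of pre-filtered messages that were still flagged as malicious downstream.

    This is only a lower bound on upstream misses. Threats that both filters
    missed are invisible here, and anything the upstream service caught never
    arrives, so its catches cannot be counted.
    """
    return [m.message_id for m in messages
            if is_prefiltered(m) and m.flagged_malicious]
```

The asymmetry in the docstring is the point of the design: the downstream filter observes only what the upstream filter let through. It can count misses, but it cannot count catches.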

Measuring Malware and Phish Catch Effectiveness

Effectiveness means catching malicious content without blocking benign content. Missing malicious content is a false negative (FN); blocking benign content is a false positive (FP). Malware is any file that executes malicious code, and it generally arrives as an email attachment; in fact, ATP’s malware policy setting is named ‘Safe Attachments’. When EOP and ATP filter attachments, Microsoft captures a unique hash for each file and a polymorphic hash of the active element in the file. If a file is determined to be malicious, we search historical mail flow across EOP and ATP for any other instances of the malicious hashes that were initially missed. This process is known as automated label expansion. Once an instance of an email is determined to carry a malicious hash, our ML technology can label as malicious the 0.1% of emails we do miss. Attachments are continually scanned for 7 days after delivery. This helps ensure that new learning is applied to our historic labeling and that any missed malware is immediately flagged and removed. Those familiar with EOP know this feature as zero-hour auto purge (ZAP).

End users and admins can also submit suspicious emails to Microsoft. Users submit through the Report Message add-in, and admins email the suspicious messages directly to Microsoft. All user and admin submissions reviewed by our analysts are also scanned through our entire filtering pipeline, including Safe Attachments. Analysts also review the automated label expansion for correctness and manually update labels when necessary. This provides human-generated verdicts for further machine learning training.

Layered onto these techniques are internal and external intelligence data sources used to identify potential FNs. Advanced hunting telemetry and hunting ML detect suspicious behaviors and delivery patterns to help prioritize our analysts’ FN hunting.
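Hash-based label expansion can be sketched in a few lines. The sketch assumes exact SHA-256 content hashes and assumes that relabeled messages still within 7 days of delivery are also queued for removal. The polymorphic hash of a file's active element is not modeled. `DeliveredMessage`, `file_hash`, and `expand_labels` are hypothetical names, not part of any Office 365 API.

```python
import hashlib
from dataclasses import dataclass, field
from datetime import datetime, timedelta

# Assumption: relabeled messages delivered within this window are also
# queued for removal, mirroring the 7-day post-delivery rescan described above.
RESCAN_WINDOW = timedelta(days=7)


@dataclass
class DeliveredMessage:
    message_id: str
    delivered_at: datetime
    attachment_hashes: set[str] = field(default_factory=set)
    labeled_malicious: bool = False


def file_hash(data: bytes) -> str:
    """Exact-content SHA-256 of an attachment (no polymorphic hashing)."""
    return hashlib.sha256(data).hexdigest()


def expand_labels(history: list[DeliveredMessage], malicious_hash: str,
                  now: datetime) -> tuple[list[str], list[str]]:
    """Relabel past messages that carry a newly confirmed malicious hash.

    Returns (relabeled, to_purge). Every previously missed match is relabeled
    so it counts as a false negative in measurement. Matches still inside the
    rescan window are also returned for removal from mailboxes.
    """
    relabeled, to_purge = [], []
    for msg in history:
        if malicious_hash in msg.attachment_hashes and not msg.labeled_malicious:
            msg.labeled_malicious = True
            relabeled.append(msg.message_id)
            if now - msg.delivered_at <= RESCAN_WINDOW:
                to_purge.append(msg.message_id)
    return relabeled, to_purge


if __name__ == "__main__":
    now = datetime(2018, 10, 1)
    bad = file_hash(b"malicious payload")
    history = [
        DeliveredMessage("m1", now - timedelta(days=2), {bad}),
        DeliveredMessage("m2", now - timedelta(days=10), {bad}),
        DeliveredMessage("m3", now - timedelta(days=1), {file_hash(b"invoice")}),
    ]
    print(expand_labels(history, bad, now))  # (['m1', 'm2'], ['m1'])
```

The sketch keeps measurement and remediation separate. A message delivered 10 days ago still counts as a miss in the statistics, even though it falls outside the assumed removal window.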

Phishing emails are the other major attack method, and they arrive in many forms. Phish catch measurement techniques are similar. Instead of file hashes or polymorphic hashes, however, they rely on header metadata, message fingerprints, and URLs as label expansion keys. The components we analyze to determine our effectiveness are summarized in figure 5.
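Label expansion for phish can be illustrated with a hypothetical key scheme. Each message yields a set of keys, and any historical message that shares a key with a confirmed phish is relabeled. The keys are a header key (sender domain plus normalized subject), a body fingerprint, and one key per URL. The specific normalizations below are illustrative assumptions, and production fingerprints are typically fuzzier than an exact hash.

```python
import hashlib
from urllib.parse import urlsplit


def expansion_keys(sender_domain: str, subject: str, body: str,
                   urls: list[str]) -> set[tuple[str, str]]:
    """Derive illustrative label-expansion keys for one message (hypothetical scheme)."""
    keys = set()
    # Header-metadata key: sender domain plus whitespace-collapsed, case-folded subject.
    norm_subject = " ".join(subject.split()).casefold()
    keys.add(("header", f"{sender_domain.lower()}|{norm_subject}"))
    # Fingerprint: exact SHA-256 of the whitespace-collapsed, case-folded body.
    norm_body = " ".join(body.split()).casefold()
    keys.add(("fingerprint", hashlib.sha256(norm_body.encode()).hexdigest()))
    for url in urls:
        parts = urlsplit(url)
        # Drop scheme, query, and fragment so per-recipient tokens don't break matching.
        keys.add(("url", parts.netloc.lower() + parts.path))
    return keys


def expand_phish_labels(confirmed: set[tuple[str, str]],
                        history: dict[str, set[tuple[str, str]]]) -> list[str]:
    """Return IDs of historical messages sharing any key with a confirmed phish."""
    return sorted(msg_id for msg_id, keys in history.items() if keys & confirmed)


if __name__ == "__main__":
    confirmed = expansion_keys("contoso-pay.example", "Verify your account",
                               "Click here to verify.",
                               ["https://evil.example/login?u=alice"])
    history = {
        # Same landing page, different recipient token.
        "p1": expansion_keys("other.example", "Hello", "Different text",
                             ["http://EVIL.example/login?u=bob"]),
        # Same body, reformatted whitespace and case.
        "p2": expansion_keys("x.example", "Re: stuff", "  click HERE to   verify. ", []),
        "p3": expansion_keys("news.example", "Newsletter", "Weekly update",
                             ["https://news.example/issue"]),
    }
    print(expand_phish_labels(confirmed, history))  # ['p1', 'p2']
```

Matching on any single shared key is deliberately aggressive. A real system would need to weigh keys against false positives, which is one reason analysts review expanded labels.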

The Pitfalls of 3rd Party Testing

Third-party testing by outside vendors often assesses only part of the full end-to-end service. These tests can sometimes show how a particular service performs and how it compares with its peers. However, gaps in the testing can skew the results. The main gaps in third-party testing are:

- How to count a ‘miss’

- Misconfiguring the solution

- Not measuring many aspects of the email security stack

Office 365 EOP/ATP counts emails sent to ‘junk’ as a catch. We have seen numerous reports that rate our service below peer solutions because the testers counted phish emails delivered to ‘junk’ as a miss. Email is private, and we will not delete or remove an email unless an explicit policy requires it. Our default policy is to send phish, spam, and bulk email to ‘junk’. Our service does not count these as a miss, yet many third-party tests penalize us for emails sent to junk.

Another important issue to consider is how the service is configured. Every service is different, and a setting in one service can have a subtly different effect in another. We see this often, because many customers today are migrating from a legacy solution to Office 365 EOP/ATP. These customers often recreate in EOP/ATP the same set of Exchange Transport Rules (ETRs) they had in their legacy solution. However, there is no simple 1:1 mapping between the rules in another service and those in EOP/ATP. We often work closely with customers to help with the initial configuration of EOP/ATP. If you are seeing results that seem to belie the statistics we share publicly, please reach out to support so they can dig deeper into your configuration settings. Often, simple fixes to your settings will eliminate the issues you are experiencing.
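The effect of the ‘junk’ counting rule on a reported catch rate can be shown with simple arithmetic. This minimal sketch assumes that every message in the sample is known to be malicious and that each ends in one of a few illustrative dispositions. The disposition names and `catch_rate` are hypothetical.

```python
from collections import Counter

# Illustrative dispositions that keep a known-malicious message out of the inbox.
CATCH_DISPOSITIONS = {"blocked", "quarantined"}


def catch_rate(dispositions: list[str], junk_counts_as_catch: bool = True) -> float:
    """Fraction of known-malicious messages that were caught.

    With junk_counts_as_catch=True, junk-folder delivery counts as a catch, as
    described above. With False, it counts as a miss, as in many third-party tests.
    """
    counts = Counter(dispositions)
    total = sum(counts.values())
    if total == 0:
        return 0.0
    caught = sum(counts[d] for d in CATCH_DISPOSITIONS)
    if junk_counts_as_catch:
        caught += counts["junk"]
    return caught / total


if __name__ == "__main__":
    sample = ["blocked"] * 90 + ["junk"] * 8 + ["inbox"] * 2
    print(catch_rate(sample))                              # 0.98
    print(catch_rate(sample, junk_counts_as_catch=False))  # 0.9
```

The same 100 messages yield a 98% or a 90% catch rate depending only on how junk-folder delivery is scored. This is why the counting rule should be stated before comparing results.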

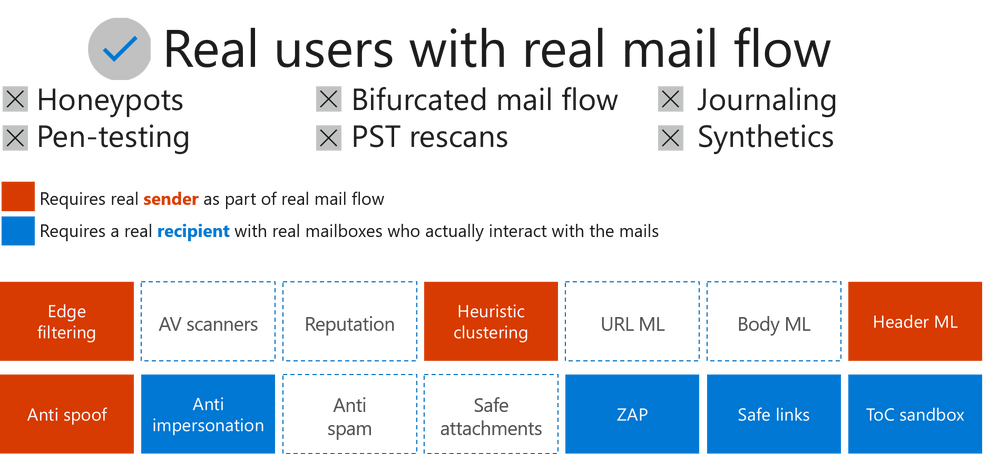

The final gap we see in third-party testing is that it covers only part of a comprehensive email security stack. Most synthetic tests leave the majority of the capabilities in figure 6 untested. Many components of the mail flow, such as ‘Edge Filtering’ and authentication checks, are not included in the testing. Yet Office 365 blocks many malicious emails with exactly these checks. Much of the email protection also happens after the email lands in the inbox. Third-party tests do not account for post-delivery protection capabilities such as ZAP, time-of-click protection with Safe Links, or time-of-click detonation of links. A true test of the quality and effectiveness of a solution must include all of these components to fairly assess and compare solutions. Also, as noted in figure 6, many of these components can only be tested in real mail flow because they require a sender or recipient. If you want to compare Office 365 ATP with a competitive vendor, talk to your rep about methods to bifurcate real mail flow. This lets you see how we compare with other vendors in a real-world test.

Learn More

We hope this article helped explain how we measure effectiveness for Office 365 EOP/ATP. Please be sure to check out the Ignite session, where we go into more detail. Your feedback helps us keep improving and adding features that make Office 365 ATP the premier advanced security service for Office 365. If you have not yet tried Office 365 ATP for your organization, begin a free Office 365 E5 trial today and start securing your organization against the modern threat landscape.
