- Deploying Kubernetes Cluster on Azure VMs using kubeadm, CNI and containerd

Managed Kubernetes clusters are great, production-ready, and secure, but they hide most of the administrative operations. I remember working through the Kubernetes the Hard Way repository to deploy on Azure Virtual Machines and thinking that there should be an easier way to deploy a cluster. One well-known method is kubeadm, which became generally available in 2018. It is part of the Kubernetes project and has its own GitHub page.

When we were studying for the Kubernetes exams with Toros (@torosgo), we wanted to deploy our own Kubernetes cluster to practice on. If you are preparing for the CKA or CKS in particular, you will need your own cluster to work with the control plane nodes and components. Kubeadm is the suggested way to deploy Kubernetes, but we faced some challenges and invested some time in learning the tool.

We split the work, and each of us investigated a different part of kubeadm. To keep each other up to date, we put our findings in a GitHub repo. After we passed the exams, we wanted to tidy up, create some installation scripts, and share them with the community. We updated the GitHub repository and added some how-to documents so that it is ready for deploying a Kubernetes cluster with kubeadm. Here is the GitHub link for the repository.

Let us dive in. The cluster will be deployed in a VNet and subnet using two VMs: one control plane node and one worker node. We will install a container runtime, kubeadm, kubelet, and kubectl on all nodes and add a basic CNI plugin. We prepared all the scripts, so everything is ready to go. To run them, you will need:

- Azure Subscription

- A Linux shell such as bash or zsh (Azure Cloud Shell at https://shell.azure.com/ also works)

- Azure CLI

- An SSH client, which comes with your Linux distro (or Cloud Shell)

Here is what we will do, step by step:

- Define the variables that we will use when we run the scripts

- Create Resource Group

- Create VNet and Subnet and NSG

- Create VMs

- Prepare the Control plane – install kubeadm, kubelet

- Deploy the CNI plugin

- Prepare the Worker Node – install kubeadm and join the control plane.

Steps 1 through 4 are covered by the code snippet in the document, which you can find here. This part is straightforward, easy to follow, and mostly Azure CLI related, so we will not detail it here, but I would like to clarify a few points.

When creating the VMs, we add our local SSH public key to both VMs so that we can access them securely and easily without dealing with passwords. This is the relevant line in the VM creation command:

--ssh-key-value @~/.ssh/id_rsa.pub \

The other point to clarify is SSH access to the VMs. As we mentioned in the doc, there are three options, and you can pick the one that makes the most sense for you: manually adding an NSG rule for your IP address, enabling JIT access, or using the Azure Bastion service.

The next step is preparing the control plane. First, we need the IP of the VM to make an SSH connection. This command gives you the IP address of the control plane:

export Kcontrolplaneip=$(az vm list-ip-addresses -g $Kname -n kube-controlplane --query "[].virtualMachine.network.publicIpAddresses[0].ipAddress" --output tsv)

We run a similar command to retrieve the worker node’s IP:

export Kworkerip=$(az vm list-ip-addresses -g $Kname -n kube-worker --query "[].virtualMachine.network.publicIpAddresses[0].ipAddress" --output tsv)
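If a VM has no public IP, or the resource group name is wrong, these lookups quietly return an empty string. A small guard can catch that before you try to connect. This is only a sketch: the `require_ip` helper is a hypothetical name, not part of the repository's scripts.

```shell
# Fail early when an IP lookup returned nothing.
# $1 is a label for the message, $2 is the value to check.
require_ip() {
  if [ -z "$2" ]; then
    echo "error: no IP address found for $1" >&2
    return 1
  fi
  echo "$1: $2"
}

# Typical use after the two exports above:
#   require_ip "control plane" "$Kcontrolplaneip"
#   require_ip "worker" "$Kworkerip"
```

Once both checks pass, you can connect with `ssh <admin-user>@$Kcontrolplaneip`, where the admin user name is whatever was set when the VM was created.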

Let us prepare the VMs. This part is the same for both VMs, so I will not repeat it. Since we will be using kubeadm version 1.24.1, we set our variables accordingly.

export Kver='1.24.1-00'

export Kvers='1.24.1'
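Because the two values must stay in sync, one option is to derive the second from the first. This is a minimal sketch using shell parameter expansion. It assumes the package version always has the form `<version>-<revision>`, as it does in the value above.

```shell
# Package version as used by apt (value taken from the article).
export Kver='1.24.1-00'

# Strip the trailing "-<revision>" suffix to get the plain
# Kubernetes version that is later passed to kubeadm init.
export Kvers="${Kver%-*}"

echo "apt package version: $Kver"
echo "kubernetes version:  $Kvers"
```

With this approach, moving to another release means changing only `Kver`.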

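The next part prepares the VM itself: we add the node's own address to /etc/hosts and disable swap, both immediately and across reboots, because kubelet expects swap to be off by default.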

sudo -- sh -c "echo $(hostname -i) $(hostname) >> /etc/hosts"

sudo sed -i "/swap/s/^/#/" /etc/fstab

sudo swapoff -a
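The sed expression above comments out every line in /etc/fstab that contains the word "swap". If you would like to see what it does before editing the real file, you can run it against a scratch copy first. The sketch below uses a made-up two-line fstab. The entries are illustrative only.

```shell
# Build a throwaway fstab so nothing on the real system is touched.
# The two entries below are made-up examples.
sample_fstab=$(mktemp)
cat > "$sample_fstab" <<'EOF'
UUID=1111-2222 /        ext4 defaults 0 1
/swapfile      none     swap sw       0 0
EOF

# Same expression as the command above, but without -i, so the
# result is printed to stdout instead of being written to the file.
preview=$(sed "/swap/s/^/#/" "$sample_fstab")
echo "$preview"

rm -f "$sample_fstab"
```

After `sudo swapoff -a` on the node, `swapon --show` should print nothing, which confirms that no swap device is active.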

Next, we load the kernel modules that containerd and Kubernetes networking need, set the sysctl parameters, and apply the new configuration.

cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf

overlay

br_netfilter

EOF

sudo modprobe overlay

sudo modprobe br_netfilter

cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

net.ipv4.ip_forward = 1

EOF

sudo sysctl --system

After running sysctl --system, you will see messages listing the kernel parameters that were applied.

We continue by updating the node and installing the necessary tools along with containerd.
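To confirm that the three parameters really took effect, you can read them back from /proc/sys. The helper below is a sketch with a hypothetical name, `check_k8s_sysctls`. The optional argument exists only so the function can be tried against a test directory instead of the live kernel settings.

```shell
# Print "ok" or "MISSING" for each kernel parameter set above.
# $1 is the sysctl root directory and defaults to /proc/sys.
# The body runs in a subshell, so its variables do not leak out.
check_k8s_sysctls() (
  root=${1:-/proc/sys}
  status=0
  for key in net.bridge.bridge-nf-call-iptables \
             net.bridge.bridge-nf-call-ip6tables \
             net.ipv4.ip_forward; do
    # A sysctl key maps to a path by replacing dots with slashes.
    path="$root/$(echo "$key" | tr . /)"
    if [ -r "$path" ] && [ "$(cat "$path")" = "1" ]; then
      echo "ok      $key"
    else
      echo "MISSING $key"
      status=1
    fi
  done
  exit "$status"
)
```

Running `check_k8s_sysctls` with no argument on a node checks the live values. The two bridge entries only exist once the br_netfilter module is loaded, so a MISSING result there usually points back to the modprobe step.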

sudo apt update

sudo apt upgrade -y

sudo apt -y install ebtables ethtool apt-transport-https ca-certificates curl gnupg containerd

sudo mkdir -p /etc/containerd

sudo containerd config default | sudo tee /etc/containerd/config.toml

sudo systemctl restart containerd

sudo systemctl enable containerd

sudo systemctl status containerd

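Then we install kubeadm, kubelet, and kubectl, again on both VMs. Note that the apt.kubernetes.io repository used here has since been frozen in favor of the community-owned pkgs.k8s.io, so the repository lines may need adjusting on a current system.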

sudo curl -fsSLo /usr/share/keyrings/kubernetes-archive-keyring.gpg https://packages.cloud.google.com/apt/doc/apt-key.gpg

echo "deb [signed-by=/usr/share/keyrings/kubernetes-archive-keyring.gpg] https://apt.kubernetes.io/ kubernetes-xenial main" | sudo tee /etc/apt/sources.list.d/kubernetes.list

sudo apt update

sudo apt -y install kubelet=$Kver kubeadm=$Kver kubectl=$Kver

sudo apt-mark hold kubelet kubeadm kubectl

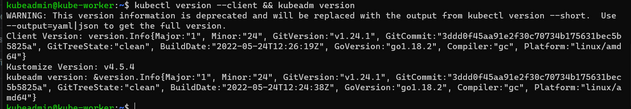

kubectl version --client && kubeadm version

You should be seeing after the install like this result,

As I mentioned above kubeadm configuration needs to be applied to both VMs, the next section is specifically for Control Plane, which initializes kubeadm as a control plane node.

sudo kubeadm init --pod-network-cidr 10.244.0.0/16 --kubernetes-version=v$Kvers

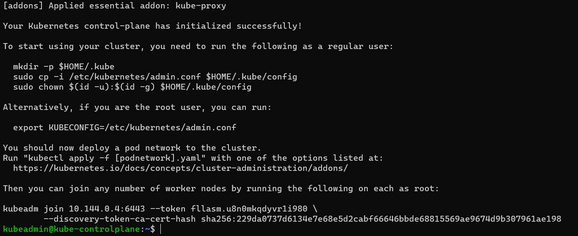

If everything goes well, this command will return some information about how to configure your kubeconfig (which we will do a little later), an example in case you want to add another Control Plane node, and an example of adding worker nodes. We save the returned output to a safe place to use later in our worker node setup.

sudo kubeadm join 10.144.0.4:6443 --token gmmmmm.bpar12kc0uc66666 --discovery-token-ca-cert-hash sha256:5aaaaaa64898565035065f23be4494825df0afd7abcf2faaaaaaaaaaaaaaaaaa

If we lose the output above or forget to save it, we can generate a new token with the command below on the control plane node and use it on the worker node later.

sudo kubeadm token create --print-join-command
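When the join command is copied between terminals, it is easy to drop a character. A quick format check on the token can catch that before you run it on the worker. The sketch below relies on the fact that kubeadm bootstrap tokens have the form of six characters, a dot, and sixteen characters, all lowercase letters or digits. The helper names are hypothetical.

```shell
# Check that a bootstrap token has the shape kubeadm expects:
# six characters, a dot, then sixteen characters, all in [a-z0-9].
valid_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}

# Pull the value that follows --token out of a saved join command.
token_from_join() {
  printf '%s\n' "$1" | sed -n 's/.*--token \([^ ]*\).*/\1/p'
}

# Typical use, with the saved output stored in a variable:
#   token=$(token_from_join "$join_cmd")
#   valid_token "$token" || echo "token looks malformed" >&2
```

This only checks the shape of the token. It cannot tell whether the token has expired, in which case generating a new one with the command above is the way to go.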

Lastly, we create the kubeconfig file so that kubectl works.

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

This set of commands turns the node into a control plane node, running etcd (the cluster database) and the API server (with which the kubectl command-line tool communicates). Lastly, on the control plane, we install the CNI plugin. If you remember, when we initialized the control plane we defined the pod network IP block with this parameter:

--pod-network-cidr 10.244.0.0/16

This parameter is related to the CNI plugin. Every plugin has its own configuration, so if you are planning to use a different CNI, make sure you pass the matching parameters when you build your control plane. We will be using Weaveworks’ plugin:

kubectl apply -f "https://cloud.weave.works/k8s/net?k8s-version=$(kubectl version | base64 | tr -d '\n')"
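Coming back to the `--pod-network-cidr` value: whichever block you choose for pods, it should not overlap the address range of the VNet that the nodes live in. The sketch below checks two IPv4 CIDR blocks for overlap using plain shell arithmetic. The VNet range in the example is an assumption for illustration, so substitute your own.

```shell
# Convert a dotted-quad IPv4 address to a 32-bit integer.
ip_to_int() (
  IFS=.
  set -- $1
  echo $(( ($1 << 24) | ($2 << 16) | ($3 << 8) | $4 ))
)

# Print the first and last address of a CIDR block as integers.
cidr_bounds() (
  ip=${1%/*}
  prefix=${1#*/}
  all=4294967295   # 32 one-bits
  mask=$(( (all << (32 - prefix)) & all ))
  start=$(( $(ip_to_int "$ip") & mask ))
  echo "$start $(( start | (all ^ mask) ))"
)

# Succeed when the two CIDR blocks share at least one address.
cidrs_overlap() (
  set -- $(cidr_bounds "$1") $(cidr_bounds "$2")
  # $1/$2 are the bounds of the first block, $3/$4 of the second.
  [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
)

pod_cidr="10.244.0.0/16"    # from the kubeadm init command
vnet_cidr="10.144.0.0/16"   # hypothetical VNet range

if cidrs_overlap "$pod_cidr" "$vnet_cidr"; then
  echo "overlap: choose a different pod CIDR"
else
  echo "no overlap between $pod_cidr and $vnet_cidr"
fi
```

The functions run in subshells, so changing IFS or the positional parameters inside them does not affect the calling shell.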

We are done with the control plane node, so now we get the worker node ready. After repeating the common preparation steps on the worker, all we have to do is execute the kubeadm join command with the correct parameters. As mentioned earlier, if you have lost that command, you can easily get it again by running this on the control plane node:

sudo kubeadm token create --print-join-command

If everything goes well, you should be able to run the kubectl commands below on the control plane node:

kubectl get nodes

kubectl get pods

kubectl get pods -w
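Nodes typically show NotReady for a short while until the CNI pods are running. If you want a scriptable check rather than watching the output, the hypothetical helper below counts the nodes whose STATUS column is anything other than Ready.

```shell
# Count nodes whose STATUS column is anything other than "Ready".
# Reads the output of `kubectl get nodes --no-headers` on stdin.
count_not_ready() {
  awk '$2 != "Ready" { n++ } END { print n + 0 }'
}

# Typical use on the control plane node:
#   kubectl get nodes --no-headers | count_not_ready
```

A result of 0 means every node reports Ready. As an alternative, `kubectl wait --for=condition=Ready nodes --all --timeout=300s` blocks until all nodes are ready or the timeout expires.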

Please let us know if you have any comments or questions.