Introduction

In Exchange support we see a wide range of support issues. Few of them are more difficult to troubleshoot than performance issues. Part of the reason for that is the ambiguity of the term "Performance Issue". It can manifest as anything from random client disconnects to database failovers or slow mobile device syncing.

One of the most common performance issues we see is the CPU running higher than expected. "High CPU" can be a bit of an ambiguous term as well. What exactly is high? How long does it occur? When does it occur? All of these are questions that have to be answered before you can really start getting to the cause of the issue. For example, say you consider ‘high’ to be 75% CPU utilization during the day. Are you experiencing a problem, are databases inadequately balanced, or is the server just undersized? What about a 100% CPU condition? Does it happen for 10 seconds at a time or 10 minutes at a time? Does it only happen when clients first log on in the morning or after a failover?

In this article I'll go into some common causes of high CPU utilization issues in Exchange 2013 and how to troubleshoot them. At this point I should note that this article is about Exchange 2013 specifically, not earlier versions. High CPU issues across versions do have some things in common; however, much of the data in this article is specific to Exchange 2013. There are some fairly significant differences between Exchange 2010 and Exchange 2013 that change the best practices and troubleshooting methodology. Some of these include completely different megacycle requirements, different versions of the .NET Framework, and a different implementation of .NET Garbage Collection. Therefore, I will not be covering Exchange 2010 in this post.

Common Configuration Issues

Those of us who have worked enough performance issues start by following a list of things to check first. This was actually the main motivation for a TechNet article we recently published called Exchange Server 2013 Sizing and Configuration Recommendations. I'm not going to duplicate everything in the article here; I suggest that you read it if you are interested in this topic. I will, however, touch on a few of the high points.

.NET Framework version

Exchange 2013 runs on version 4.5 of the .NET Framework. The .NET team has published updates to .NET 4.5, released as versions 4.5.1 and 4.5.2. All of these versions are supported on Exchange 2013. However, I would strongly recommend that 4.5.2 be the default choice for any Exchange 2013 installation unless you have very specific reasons not to use it. There have been multiple performance-related fixes from version to version, some of which impact Exchange 2013 fairly heavily. We've seen more than a few of these in support. You can save yourself a lot of trouble by upgrading to 4.5.2 as soon as possible, if you are not already there. It should also be noted that 4.5.2 is the latest version as of the publishing of this blog post. Future releases will contain even more improvements, so be sure to always check for the latest available version. You can read more about the different versions of the .NET Framework here.

Power Management

I started losing count a while back of the number of high CPU cases I encountered that were caused by misconfigured power management. Power management sounds like a good thing, right? In many cases it is. Power management allows the hardware or the OS to, among other things, throttle power to the CPU and turn off a network card when it is idle. On workstations and perhaps on certain servers this can be a good thing. It saves power, lowers the electric bill, gives you a nice low carbon footprint, and makes vegetables taste good. So why is this a bad thing? Consider this. You have a server running at about 80% CPU consistently throughout the work day. You've run the sizing numbers over and over and you should be closer to 55%. You don't see any unusual client activity. Everything looks great except the CPU utilization. Now what if you were to find out that your 2.4GHz cores are only operating at 1.2GHz most of the time? That might make a difference in your reported CPU utilization.

For Exchange the guidance is straightforward. If hardware power management is an option, don't use it. You should allow the operating system to manage power and you should always use the "High performance" power plan in Windows. Even if you aren't using hardware-based power management, just having the power plan set to the default "Balanced" can be enough to throttle the CPU power.

How do you know if this is happening? On a physical server the answer is easy. There is a counter in performance monitor called "Processor Information(_Total)\% of Maximum Frequency". This should always be at 100. Anything lower indicates that the CPU is being throttled, which is usually a result of some kind of power management, either at the hardware or OS level.

On a virtual server things get a bit more complicated. From inside the Exchange server, which is a VM guest, it is difficult to completely trust the CPU performance numbers. If power is being throttled at the VM Host layer, it will not be overly apparent to the Guest OS. You need to use the performance monitoring tools of the VM Host to check for processor power throttling.

Screenshot of CPU throttling in Perfmon:

Health Checker

We've recently published a PowerShell script here that makes checking for common configuration issues easy. The script reports Hardware/Processor information, NIC settings, Power plan, Pagefile settings, .NET Framework version, and some other items. It also has a Client Access load balancing check (current connections per server) and a Mailbox Report (active/passive database and mailbox total per server). It can be executed remotely and can run against all servers in the Organization at once, to save the trouble of having to check all of these settings individually on each server.
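
For a single capture, the "% of Maximum Frequency" check described under Power Management can also be done offline. The following is a minimal sketch, assuming the perfmon log has already been converted to CSV (for example with relog) and that the column header ends with the counter path. The server name EXCH01, the timestamps, and the values are made-up sample data.

```python
import csv
import io

# Counter named in the Power Management section. Perfmon CSV headers prefix it
# with the server name, so the column is matched on this suffix.
COUNTER_SUFFIX = r"Processor Information(_Total)\% of Maximum Frequency"


def throttled_samples(csv_text, counter_suffix=COUNTER_SUFFIX, full_speed=100.0):
    """Return (timestamp, value) pairs where the counter is below full_speed."""
    rows = csv.reader(io.StringIO(csv_text))
    header = next(rows)
    column = next(
        (i for i, name in enumerate(header) if name.endswith(counter_suffix)), None
    )
    if column is None:
        raise ValueError("counter not found in CSV header")
    hits = []
    for row in rows:
        try:
            value = float(row[column])
        except (ValueError, IndexError):
            continue  # blank or missing sample in the export
        if value < full_speed:
            hits.append((row[0], value))
    return hits


# Hypothetical export: EXCH01 and all samples below are invented for illustration.
SAMPLE = r'''"(PDH-CSV 4.0) (UTC)(0)","\\EXCH01\Processor Information(_Total)\% of Maximum Frequency"
"01/15/2015 09:00:00.000","100"
"01/15/2015 09:01:00.000","50"
"01/15/2015 09:02:00.000"," "
"01/15/2015 09:03:00.000","100"
'''

for stamp, value in throttled_samples(SAMPLE):
    print(f"{stamp}: CPU at {value:.0f}% of maximum frequency")
```

Any sample this returns on a physical server is worth a look at the power plan and the hardware power settings. On a VM guest the same caveat applies as above: the numbers seen inside the guest may not reflect throttling at the host.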

Sizing

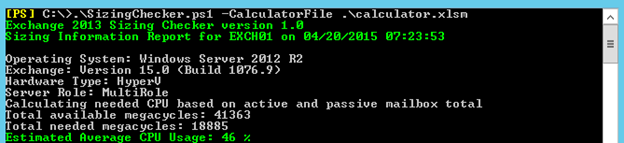

After we've ruled out the common causes from the previous section, we now have to move on to sizing. Perhaps the CPU is running high because the server doesn't have enough megacycles to keep up with the load being placed on it. Sizing Exchange 2013 is covered in multiple blog posts. If you want a good understanding of sizing, I suggest reading Jeff Mealiffe’s post Ask the Perf Guy: Sizing Exchange 2013 Deployments. If you haven't done it already, you should also run through Ross Smith IV's sizing calculator. Most deployments have utilized the calculator for planning and sizing.

I'm a support guy, so I'm approaching this topic from the angle of troubleshooting an existing environment. In the world of troubleshooting we don’t need to size and plan a deployment, but we do need to know enough about it to know if a performance problem is simply an issue of being undersized. Troubleshooting a high CPU issue with no knowledge of sizing is difficult at best and many times just not possible.

CPU sizing comes down to this question: do I have enough available megacycles to handle the load? Easy enough, right? Not quite. How many available megacycles you have is fairly straightforward, although it does require a bit of math. The basic formula (taken directly from Jeff's sizing blog) is as follows:

Version 7.2 of the Sizing Calculator also allows us to get an idea of the expected CPU utilization. The difference is that it will calculate expected CPU utilization based on the number of active and passive mailboxes planned by taking the values from the Input page of the spreadsheet (as opposed to querying the mailbox server for a current total). The new features in version 7.2 provide insight into what to expect from a CPU utilization standpoint in multiple scenarios: Normal Runtime (no failures, evenly distributed databases), Single Failure (a single server in the datacenter has failed, resulting in database copy activation), Double Failure (two servers in the datacenter have failed, resulting in database copy activation), Site Failure (a datacenter has failed, requiring failover to another datacenter), and Worst Failure (worst possible failure based on design requirements for the environment).
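
The available-megacycles math mentioned above can be sketched in a few lines. The formula itself is not reproduced in the text here, so this sketch assumes the form commonly quoted from the Exchange 2013 sizing guidance: scale the server's per-core SPECint2006 rate value against a baseline platform. The baseline figures used as defaults (33.75 per core at 2000 MHz) and the example server (a rate value of 351 across 12 cores) are assumptions for illustration and should be checked against Jeff's post before being relied on.

```python
def adjusted_megacycles_per_core(spec_rate, cores,
                                 baseline_per_core=33.75, baseline_mhz=2000.0):
    """Scale a server's per-core SPECint2006 rate value to baseline megacycles.

    baseline_per_core and baseline_mhz are assumed baseline-platform values;
    verify them against the sizing guidance.
    """
    if cores <= 0:
        raise ValueError("cores must be positive")
    per_core_value = spec_rate / cores
    return per_core_value * baseline_mhz / baseline_per_core


def available_megacycles(spec_rate, cores, **baseline):
    """Total adjusted megacycles for the whole server."""
    return adjusted_megacycles_per_core(spec_rate, cores, **baseline) * cores


# Hypothetical server: SPECint2006 rate value of 351 across 12 cores.
per_core = adjusted_megacycles_per_core(351, 12)
total = available_megacycles(351, 12)
print(f"{per_core:.0f} megacycles per core, {total:.0f} per server")
```

Comparing the total against the megacycles your mailbox load requires gives a rough sense of whether the server is simply undersized.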

Message Profile and Multiplier

By now you're probably saying "this is nice, but how do I know my message profile and multiplier numbers?" Great question. The message profile numbers on a live production deployment can actually be determined by yet another great script from Dan Sheehan called Generate-MessageProfiles.ps1, available on TechNet Gallery. This script will parse your transport logs and give you an actual number of messages sent/received per day. In addition to publishing the script, Dan has written a blog post that explains the script and its usage in detail.

That works for message profiles. What about the multiplier? This is the tough one. Some 3rd party vendors will actually give you a suggested multiplier for their software. Sometimes this information is not available. In this case you can use the previously referenced Exchange 2013 CPU Sizing Checker script to reverse engineer the multiplier. Let's say you run the script with a multiplier of 1.0. It gives you a CPU number of 50% which is the average CPU usage you can expect from the Exchange specific processes during the busiest hours of the day. You, however, are seeing a value closer to 65%. You can run the script again, modifying the multiplier, until you get a result close to 65%. Once you do, that can give you an idea of what multiplier number you should be using in your sizing plans.
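
The trial-and-error loop described above can be given a better starting point. This is a minimal sketch, assuming the predicted CPU percentage scales roughly linearly with the multiplier. That is a simplifying assumption, not something the script or the calculator guarantees, so treat the result as a first guess to feed back into the script.

```python
def estimate_multiplier(predicted_pct, observed_pct, current_multiplier=1.0):
    """First-guess multiplier, assuming CPU % scales linearly with the multiplier."""
    if predicted_pct <= 0:
        raise ValueError("predicted_pct must be positive")
    return current_multiplier * observed_pct / predicted_pct


# Numbers from the example in the text: the script predicts 50% at a
# multiplier of 1.0, while the server is actually running closer to 65%.
guess = estimate_multiplier(predicted_pct=50.0, observed_pct=65.0)
print(f"Try a multiplier of about {guess:.2f}")
```

Rerunning the script with the suggested value and comparing the new prediction against the observed 65% shows whether another adjustment is needed.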

As previously mentioned, version 7.2 of the sizing calculator has the ability to predict CPU values based on your planned deployment numbers. This means that you can modify the “Megacycles Multiplication Factor” in the profile settings on the calculator’s Input tab and view the results in the “CPU Utilization/Dag” section on the Role Requirements tab to get an idea of which multiplier value suits your deployment best. In most cases this is preferable to using the script as the calculator is faster and designed around helping you plan your deployment (as opposed to the script which is more for troubleshooting).

Oversizing

Contrary to what you may think, it is possible to oversize your servers from a CPU standpoint. This doesn't come down to raw processing power. It might be an inefficient use of hardware in some cases to deploy on servers with high core counts, but too much processing power isn't the problem. When I talk about oversizing, I'm talking less about the available megacycles than about the number of cores.

Exchange 2013 was developed to run on commodity-type servers. Testing is generally done on servers with processor specifications of 2 sockets and about 16-20 cores. This means that if you deploy on servers with a much larger core count you may run into scalability issues. Core count is used to determine settings at the application level that can make a difference in performance. For example, in processes that use Server mode Garbage Collection we will create one managed heap per core (you can read in detail about Garbage Collection in .NET 4.5 here). This can significantly increase the memory footprint of the process, and it goes up the more cores you have.

We also use core count to determine the minimum number of threads in the threadpool of many of our processes. The default is 9 per core. If you have a 32 core server, that's 288 threads. If, for example, there is a sudden burst of activity, you could have a lot of threads trying to do work concurrently. Some of the locking mechanisms for thread safety in Exchange 2013 were not designed to work as efficiently in high core count scenarios as they do in the recommended core count range. This means that under certain conditions, having too many cores can actually lead to a high CPU condition. Hyper-Threading can also have an effect here, since a 16 core Hyper-Threaded server will appear to Exchange as having 32 cores. This is one of the multiple reasons why we recommend leaving Hyper-Threading disabled.

These are just a few examples, but they show that staying within the recommendations made by the product group when it comes to server sizing is extremely important. Scaling out rather than up is better from a cost standpoint, a high availability standpoint, and a product design standpoint.
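
The thread arithmetic above is easy to reproduce for your own hardware. A minimal sketch, assuming Hyper-Threading presents two logical cores per physical core and using the default of 9 threads per core quoted above; the function name is made up for illustration.

```python
def min_threadpool_threads(physical_cores, hyper_threading=False, threads_per_core=9):
    """Minimum threadpool threads for a process that scales them by visible cores."""
    # Assumption: Hyper-Threading doubles the core count the OS reports.
    visible_cores = physical_cores * 2 if hyper_threading else physical_cores
    return visible_cores * threads_per_core


print(min_threadpool_threads(32))                        # the 32 core example
print(min_threadpool_threads(16, hyper_threading=True))  # 16 cores seen as 32
print(min_threadpool_threads(16))                        # 16 cores, HT disabled
```

The first two calls give the same result, which is the point of the Hyper-Threading warning: the process sizes itself for cores that are not really there.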

Single Process Causing High CPU

Generally, if you have a CPU throttling issue or are undersized, you will see high CPU that does not seem to be caused by a single process. Rather, the server just looks "busy". The CPU utilization is high, but no single process appears to be the cause. There are times, though, when a single process can be causing the CPU to go high. In this section we will go over some tricks with performance monitor to narrow down the offending process and dig a bit into why it may be happening.

Perfmon Logs

Perfmon is great, but what if you were not capturing perfmon data when the problem happened? Luckily, Exchange 2013 includes the ability to capture daily performance data, and this feature is turned on by default. The logs are usually located in the Exchange Server installation folder under “V15\Logging\Diagnostics\DailyPerformanceLogs”. These are binary log (*.blg) files that are readable by perfmon.exe. To review one, launch perfmon, go to Monitoring Tools\Performance Monitor, click the “View Log Data” button, select “Log Files” under Data Source, click Add, and browse to the file you wish to view.

The built-in log capturing feature has to balance between gathering useful data and not taking up too much disk space, so it does not capture every single counter and it only captures on a one minute interval. In most cases this is enough to get started. If you find you need a more robust counter set or a shorter sample interval, you can use ExPerfWiz to set up a more custom capture. A tip here: if you want to collect this information regularly and from multiple servers, check out this blog post.

Perfmon Analysis

The very first counter I load when analyzing a perfmon log for a high CPU issue is "Processor(_Total)\% Processor Time". It gives you an idea of the total CPU utilization for the server. This is important because, first and foremost, you need to make sure the capture contains the high CPU condition. With this counter a CPU utilization increase should be easy to spot.
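
Confirming that a capture contains the high CPU condition can also be done outside the perfmon UI. A minimal sketch, assuming the "Processor(_Total)\% Processor Time" samples have already been loaded as (timestamp, value) pairs. The 75% threshold and the three-sample minimum are hypothetical choices, not recommendations, and the sample data is made up.

```python
def high_cpu_windows(samples, threshold=75.0, min_samples=3):
    """Return (start, end, count) for runs of consecutive samples at or above threshold."""
    windows = []
    run = []
    for stamp, value in samples:
        if value >= threshold:
            run.append(stamp)
            continue
        if len(run) >= min_samples:
            windows.append((run[0], run[-1], len(run)))
        run = []
    if len(run) >= min_samples:  # a run that lasts until the end of the capture
        windows.append((run[0], run[-1], len(run)))
    return windows


# Made-up one minute samples, matching the interval of the daily performance logs.
SAMPLES = [
    ("09:00", 40.0), ("09:01", 82.0), ("09:02", 91.0), ("09:03", 88.0),
    ("09:04", 45.0), ("09:05", 99.0), ("09:06", 30.0),
]

for start, end, count in high_cpu_windows(SAMPLES):
    print(f"High CPU from {start} to {end} ({count} samples)")
```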

If it was a brief burst, you can then zoom into the time that it happened to get a closer look at what else was going on at the time.

I'll note the difference between Process and Processor. Processor is based on a scale of 0-100 (CPU usage in overall percentage) and can break down values by individual core. Process uses a scale based on the core count of the server and can break down values by individual process. If you have a 16 core server, a 100% CPU spike would have a value of 1600 when using the Process counter. Process(_Total) can be misleading as it adds up the processor time of all processes including the Idle process, which means it will almost always be near the maximum value. If you are looking at a perfmon capture and don't know the total number of cores, just look at the highest number in the instances window under the Processor counter. It is a zero-based collection, each number representing a core. If 23 is the highest number, you have 24 cores.

Now that you know that there was a high CPU condition and when it occurred, we can start narrowing down what caused it. The next thing to do is load all instances under "Process\% Processor Time". You can ignore "_Total" and "Idle". During this phase of troubleshooting it may be best to change the vertical scale of the perfmon window. To do this, right-click in the window, choose Properties, go to the Graph tab, and change the maximum to core count x 100. In our 16 core example you would change it to 1600. Look for any specific process that takes up more CPU than the others and goes up in tandem with the overall CPU utilization. If there isn't one in particular, you don't have a single process causing the issue. This tends to point to some of the topics covered in the previous sections, such as sizing, load, and CPU throttling.

Mapping w3wp instances to application pools

Let's say you do find one particular process that is causing the high CPU condition. Suppose that the process has the name "w3wp#1".
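
Singling out such a process can be sketched in code as well. This is a minimal sketch, assuming the "Process\% Processor Time" samples are already in memory per process and aligned with the "Processor(_Total)" samples. It divides by the core count to bring Process values back to a 0-100 scale and averages only the samples where overall CPU was high. The 75% threshold and all sample values are made up.

```python
def core_count_from_instances(instance_names):
    """Processor instances are zero-based, so the highest index plus one is the core count."""
    indexes = [int(name) for name in instance_names if name.isdigit()]
    return max(indexes) + 1


def busiest_processes(process_samples, total_cpu, core_count, threshold=75.0):
    """Rank processes by average CPU (0-100 scale) while overall CPU was high."""
    busy = [i for i, value in enumerate(total_cpu) if value >= threshold]
    if not busy:
        return []
    ranked = []
    for name, values in process_samples.items():
        if name in ("_Total", "Idle"):
            continue  # these two are ignored, as described in the text
        average = sum(values[i] for i in busy) / len(busy)
        ranked.append((name, average / core_count))
    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked


# Made-up capture from a 16 core server: Process values range from 0 to 1600.
TOTAL_CPU = [30.0, 90.0, 95.0, 35.0]
PROCESS_SAMPLES = {
    "Idle": [1120.0, 160.0, 80.0, 1040.0],
    "w3wp#1": [160.0, 1120.0, 1200.0, 176.0],
    "Microsoft.Exchange.Store.Worker": [100.0, 120.0, 128.0, 96.0],
}

for name, pct in busiest_processes(PROCESS_SAMPLES, TOTAL_CPU, core_count=16):
    print(f"{name}: {pct:.1f}% of total CPU during the high CPU window")
```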

What exactly are you supposed to do with a process name like "w3wp#1"? Exchange runs multiple application pools in IIS for the various protocols it supports. We need to find out which application pool "w3wp#1" maps to. Luckily, perfmon has the information we need; you just need to know how to find it. The first thing you want to do is load the counter "Process(w3wp#1)\ID Process". This will give you the process ID (PID) of that w3wp instance. Let's say it's 22480. With that information we go back to the counter load screen and look under "W3SVC_W3WP". Click on any of the counters. Below you will see a window that contains entries with the format PID_AppPool. In our example it says 22480_MSExchangeSyncAppPool. That tells us that w3wp#1 belongs to the Exchange ActiveSync application pool. Now we know that ActiveSync is the cause of our high CPU.

At this point you can remove all of the counters from your view except for "Process(w3wp#1)\% Processor Time", as the extra clutter is no longer needed. You may also want to set the vertical scale back to 100, right-click on the counter, and choose "Scale Selected Counters".

I should also note here that due to managed availability health checks, sometimes an application pool is restarted. When this happens the PID and the w3wp instance may change. Pay attention to the “Process(w3wp*)\ID Process” counter for the worker process you are interested in. If this value changes, that means the process was recycled, the PID changed, and perhaps the w3wp instance as well. You will need to verify whether the instance changed after the process recycled to make sure you are still looking at the right information.

What is the process doing?

Now that we've narrowed it down to w3wp#1 and know that ActiveSync is the cause of our issue, we can start to dig into troubleshooting it specifically. These methods can be used on multiple other application pools, but this example will be specific to ActiveSync. The most common thing to look for is a burst in activity.
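
The PID lookup and the recycle check from the mapping section above can also be scripted. A minimal sketch, assuming the "W3SVC_W3WP" instance names and the "ID Process" samples have already been read from the capture. Apart from 22480_MSExchangeSyncAppPool, which comes from the example in the text, the instance names and PIDs below are made up.

```python
def app_pool_for_pid(pid, w3svc_instances):
    """Find the application pool for a PID from instance names of the form PID_AppPool."""
    for instance in w3svc_instances:
        pid_text, separator, pool = instance.partition("_")
        if separator and pid_text.isdigit() and int(pid_text) == pid:
            return pool
    return None


def pid_change_points(id_process_samples):
    """Return sample indexes where the PID differs from the previous sample (a recycle)."""
    return [
        i for i in range(1, len(id_process_samples))
        if id_process_samples[i] != id_process_samples[i - 1]
    ]


# Hypothetical instance list; only the first entry appears in the text.
INSTANCES = ["22480_MSExchangeSyncAppPool", "9144_MSExchangeOWAAppPool", "_Total"]
print(app_pool_for_pid(22480, INSTANCES))

# Hypothetical "Process(w3wp#1)\ID Process" samples with one recycle.
PID_SAMPLES = [22480, 22480, 31012, 31012]
print(pid_change_points(PID_SAMPLES))
```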

Load the counter "MSExchangeActiveSync\Requests /sec" to see if there was an increase in requests around the time of the problem. Either way, we now know whether increased request traffic led to the CPU increase. If it did, we need to find the cause of the traffic. It's a good idea to check the counter "MSExchange IS Mailbox(_Total)\Messages Delivered /sec". If this ticks up right before the CPU increase, it tells you that there was a burst of incoming messages that likely triggered it. You can then review the transport logs for clues. If it wasn't message delivery, it may have been some mobile device activity that caused it. In this case you can use Log Parser Studio to analyze the IIS logs for trends in ActiveSync traffic.

Garbage Collection (GC)

If there was no noticeable increase in request traffic or message delivery before the increase, there may be something inside the process causing it. Garbage collection is a common trigger. You can look at ".NET CLR Memory(w3wp#1)\% Time in Garbage Collection". If it stays above 10% during the issue, it could trigger high CPU. If this is the case, also look at ".NET CLR Memory(w3wp#1)\Allocated Bytes /sec". If this counter sustains about 50,000,000 during the high CPU condition and is coupled with an increase in "% Time in Garbage Collection", it means the Garbage Collector may not be able to keep up with the load being placed on it.

I want to note very clearly here that if you encounter this, Garbage Collection throughput usually isn't the root of the problem. It is another symptom. Increases of this type usually indicate abnormal load is being placed on the system. It is much better to find the root cause of this and eliminate it rather than to start changing the garbage collector settings to compensate.

RPC Operations/sec

This is perhaps the best counter we have in mapping client activity to high CPU.
You can load up "MSExchangeIS Client Type(*)\RPC Operations /sec" to get an idea of how many RPC requests are being issued against the Information Store by client type. Usually the highest offenders will be momt (requests from the RPC Client Access Service, usually Outlook MAPI clients), contentindexing, webservices (EWS), and transport (mail delivery). You really need to have a baseline of your environment to know what "normal" is, but you can definitely compare this counter to the overall CPU utilization to see if client requests are causing a CPU utilization increase.

Log Parser Studio (LPS)

If I were stuck on a desert island and had to troubleshoot Exchange performance issues for food, and could only bring two tools, they would be perfmon and Log Parser Studio. LPS contains several built-in queries to help you easily analyze traffic for the various protocols used by Exchange. You can use it to get a view of the most ActiveSync hits per day by device, EWS requests by client type, RPC Client Access MAPI client version by percentage, and many others. The built-in queries are great for just about anything you'd need to find out. If you need more and know a bit of T-SQL, you can even write your own. LPS is covered in depth in Kary Wall's blog post. If you get to the point where you have the client type causing your issue narrowed down, LPS is usually the next step.

Conclusion

Performance is a vast topic and I don't expect this blog post will make you an expert immediately, but hopefully it has given you enough tips and tricks to start tracking down Exchange 2013 high CPU issues on your own. If there are other topics you would like to see us blog about in the realm of Exchange performance, please leave feedback below. Happy troubleshooting!

Marc Nivens

Updated Jul 01, 2019

Exchange Team Blog

You Had Me at EHLO.