Optimizing Vector Similarity Search on Azure Data Explorer – Performance Update

This post is co-authored by @Anshul_Sharma (Senior Program Manager, Microsoft).

This post is an update to Optimizing Vector Similarity Searches at Scale. We continue to improve the performance of vector similarity search in Azure Data Explorer (Kusto). Here we present the new functions and policies that maximize performance, along with the resulting search times.

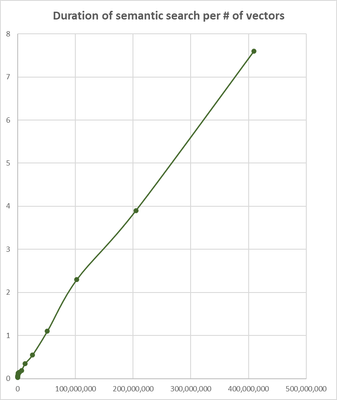

The following table and chart present the search time for the top 3 most similar vectors to a supplied vector:

| # of vectors | Total time (sec.) |
|---|---|
| 25,000 | 0.03 |
| 50,000 | 0.035 |
| 100,000 | 0.047 |
| 200,000 | 0.062 |
| 400,000 | 0.094 |
| 800,000 | 0.125 |
| 1,600,000 | 0.14 |
| 3,200,000 | 0.15 |
| 6,400,000 | 0.19 |
| 12,800,000 | 0.35 |
| 25,600,000 | 0.55 |
| 51,200,000 | 1.1 |
| 102,400,000 | 2.3 |
| 204,800,000 | 3.9 |
| 409,600,000 | 7.6 |

This benchmark was run on a medium-sized Kusto cluster (29 nodes), searching for the most similar vectors in a table of Azure OpenAI embedding vectors. Each vector was generated using the ‘text-embedding-ada-002’ embedding model and contains 1536 coefficients.

These are the steps to achieve the best performance of similarity search:

- Use series_cosine_similarity(), the new optimized native function to calculate cosine similarity

- Set the encoding of the embeddings column to Vector16, the new 16-bit encoding of the vector coefficients (instead of the default 64-bit)

- Store the embedding vectors table on all nodes with at least one shard per processor. This can be achieved by limiting the number of embedding vectors per shard by altering ShardEngineMaxRowCount of the sharding policy and RowCountUpperBoundForMerge of the merging policy.
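To get a feel for what the Vector16 step saves, here is a rough back-of-the-envelope sketch in Python. It compares only the raw coefficient sizes (64 bits versus 16 bits per coefficient, as stated above) and ignores compression, indexes, and any other storage overhead in Kusto, so treat the result as an estimate rather than a measured extent size. The function name `raw_vector_bytes` is made up for this illustration.

```python
def raw_vector_bytes(num_vectors: int, dims: int, bits_per_coeff: int) -> int:
    """Raw (uncompressed) size in bytes of num_vectors vectors with dims coefficients each."""
    return num_vectors * dims * bits_per_coeff // 8


DIMS = 1536              # coefficients per text-embedding-ada-002 vector
NUM_VECTORS = 1_000_000  # illustrative table size

default_bytes = raw_vector_bytes(NUM_VECTORS, DIMS, 64)   # default 64-bit coefficients
vector16_bytes = raw_vector_bytes(NUM_VECTORS, DIMS, 16)  # Vector16: 16-bit coefficients

print(f"64-bit:   {default_bytes / 1e9:.2f} GB raw")
print(f"Vector16: {vector16_bytes / 1e9:.2f} GB raw")
print(f"ratio:    {default_bytes // vector16_bytes}x")
```

Per vector this is 12,288 bytes at 64 bits versus 3,072 bytes at 16 bits, so each query has a quarter as much raw coefficient data to scan.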

Suppose our table contains 1M vectors and our Kusto cluster has 20 nodes, each with 16 processors. To get at least one shard per processor, each of the table’s shards should contain at most 1000000/(20*16)=3125 rows. These are the KQL commands to create the empty table and set the required policies and encoding:

.create table embedding_vectors(vector_id:long, vector:dynamic) // more columns can be added

.alter-merge table embedding_vectors policy sharding '{ "ShardEngineMaxRowCount" : 3125 }'

.alter-merge table embedding_vectors policy merge '{ "RowCountUpperBoundForMerge" : 3125 }'

.alter column embedding_vectors.vector policy encoding type = 'Vector16'
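The shard-size arithmetic above generalizes to other table and cluster sizes. The following Python sketch recomputes the row limit and builds the same three policy and encoding commands as text. The helper names `max_rows_per_shard` and `policy_commands` are hypothetical and not part of any Kusto SDK. Rounding down when the division is not exact is an assumption made here so that the number of shards never drops below the number of processors.

```python
import json


def max_rows_per_shard(total_vectors: int, nodes: int, processors_per_node: int) -> int:
    """Largest per-shard row count that still gives at least one shard per processor."""
    processors = nodes * processors_per_node
    # Floor division keeps shards small enough; never go below 1 row per shard.
    return max(1, total_vectors // processors)


def policy_commands(table: str, column: str, rows: int) -> list[str]:
    """Build the sharding, merge, and encoding commands for a given row limit."""
    sharding = json.dumps({"ShardEngineMaxRowCount": rows})
    merge = json.dumps({"RowCountUpperBoundForMerge": rows})
    return [
        f".alter-merge table {table} policy sharding '{sharding}'",
        f".alter-merge table {table} policy merge '{merge}'",
        f".alter column {table}.{column} policy encoding type = 'Vector16'",
    ]


rows = max_rows_per_shard(1_000_000, nodes=20, processors_per_node=16)
for command in policy_commands("embedding_vectors", "vector", rows):
    print(command)
```

With the numbers from the example (1M vectors, 20 nodes, 16 processors each) `rows` is 3125, which matches the value used in the commands above.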

Now we can ingest the vectors into the table.

And here is a typical search query:

let searched_vector = repeat(0, 1536); // placeholder of 1536 zeros, to be replaced with a real embedding vector.

embedding_vectors

| extend similarity = series_cosine_similarity(vector, searched_vector, 1, 1)

| top 10 by similarity desc
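For readers who want to sanity-check results outside the cluster, here is a small Python reference of what the query computes: the cosine similarity between the searched vector and every stored vector, followed by a top-k selection. It mirrors the query's semantics only, not how Kusto executes it. The function names, the `(vector_id, vector)` row layout, and the choice to return 0.0 for a zero-magnitude vector are assumptions of this sketch.

```python
import heapq
import math


def cosine_similarity(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either has zero magnitude)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # sketch-specific choice; Kusto may behave differently here
    return dot / (norm_a * norm_b)


def top_k(rows, searched_vector, k):
    """Return the k (vector_id, similarity) pairs with the highest similarity."""
    scored = ((vector_id, cosine_similarity(vector, searched_vector))
              for vector_id, vector in rows)
    return heapq.nlargest(k, scored, key=lambda pair: pair[1])


# Tiny 2-dimensional stand-in for the embedding_vectors table.
rows = [(1, [1.0, 0.0]), (2, [0.0, 1.0]), (3, [1.0, 1.0])]
result = top_k(rows, [1.0, 0.0], k=2)
print(result)
```

In this toy data, vector 1 points in the same direction as the searched vector and ranks first, followed by vector 3 at a 45-degree angle.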

With the current semantic search times, ADX can serve as a storage platform for embedding vectors in RAG (Retrieval Augmented Generation) scenarios and beyond.

We continue to improve vector search performance. Stay tuned!
