- Microsoft Purview Insider Risk Management | Admin Set-up Tutorial

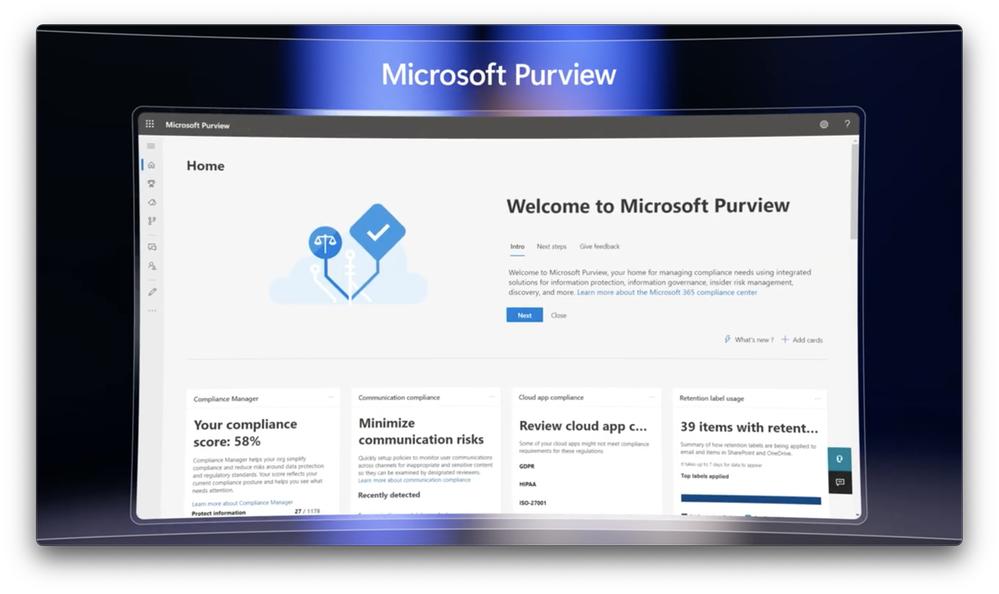

With remote work and a changing data landscape, risk of data theft has reached new heights - Insider Risk Management helps protect against those risks. Watch the step-by-step tutorial for implementing an Insider Risk Management solution for your organization as part of Microsoft Purview.

It's surprisingly simple to build a baseline for managing activity inside your organization - from getting everything running, to setting policy on the types of violations that should raise system alerts, to assigning permissions for those who should have oversight and the level of incident detail they can see. More advanced settings go further: intelligent detections define unallowed and allowed domains, and risk score boosters detect unusual activities or previous policy violations.

Talhah Mir, from the Microsoft Purview team, joins Jeremy Chapman to show you how to set up Insider Risk Management with Microsoft Purview.

It's easy to get started.

Get Insider Risk Management solutions with actionable insights and policies customized to your organization's needs. See how to implement Purview for the first time.

What’s your appetite for insider risk?

Analyze, customize, and quantify the level of data and communication risk from employees inside your organization with Microsoft Purview. See how to set permissions, customize and create policies, and specify content priority.

Leverage Purview's little-known advanced settings.

Watch our video here.

► QUICK LINKS:

00:00 Introduction

00:57 The best way to get started

01:18 Customize core experiences and insights

02:36 How to implement Purview for the first time

04:00 Set permissions

04:52 Customization

07:24 Create policies

08:00 Specify content priority

09:28 Define how a policy gets triggered

11:53 Two categories of detection

13:02 How to sign up for CS management in Microsoft Purview

► Link References:

Set up a trial of Microsoft Purview: https://aka.ms/purviewtrial

Receive guidance for connecting HR systems: http://aka.ms/HRConnector

► Unfamiliar with Microsoft Mechanics?

• As Microsoft’s official video series for IT, Microsoft Mechanics lets you watch and share valuable content and demos of current and upcoming tech from the people who build it at Microsoft. Subscribe to our YouTube: https://www.youtube.com/c/MicrosoftMechanicsSeries?sub_confirmation=1

• Talk with other IT Pros, join us on the Microsoft Tech Community: https://techcommunity.microsoft.com/t5/microsoft-mechanics-blog/bg-p/MicrosoftMechanicsBlog

• Watch or listen from anywhere, subscribe to our podcast: https://microsoftmechanics.libsyn.com/website

• To get the newest tech for IT in your inbox, subscribe to our newsletter: https://www.getrevue.co/profile/msftmechanics

► Keep getting this insider knowledge, join us on social:

• Follow us on Twitter: https://twitter.com/MSFTMechanics

• Share knowledge on LinkedIn: https://www.linkedin.com/company/microsoft-mechanics/

• Enjoy us on Instagram: https://www.instagram.com/microsoftmechanics/

• Loosen up with us on TikTok: https://www.tiktok.com/@msftmechanics

Video Transcript:

- Up next, we look at how you can implement the privacy-enabled insider risk management solution for your organization. From getting everything running, to setting policy on the types of violations that should raise system alerts, assigning permissions for those who should have oversight, and the level of incident detail that they see and more. And I'm joined again by Talhah Mir from the Microsoft Purview team, for round two now in insider risk management. Welcome back to the show.

- Thank you, Jeremy, it's great to be back.

- So Talhah, last time you were on Mechanics, we gave an overview on the insider risk management solutions that are part of Microsoft Purview. Now, if you missed that show, you can check it out on our playlist at aka.ms/InsiderRiskMechanics. Today we want to go deeper on how you can implement the insider risk management solution as one of two solutions that are featured in the insider risk category. So, what's the best way to get started?

- The important thing about insider risk is that you have to align it to your risk appetite, everything from laws and regulations to your organization's culture. And the great thing about this solution is that you can configure it to your specific needs.

- That said, though, before we get into the implementation guidance, why don't we remind everyone about the core experiences and insights that you can customize here?

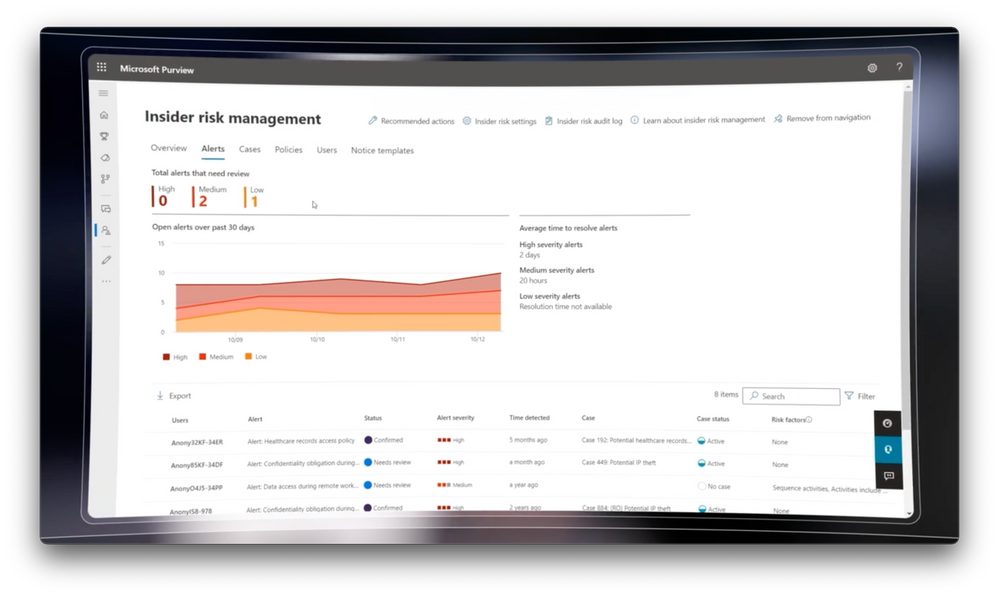

- Sure, so the last time I was here, I showed you a few core experiences which I'll be showing you how to implement today. The first was analytics, which gives you an aggregate view of anonymized user activities to help you quantify the level of risk inside of your organization and see data exfiltration patterns. Next are the alerts on incidents, which as you mentioned, are core to insider risk management. These are based on the rules you set. Importantly, we're able to combine rules and machine learning to sequence and stitch together the series of activities around an incident for full context on what is happening, which helps you to capture intent, as well as tie it to a pivotal event, like a resignation. And we also give you statistical system-level insights that look at cumulative activities. Our aggregate detector, which is an assessment in aggregate of the level of risk under the cumulative exfiltration activities card, exposes activities over time that accumulate risk. Central to everything is privacy, with features like pseudonymization and role-based access control. And we have escalation workflows as part of the service, to bring the right teams together to take action.

- And I really think a lot of people watching are probably going to be surprised by the simplicity of really getting the service configured, you know, for your needs. So why don't we start with how you'd implement the solution then for the first time?

- Sure, so there are a couple of things here. First, if you don't have a subscription that includes the solution, you can get a trial running by going to aka.ms/purviewtrial. Next, you'll start out on the Microsoft Purview insider risk management solution overview page. Here, we list out all the steps needed to get everything running and create your first policy. You'll also need to make sure that auditing is enabled for your tenant. This is normally on by default, but to be sure it's enabled in your tenant, from the left navigation, go to audit. And if you see this dialog to start recording, that means that it's not enabled and you can go ahead and enable it from here. Next, an option we recommend is to turn on analytics. This helps you to decide and prioritize the types of policies you want to put in place. All you need to do is go to settings, then analytics, then toggle the control to on to enable it. This will generate insights for your tenant, which can take up to 48 hours. But if we fast forward in time, you'll see that we've now started getting insights in our tenant. In fact, something we just announced is the ability to set up a default policy from here with just a few clicks. These default policies in the system will use common settings to help you get started even faster.

- And so now you can start to build a baseline, really, for insider risk activities in your organization. So how do you then go about setting up permissions?

- So this is also featured in our recommended actions. As an admin on Microsoft Purview, you'll have to assign the right people the right roles to use insider risk management. To do that, you'll go to permissions on the left navigation again, select Microsoft Purview solutions, roles. You can narrow the list down by entering insider risk management in the search filter. And you'll see quite a few different role types. When you get started, you'll want to add yourself to the global insider risk management role group. In my case, I'm already a member, but if I wasn't, I'd just need to hit edit. And later when you assign other people roles, you can use these options to give everyone the correct level of access based on what they should be permitted to see. And even reduce your own access to perhaps just an admin.

- Okay, so now you've got the solution running, you've kind of established your initial set of permissions. Now you can move on to the customization.

- So the great thing with this is, for your Windows users, there are no agents to deploy or proxies to set up. And we have agents for your users with Macs. As I mentioned, insider risk management has privacy built in with pseudonymization to mask user names, and this is on by default. Insider risk is all about indicators, and within policy indicators, you can choose the indicators that are relevant for your organization. And because of our privacy-first design, these are opt-in only, so you can determine exactly what activities are detected. There are a lot of options, as you can see here, from Office, Exchange, SharePoint, OneDrive and Teams to name a few, and you can check the boxes you want. In my case, to save time, I'll hit "select all" for this category. Below Office, you'll find the device indicator category. Again, there are no agents to deploy for Windows, and we support macOS with an agent. If you see this section is grayed out, it means your devices aren't onboarded yet. I'll just select these three in this case for some of the most relevant activity types for me. We're also integrated with Microsoft Defender for Endpoint and Microsoft Defender for Cloud Apps. With Defender for Cloud Apps, specifically, the solution extends its visibility into your multi-cloud environment such as AWS, GCP, Salesforce, and more. And these indicators are specific to the healthcare industry and extend into electronic medical records, or EMR, systems like Epic. And finally, there are the risk score boosters, including the anomaly models, which detect if the activity is above the user's usual activity for that day, or if that user has had previous policy violations. The great thing about setting these indicators here and early on is that they'll become your defaults later on when you start to build out your policies. You'll see what I mean once we get to policy creation. Once you're ready, you just need to save your changes and the defaults are all set for your policies.
And there are a lot more advanced settings here you could set, but one thing to point out here in intelligent detections is that you can define unallowed and allowed domains that are the source or destination of the files that people move.
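
To make the allowed and unallowed domain idea concrete, here is a minimal Python sketch of how a file's source or destination might be bucketed. It is an illustration only, not how Microsoft Purview implements intelligent detections: the domain lists, the `classify_domain` function, and the subdomain-matching rule are all assumptions made for this example.

```python
from urllib.parse import urlparse

# Hypothetical lists; in the product these are entered in the
# intelligent detections settings, not in code.
UNALLOWED_DOMAINS = {"personal-filesharing.example"}
ALLOWED_DOMAINS = {"contoso.example"}


def classify_domain(url: str) -> str:
    """Return 'unallowed', 'allowed', or 'unclassified' for a URL."""
    host = (urlparse(url).hostname or "").lower()

    def matches(domains: set) -> bool:
        # Assumption: a listed domain also covers its subdomains.
        return any(host == d or host.endswith("." + d) for d in domains)

    # Check the unallowed list first so it wins if a domain is on both lists.
    if matches(UNALLOWED_DOMAINS):
        return "unallowed"
    if matches(ALLOWED_DOMAINS):
        return "allowed"
    return "unclassified"
```

In this sketch, an upload to a subdomain of a listed unallowed domain is treated as unallowed, and anything on neither list stays unclassified.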

- I've got to say, this seems a lot easier to set up than other UEBA solutions out there, with everything just built in. So, now that you've configured kind of what to look for, what's next?

- So now it's time to create your first policy. Policies are where conditions come together with the indicators we just defined to help you detect and investigate potential risk. I'll jump into policies and create my first one. We make it super easy using policy templates. Here, we can see top categories for data theft, data leaks, security policy violations and health record misuse. For my first policy category, I'll choose data leaks. We have templates for general data leaks, leaks from priority users and leaks from disgruntled employees. I'll keep general selected. Give it a name. Here, I can scope this to users. At first, you'll probably want to start small and grow the list of users or groups over time. So I'll just choose a couple of users here, Adele and Allen. Next, I can choose which content I want to prioritize, because not all content is created equal. So I can choose to pick certain SharePoint sites, content with a sensitivity label or sensitive information, or even certain file extensions, or decide to treat all content equally. In my case, I'll specify the content priority. Next, it'll ask which SharePoint sites I want to prioritize. For example, you may have sites dedicated to a secret project, sales team sites with customer information, or research and development design sites. Then you can choose your sensitive information types. For example, if you're already using data loss prevention, this will look for the same shared set of options. You can see things like bank routing numbers, ID cards, passports, credit cards, and more. Next, you can use sensitivity labels that have already been applied using Microsoft Purview Information Protection. And the last category for prioritization is based on file extensions. This is great for things like 3D design files, like OBJ and STL. Or for example, if your contracts are standardized as PDFs, then the location along with the file type can be very effective.
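
The four content priority categories described above (SharePoint sites, sensitive information types, sensitivity labels, and file extensions) can be pictured as a simple matching step. The Python sketch below is a hypothetical model for illustration: the `FileActivity` and `ContentPriority` classes, their field names, and the example site URL are assumptions, not part of any Microsoft Purview API.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from pathlib import PurePosixPath


@dataclass(frozen=True)
class FileActivity:
    """One observed file activity (hypothetical shape)."""
    site_url: str
    file_name: str
    sensitivity_label: str | None = None
    sensitive_info_types: frozenset[str] = frozenset()


@dataclass
class ContentPriority:
    """The four prioritization categories named in the walkthrough."""
    sites: set[str] = field(default_factory=set)
    sensitive_info_types: set[str] = field(default_factory=set)
    sensitivity_labels: set[str] = field(default_factory=set)
    file_extensions: set[str] = field(default_factory=set)

    def reasons(self, activity: FileActivity) -> list[str]:
        """List which priority categories this activity matches."""
        matched = []
        if any(activity.site_url.startswith(site) for site in self.sites):
            matched.append("site")
        if self.sensitive_info_types & activity.sensitive_info_types:
            matched.append("sensitive_info_type")
        if activity.sensitivity_label in self.sensitivity_labels:
            matched.append("sensitivity_label")
        # Compare extensions case-insensitively and without the leading dot.
        extension = PurePosixPath(activity.file_name).suffix.lower().lstrip(".")
        if extension in self.file_extensions:
            matched.append("file_extension")
        return matched
```

A file such as `Housing.STL` stored under a prioritized project site would match on both `site` and `file_extension` here, which mirrors the point that location combined with file type can be an effective signal.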

- And that's a great point. So now you've got all the rules set up to look for and prioritize the content that you really care about.

- We have. And now the next step is to define how your policy gets triggered. And this allows you to define the precise conditions under which a user that is in scope for a policy gets evaluated for risk. First, I can choose to trigger based on a DLP policy match and what it has defined for rules and thresholds. Alternatively, for this general data leak policy, I can also choose to base the trigger on when a user exfiltrates data in a way that poses higher risk. Then, since many of these activities are normal in a daily course of business, I can set thresholds for the count of these activities that might be suspicious. I can use the defaults or I can customize those to my needs. You can see these numbers are pretty high to begin with to help you manage noise. There are also options to customize various dimensions of an activity type. Let's take using a browser to upload files to the web. Not only could I trigger on a fixed number of files being uploaded to any website, I can get specific and instead consider only files if they contain sensitive information, or files that match my priority content conditions, or files that are uploaded to a domain I defined as being unallowed. Lastly, also consider the fact that people tend to work differently based on their roles, sometimes even within the same group or department. So this last option uses machine learning to gauge activity that is above the user's usual activity in a day. And by the way, to add triggers based on an HR event, like the employee resignation we showed, you can follow the guidance for connecting your HR system at aka.ms/HRConnector.
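
The browser upload example above can be sketched as a set of per-dimension count thresholds. This is a simplified Python model under stated assumptions: the `Upload` and `UploadThresholds` classes and every threshold number are hypothetical values chosen for illustration, not the product's defaults or its actual trigger logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Upload:
    """One file uploaded through the browser (hypothetical shape)."""
    domain_unallowed: bool = False
    has_sensitive_info: bool = False
    is_priority_content: bool = False


@dataclass(frozen=True)
class UploadThresholds:
    """Hypothetical daily counts at which each dimension triggers."""
    any_file: int = 50
    sensitive: int = 10
    priority: int = 5
    unallowed_domain: int = 3


def triggered_dimensions(uploads, thresholds):
    """Return the names of the dimensions whose daily count meets its threshold."""
    counts = {
        "any_file": len(uploads),
        "sensitive": sum(u.has_sensitive_info for u in uploads),
        "priority": sum(u.is_priority_content for u in uploads),
        "unallowed_domain": sum(u.domain_unallowed for u in uploads),
    }
    return [name for name, count in counts.items()
            if count >= getattr(thresholds, name)]
```

With these example numbers, seven uploads in a day stay well under the general threshold, but if three of them go to an unallowed domain, the narrower `unallowed_domain` dimension still triggers. That is the design idea in the walkthrough: a high general count keeps noise down while tighter dimensions catch the riskier activity.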

- Okay, but back to the topic of thresholds. So how would we think about, you know, defining these types of thresholds, especially in the cases where we might be just getting started?

- The configurations you set will depend on your organizational risk appetite. Think of this like a funnel. You'll have one set of thresholds to suit everyone in your organization and you'll layer tighter thresholds for higher risk groups for different policies. Let me show you. As I walk through this next section on indicators, I'll explain the different detection categories. I'm now in policy indicators. You'll remember these from before, in the insider risk management settings. And you can see that everything I chose before is preselected here. And this is where you can make adjustments for your policy to remove indicators from the list. Notice the indicators we didn't choose before are disabled. So you can't accidentally re-add them here. Instead, you'd have to go back to settings to enable them as options. This is a safety and privacy control to avoid including the wrong signals or indicators for your policies. Now, broadly speaking, we have two categories of detections in the system, rule-based and statistical. Rule-based detections are for single activities with varying thresholds. And we have intelligent sequence detection that looks for chained events that commonly show intent. Below that, we see statistical detectors to look for statistical deviations from your organizational norms, for aggregate activities over the last 30 days. And then here with boosters, this time we're looking at unusual activity for an individual user for this specific policy. Then once you have confirmed the indicators you want, one final option is to define the thresholds for your selected indicators, or you can just keep the defaults. And once you've finished there, you'll see a confirmation summary screen with everything you've configured. And by hitting submit, that will activate the policy. And it can take around 24 hours for your policy matches to show up.

- Okay, so now your policy is configured and by the way, just like any other policy, you'll monitor it to ensure that there isn't excessive noise or conversely that you're not picking up enough signal, then tune your settings as needed to hit the right balance. So Talhah, for anyone who's watching and wanting to get this set up, what do you recommend?
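
The difference between the rule-based and statistical detection categories described above can be shown with a small Python sketch. It is a rough illustration only: the function names, the use of a standard-deviation test, and the `min_sigma` cutoff are assumptions for this example, and the product's actual models are not described in the walkthrough.

```python
from statistics import mean, pstdev


def rule_based(count: int, threshold: int) -> bool:
    """Rule-based detection: a single activity count against a fixed threshold."""
    return count >= threshold


def deviates_from_baseline(value: float, baseline: list, min_sigma: float = 3.0) -> bool:
    """Statistical detection: is value far above the baseline's typical level?

    Assumption: "far above" means at least min_sigma standard deviations
    over the baseline mean.
    """
    mu = mean(baseline)
    sigma = pstdev(baseline)
    if sigma == 0:
        # A flat baseline has no spread, so any increase counts as a deviation.
        return value > mu
    return (value - mu) / sigma >= min_sigma
```

The same `deviates_from_baseline` check can model both ideas from the transcript, depending on the baseline passed in. Feeding it organization-wide 30-day aggregates approximates the statistical detectors, while feeding it one user's own daily counts approximates a booster that looks for activity above that user's usual level.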

- Sure, if you're using Microsoft 365 E3 and want to try out insider risk management, go to aka.ms/PurviewTrial. And to learn more about insider risk management, check out aka.ms/InsiderRiskDocs, or go to our Ninja page at aka.ms/InsiderRiskNinja.

- Thanks so much, Talhah, for joining us today and sharing the details for getting insider risk management set up. Next time on our series, which you can find at aka.ms/InsiderRiskMechanics, we're going to go deeper on setting up communication compliance to help manage communication risk. So keep checking back to Microsoft Mechanics for all the latest updates. Hit subscribe to our channel if you haven't already, and as always, thank you for watching.
