ATP sensor consumes most of the server CPU (60%)

Dec 23 2018 06:15 AM

After installing the ATP sensor on a domain controller, we observed that the microsoft.tri.sensor.exe component consumes more than 60% of the CPU on a 16-core machine.

Dec 23 2018 06:53 AM

The sensor (if installed on a DC) makes sure that at least 15% of RAM and CPU are free at all times.

Otherwise, it tries to use any free resources to reduce data latency.

If the machine gets busier, the limits are adjusted and the sensor uses fewer resources.

(It auto-adjusts within about 10 seconds.)

Plus, if it's a new deployment, and this instance is a synchronizer candidate, it's expected to work harder during the first few hours until the initial AD sync completes.

For a standalone sensor, it assumes it can use all the machine resources without limits.
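The resource-limit behavior described above can be illustrated with a small sketch. This is not the sensor's actual implementation: the function name `sensor_cpu_budget` and the model (the sensor may use whatever remains after other processes and the 15% reserve) are assumptions made only to show the arithmetic.

```python
RESERVED_FREE_PERCENT = 15.0  # from the thread: at least 15% stays free on a DC


def sensor_cpu_budget(other_usage_percent: float, standalone: bool = False) -> float:
    """Return the CPU share (in percent) a sensor could use under this simplified model."""
    if not 0.0 <= other_usage_percent <= 100.0:
        raise ValueError("other_usage_percent must be between 0 and 100")
    if standalone:
        # Assumption: a standalone sensor may use everything that is left.
        return 100.0 - other_usage_percent
    # Assumption: on a DC the budget shrinks as other load grows, never below zero.
    return max(0.0, 100.0 - RESERVED_FREE_PERCENT - other_usage_percent)


if __name__ == "__main__":
    for other in (10.0, 40.0, 90.0):
        budget = sensor_cpu_budget(other)
        print(f"other load {other:.0f}% -> sensor budget {budget:.0f}%")
```

Under this model, a lightly loaded DC leaves the sensor a large budget, which would be consistent with the high sensor CPU reported in the original question.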

Dec 31 2018 01:08 AM

What Eli described is documented here, under the "Resource limitations" bullet item.

Jan 08 2019 05:34 AM

Dear all,

Thanks for your support. After updating the sensor to version 2.60.6070.18946, the CPU issue has been resolved.

Mar 03 2021 12:52 AM

@Eli Ofek We are facing very high CPU usage on one of our DCs. The sensor process seems to occupy most of the resources on this DC. Could you please share some insight on remediating this? Also, we are seeing a large number of 8.8.8.8 connections on this server; we are not sure whether this is linked. Any leads would be appreciated, as always!

Mar 03 2021 01:28 AM

@mesaqee, it seems that the total CPU on the machine is 74%, so technically speaking there is no issue, and the sensor is not even throttling at this point.

Such consumption might be expected in high-traffic scenarios.

What did the sizing tool have to say about this machine?

What is the hardware spec? What are the busy packets/sec and max packets/sec?

The sensor itself won't initiate connections specifically to 8.8.8.8, but if you are running a DNS service on the machine that accepts connections from 8.8.8.8, then it is expected that the sensor will reach back to this endpoint to try to resolve it. Most likely it's not related to the CPU usage.
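The reasoning about the 74% total CPU figure in this reply can be written out as a short calculation. It is only a sketch: `free_headroom` is a hypothetical helper, and it assumes that throttling becomes relevant once free CPU drops below the 15% reserve mentioned earlier in the thread.

```python
RESERVED_FREE_PERCENT = 15.0  # reserve quoted in the thread for sensors on a DC


def free_headroom(total_cpu_percent: float) -> float:
    """Free CPU beyond the reserved share; negative means the reserve is being eaten into."""
    return (100.0 - total_cpu_percent) - RESERVED_FREE_PERCENT


if __name__ == "__main__":
    headroom = free_headroom(74.0)
    print(f"headroom above the reserve: {headroom:.0f} points")
```

With 74% total CPU, 26% is free, which is 11 points above the reserve, so under this assumption the sensor has no reason to throttle yet.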

Mar 03 2021 02:07 AM

Dear @Eli Ofek,

The server is a VM; the complete hardware specs are attached. Busy packets/sec is 511 and max packets/sec is 32,295.

Please see the complete sizing tool output below:

| DC | Sensor Supported | Failed Samples | Max Packets/sec | Avg Packets/sec | Busy Packets/sec | Busy Packets/sec Start Time | Busy Packets/sec End Time | Min Avail MB | Avg Avail MB | Busy Avail MB | Busy RAM Start Time | Busy RAM End Time | Total MB | Max % CPU Time | Avg % CPU Time | Busy % CPU Time | Busy CPU Start Time | Busy CPU End Time | Logical processors | Processor Groups | Core Count | VM Indicator | AD Site | Time Zone Name | Is DST | OS Caption | OS Build Number | OS Installation Type | OS Server Levels |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| XXXXX | Yes, but additional resources required: +1GB; +1 core | 8 | 32,295 | 105 | 511 | 19:51:52 | 20:21:50 | 2,366 | 4,349 | 3,323 | 17:12:16 | 17:42:14 | 8,191 | 100 | 50 | 98 | 2:17:12 | 2:47:30 | 2 | 1 | 2 | VMWare | XXXXX | (UTC+08:00) Beijing, Chongqing, Hong Kong, Urumqi | | Microsoft Windows Server 2019 Standard | 17763 | Server | ServerCore; ServerCoreExtended; Server-Gui-Mgmt; Server-Gui-Shell |

Mar 03 2021 06:58 AM

While the busy packets figure is low, the max is pretty high...

Is the high CPU you noticed constant, or does it spike at certain hours?
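The gap between the two figures from the sizing tool output above (busy packets/sec 511, max packets/sec 32,295) can be quantified with a quick calculation. The helper names below are hypothetical, and the ratio is only an illustrative indicator of spikiness, not a metric the sizing tool itself reports.

```python
def parse_count(text: str) -> int:
    """Convert a sizing-tool figure such as '32,295' to an integer."""
    return int(text.replace(",", ""))


def peak_to_busy_ratio(max_pps: str, busy_pps: str) -> float:
    """Ratio between the highest sample and the busy-period figure."""
    return parse_count(max_pps) / parse_count(busy_pps)


if __name__ == "__main__":
    ratio = peak_to_busy_ratio("32,295", "511")
    print(f"peak is about {ratio:.0f}x the busy figure")
```

A peak roughly 63 times the busy figure suggests short traffic bursts rather than sustained load, which is why it matters whether the high CPU is constant or limited to certain hours.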

Mar 03 2021 08:24 AM

Mar 04 2021 03:08 AM

Mar 23 2021 02:59 AM

Dear @Eli Ofek,

The issue is still there even after increasing the server capacity.

We re-ran the sizing tool, and here are the results:

| DC | Sensor Supported | Failed Samples | Max Packets/sec | Avg Packets/sec | Busy Packets/sec | Busy Packets/sec Start Time | Busy Packets/sec End Time | Min Avail MB | Avg Avail MB | Busy Avail MB | Busy RAM Start Time | Busy RAM End Time | Total MB | Max % CPU Time | Avg % CPU Time | Busy % CPU Time | Busy CPU Start Time | Busy CPU End Time | Logical processors | Processor Groups | Core Count | VM Indicator | AD Site | Time Zone Name | Is DST | OS Caption | OS Build Number | OS Installation Type | OS Server Levels |
| --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- | --- |
| XXXXX.local | Yes | 0 | 2,264 | 139 | 181 | 09:51:47 | 10:06:45 | 4,623 | 5,193 | 4,725 | 04:03:52 | 04:18:49 | 10,239 | 100 | 11 | 69 | 12:58:17 | 13:13:20 | 4 | 1 | 4 | VMWare | HXXXX | (UTC+08:00) Beijing, Chongqing, Hong Kong, Urumqi | | Microsoft Windows Server 2019 Standard | 17763 | Server | Remote Registry Query Failed |

I would appreciate any further insights on this.

Thanks,

Saqib

Mar 24 2021 01:48 AM

Mar 24 2021 02:39 AM

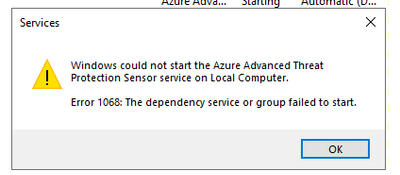

@Eli Ofek Well after upgrading the capacity the usage was pretty high and we were even getting the "Some network traffic could not be analyzed" alert. However, we are now unable to see the trend as the Sensor service is not starting. On trying to manually start it, we are getting the below error:

We have tried rebooting the server and re-installed the sensor, but the service is still not running. Shall we contact support to have a detailed look or you got any further suggestions?

Thanks,

Saqib

Mar 24 2021 02:41 AM

Also make sure the WmiApSrv service is starting correctly.

If that gives no clues, you will need to open a support ticket to move forward...
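One way to check the WmiApSrv service state is to read the output of the Windows `sc query` command. The sketch below is an illustration only: the helper names and the sample output are assumptions, the parser expects the English-language layout of `sc query`, and `query_service_state` only works when run on Windows.

```python
import re
import subprocess
from typing import Optional


def parse_sc_state(sc_output: str) -> Optional[str]:
    """Extract the state name (e.g. 'RUNNING', 'STOPPED') from `sc query` output."""
    match = re.search(r"^\s*STATE\s*:\s*\d+\s+(\w+)", sc_output, re.MULTILINE)
    return match.group(1) if match else None


def query_service_state(name: str) -> Optional[str]:
    """Run `sc query <name>` (Windows only) and return the reported state."""
    result = subprocess.run(["sc", "query", name], capture_output=True, text=True)
    return parse_sc_state(result.stdout)


# Illustrative sample, not captured from the machine discussed in this thread.
SAMPLE_OUTPUT = """
SERVICE_NAME: WmiApSrv
        TYPE               : 10  WIN32_OWN_PROCESS
        STATE              : 1  STOPPED
        WIN32_EXIT_CODE    : 0  (0x0)
"""

if __name__ == "__main__":
    print(parse_sc_state(SAMPLE_OUTPUT))
```

On the DC itself, `query_service_state("WmiApSrv")` would return the live state; the Windows event log remains the place to look for the reason if the service fails to start.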

Feb 26 2024 07:47 AM

Feb 26 2024 08:01 AM

@afar Your description is too generic to spot the root cause.

This is something that would best be handled via a support ticket: given the machine details, we can track its telemetry remotely to better understand what is happening and why.

General note: assuming this is not a standalone sensor (in which case it's normal for it to consume all available resources), the sensor is designed to throttle its CPU and memory consumption to make sure the server has at least 15% free CPU and memory at all times.

So while high CPU can be "by design" if traffic on the machine is high, exhaustion should not really happen unless something goes badly wrong and the resource manager fails to do its job, which is unlikely.