- Subscribe to RSS Feed

- Mark Discussion as New

- Mark Discussion as Read

- Pin this Discussion for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Mar 07 2021 03:14 AM

I'm working on a project. I need to track the patient movements within 14 days if he/she went to same service within 14 days so it will appear with different color as the sheet contains around 16k member which is too hard to track.

- Labels:

-

Excel

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Mar 07 2021 11:18 AM

@MarinaSaadallaCould you share a sample of your data, without disclosing any private information? Would be helpful to see what you are working with, in order to provide a solution. A few hundred rows of data might be sufficient. And if you could indicate what summary you would expect to see for these would be even better.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 05 2021 02:14 PM

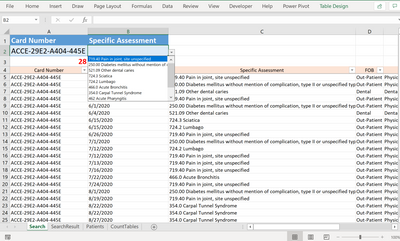

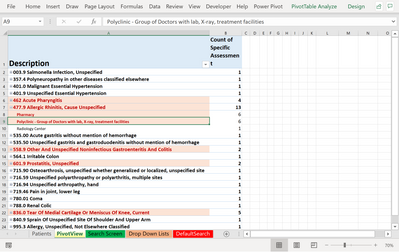

this is a sample from my project.

as you can see we have the id no. and the service that the patient used with the cost, the date and the provider for the service.

what I'm expecting: to find the abuser/misuser of the service by the no. of visits to the provider, dates are near to each other or going for the same diagnosis with high no. of frequency anything weird i can find will help

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 05 2021 09:39 PM

@MarinaSaadalla You send a nice file with over 80 thousand records. I cannot possibly oversee what kind of data you have in there. But perhaps a few tips to get started. You may want to split the P.O.ID (column Q) so that you have the PO number and the year. And then perhaps also split the Specific Assessment (column U) in Code and Description.

Then you could do a first summary (count and cost) by P.O. number (or whatever column identifies an individual), Assessment code and Date, using a pivot table. But the number of POs and Assessment codes is quite big, so perhaps you need start by counting the number of visit per PO per month. That could eliminate most of them. Then you focus on those POs that have many occurrences, as the others are probably "not weird". But that's for you to judge.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 06 2021 04:53 PM

@Riny_van_Eekelen thank for your fast response ... i made the sample more simple may it becomes more clear for you to help me.

thanks in advance

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 06 2021 09:35 PM

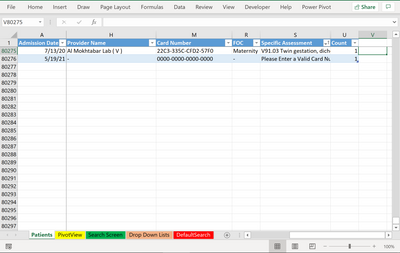

@MarinaSaadalla Thanks! I assume that the Card numbers represent the patients. I created a pivot table that counts the number of visits per card number, per assessment, per day. Showing only those that had more than 1 visit in total. That limits the list to 10 patients, one of which had 3 visits within two days. Up to you to judge the weirdness.

Now, in your real file with 68000 records, you could start in the same way, but vary the filtering limits to limit the output.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 07 2021 11:28 PM

I filtered it the old fashioned way much faster

I created 2 helper columns at the right most side to filter in:

1. any dates that are duplicates

2. by Assessment

Then you can spot check to see if anything interests further investigation or you can filter it by card to view the details of the multiple transactions on that same date. Let us know if that helps

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 09 2021 02:42 PM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 11 2021 05:06 PM

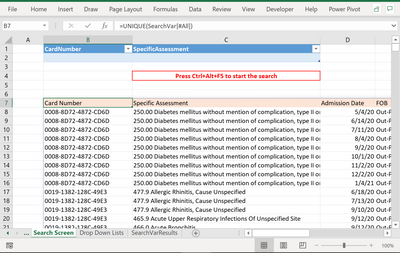

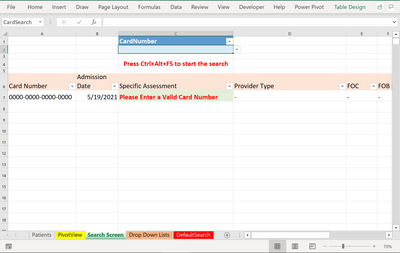

@MarinaSaadalla Here's a tool that might be helpful. You enter the Card Number and it will list all transactions related to the card:

Hope that helps you in your project.

Cheers

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 16 2021 03:01 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 16 2021 03:45 AM

@Riny_van_Eekelen I found highlighted card numbers in the data sheet, how did you do it ?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 16 2021 03:50 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 16 2021 04:10 AM

@MarinaSaadalla You mean the ones in col F on the Data sheet? I just applied conditional formatting to highlight duplicate card numbers. Can't really remember why I did that, though.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 16 2021 05:51 AM

@Riny_van_Eekelen Thank you .. really I appreciate your help.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 16 2021 05:52 AM

your effort is appreciated.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 16 2021 10:03 PM

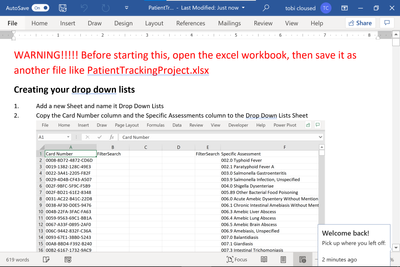

Here's the Instructions and the reference workbook that go with it:

Let me know if you have questions

Cheers

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 16 2021 10:06 PM - edited May 17 2021 01:15 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

May 19 2021 04:54 PM

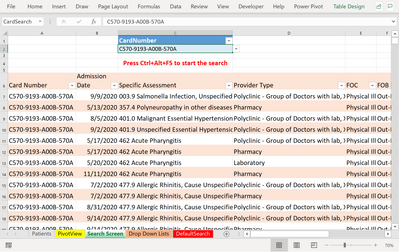

I removed the Specific Assessments search box since it seems to make excel crash when left empty, and when both drop downs are empty excel will just stop responding

I was looking at the tool and made some improvements on it for example:

If you have a large hit, a card number with a long list

I made a pivot table so when you have something like this you can switch to pivot table view and immediately pinpoint what you need to look at:

when you're doing a search in the search sheet you press ctrl+alt+F5

when you go to the pivot sheet to update the pivot table press ctrl+alt+F5

I'm not sure why it does not update the pivot table when ctrl+alt+F5 should update queries and pivot tables, you would have to inquire with uncle MS about that

Also when no card is selected, i set it up to search by default a card number that is

0000-0000-0000-0000

you would have to insert that record at the end of the report list in the Patients sheet

like so: