- Subscribe to RSS Feed

- Mark Discussion as New

- Mark Discussion as Read

- Pin this Discussion for Current User

- Bookmark

- Subscribe

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 05 2022 06:30 AM

Help please.

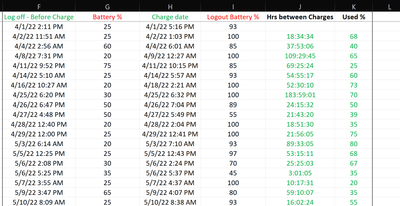

I have four columns of data with 50k lines. Col1 has an equipment identifier (23 units), cols2 & 3 contain datetime codes for equipment login/logout and col4 holds battery level percentages. The table is sorted by Equipment ID then chronologically top to bottom by login datetime. The figures in col4 descend from between 90% & 100% to “around” 20%, with multiple login/logout events between, at which point a recharge/change event occurs and the next cell (below) will be back at between 90% & 100%.

Need to determine the time period (hrs) and actual percentage used between battery change/charge events.

Can this be achieved with the use of a formula or would a macro be required?

- Labels:

-

Formulas and Functions

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 05 2022 08:39 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 05 2022 08:48 AM

I do have a sample sheet but I don't know how to attach it here.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 05 2022 09:09 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 05 2022 09:16 AM

Interesting that you have a drag and drop option below the 'Hint @ links to members' note! Whereas I do not?? Any idea?

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 05 2022 09:20 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 05 2022 09:26 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 05 2022 11:56 AM

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 05 2022 03:55 PM

I think this might work for you. Adjustments can be made as needed.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 06 2022 01:26 AM

Thank you for this but if it were only that easy, I think even I could have done it. I didn't explain my requirement very well I don't think. Take a look at the attached; it will describe my problem.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 06 2022 12:30 PM

SolutionThis was a fascinating project because MAP was bonking out (I7 processor, 32 GB RAM) with this large data set. I had used MAP to identify the rows where the calculations would run and to help with the subtraction. MAP/INDEX/ROW seems to have limits. I did not try this with insider functions.

I went with simpler dynamic arrays. Ultimately, this data set has to be paired down to perform the calculations.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 08 2022 10:03 AM

Hello Patrick - I've just spent the day working out what you did :)

I have reduced my data down to a month's worth - it was originally an entire quarter. I then made a slight mod because we have an anomaly in our data retrieval system that could mean it is up to 30% out. We just live with it. Other than that it worked very well - Thank you very much - I would never had got to that point on my own.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Accepted Solutions

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

Aug 06 2022 12:30 PM

SolutionThis was a fascinating project because MAP was bonking out (I7 processor, 32 GB RAM) with this large data set. I had used MAP to identify the rows where the calculations would run and to help with the subtraction. MAP/INDEX/ROW seems to have limits. I did not try this with insider functions.

I went with simpler dynamic arrays. Ultimately, this data set has to be paired down to perform the calculations.