- Access advancements in AI with OpenAI GPT3 within the Power Platform


You may already know everything there is to know about what everyone is talking about: OpenAI, Azure OpenAI, ChatGPT, etc. Or you may just want to understand what all these terms mean and whether you can use them in your daily tasks or projects. If the latter describes you, this blog gives you a brief introduction to these advancements in the field of AI and shows how you can leverage them with minimal engineering background.

What is worth understanding:

1. Is OpenAI the same as Azure OpenAI?

No. OpenAI is an organization focused on developing the next generation of AI-powered technology. Its best-known products are powerful models that can perform a wide range of tasks. The models are available for developers to build on and integrate into their systems for increased productivity, automation, creativity, etc.

Azure OpenAI, on the other hand, is a service on Microsoft Azure that provides access to these powerful OpenAI models with an additional security layer. These extended security capabilities give developers confidence in the technology, backed by principles for responsible AI. Azure OpenAI also co-develops the APIs with OpenAI and offers extended language processing functionality through Microsoft's Cognitive Services for Language.

2. Tell me more about these models

GPT Series (GPT-2, GPT-3, GPT-3.5) – Models in this family can understand and generate natural language. With a model that understands your natural language, it becomes much easier to bring your creativity to life in a short amount of time.

Then, what is the difference between GPT-3 and ChatGPT?

This question introduces a concept called 'fine-tuning', a type of transfer learning in which an existing pre-trained model is trained further on a smaller, task-specific dataset so that it performs a task slightly different from its primary one. ChatGPT is a fine-tuned version of a model in the GPT-3.5 series (the successor to GPT-3), adapted to hold a conversation in a chat scenario.

Codex Series – Models in this family not only understand natural language, but also translate it to code. It may be hard to grasp the extent of this capability or how such advances in AI can help in your day-to-day activities, so let me tie it to something you may use every day. GitHub Copilot is a real-world implementation of Codex's ability to understand and predict code snippets in real time.

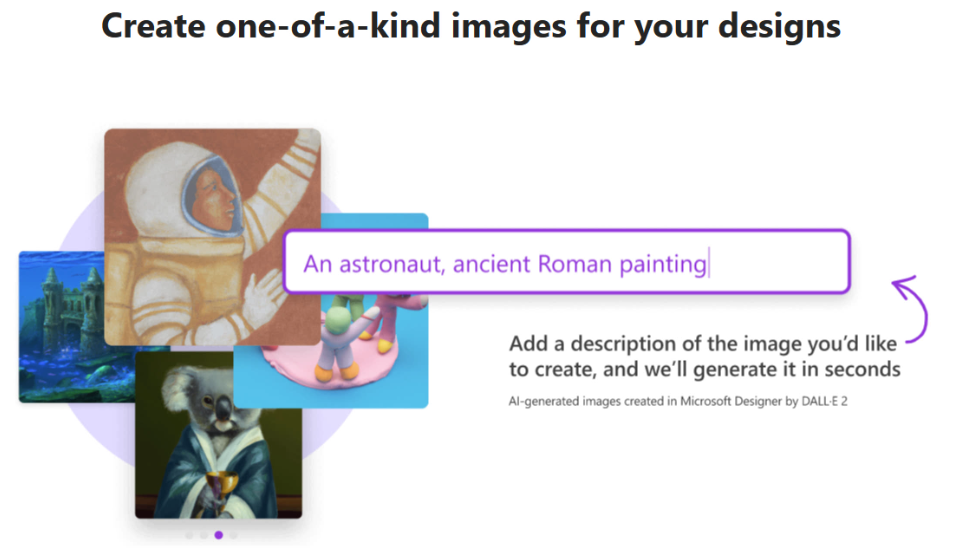

For designers, or anyone working with images, DALL-E can create new images or edit existing image files. Again, to bring this concept home, let's check out a real-world implementation. Professional designers are likely to have interacted with Microsoft Designer, which uses DALL-E to create unique, high-quality AI-generated images for various uses, e.g., social media posts, graphics, and invitations.

What's more exciting about these advances in the field of AI is that they are not just being leveraged by the largest companies. They are also being used in education, by both students and educators, to be more productive and efficient in class.

3. Must I have an extensive programming background to utilize these services?

No. Today, these powerful models are accessible directly from the Power Platform, Microsoft's low-code development environment that allows anyone to build apps, websites, workflows, and chatbots using low-code/no-code tooling, with little or no coding knowledge.

In fact, I use my 'Hello Today App', built on the Power Platform, on my phone every day. It gives me a summary of Twitter trends, so I have a sense of the kind of day to expect at work before I get to the office.

Check out how it works:

Steps:

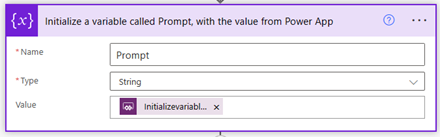

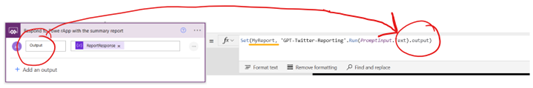

A. Build a Power App that passes the prompt (PromptInput.Text) to the flow.

B. Create a flow that is triggered by the app, receives the prompt value, and makes a call to OpenAI using the OpenAI custom connector.

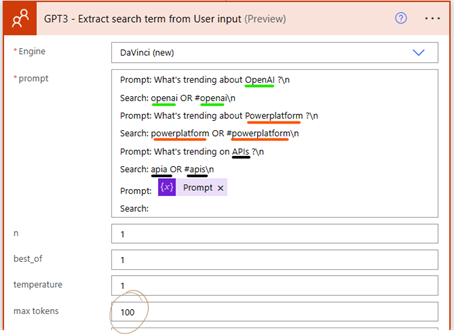
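For readers curious about what a connector call like the one in step B looks like as plain HTTP, here is a minimal sketch that builds (but does not send) a request to OpenAI's completions endpoint. The helper name `build_completion_request`, the GPT-3-era model name, and the `max_tokens` and `temperature` values are assumptions for illustration, not settings taken from the flow in this post, and the model available to you may differ.

```python
import json
import urllib.request

OPENAI_COMPLETIONS_URL = "https://api.openai.com/v1/completions"


def build_completion_request(prompt, api_key, model="text-davinci-003",
                             max_tokens=256, temperature=0.2):
    """Build (without sending) a POST request for a text completion.

    The default model and parameter values are illustrative assumptions.
    """
    body = {
        "model": model,
        "prompt": prompt,
        "max_tokens": max_tokens,    # upper bound on the length of the reply
        "temperature": temperature,  # lower values give more predictable text
    }
    return urllib.request.Request(
        OPENAI_COMPLETIONS_URL,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


# Example: inspect the request a flow would send for the app's prompt.
request = build_completion_request(
    "What is trending about Power Platform today?", api_key="YOUR_API_KEY"
)
print(request.get_method(), request.full_url)
print(json.loads(request.data))
```

In the Power Platform you do not write this yourself; the custom connector wraps the same kind of request behind a form where you fill in the prompt and parameters.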

C. GPT relies on 'in-context' learning: it uses your prompt to try to predict the most probable next text. Here, it receives the prompt from our app and uses it to extract the search term ‘’

Note: Text sent to OpenAI is broken down into tokens, which are short chunks of characters rather than single characters (as a rule of thumb, about four characters of English text per token). The prompt and the response share the model's token limit, so if you exceed it, the response is cut off to fit the supported number of tokens.

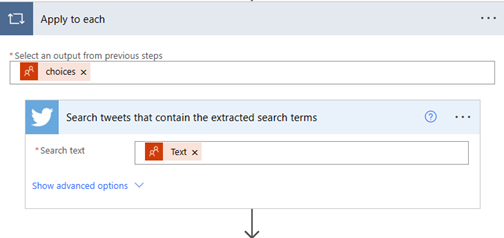
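To make the note on tokens concrete, here is a rough sketch that estimates how many tokens a prompt uses and how much room is left for the reply. It assumes the rule of thumb of about four characters of English text per token; real tokenizers split text into sub-word pieces, so actual counts will differ. The 4,000-token limit and both function names are assumptions for illustration.

```python
import math

CHARS_PER_TOKEN = 4  # rule-of-thumb assumption for English text, not a real tokenizer


def estimate_tokens(text):
    """Roughly estimate the number of tokens in `text`."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def tokens_left_for_reply(prompt, token_limit=4000):
    """Estimate the tokens remaining for the model's reply (never below 0)."""
    return max(0, token_limit - estimate_tokens(prompt))


prompt = "Summarize today's tweets about Power Platform in three bullet points."
print("Estimated prompt tokens:", estimate_tokens(prompt))
print("Estimated tokens left for the reply:", tokens_left_for_reply(prompt))
```

A check like this is useful before sending a long list of tweets: if little room is left for the reply, send fewer tweets or ask for a shorter report.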

D. The next step would be a loop that:

I) Searches for tweets that contain the search term extracted from the previous step.

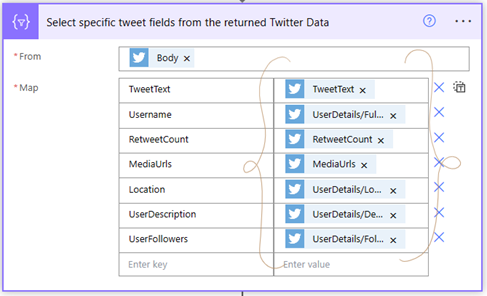

II) Since we do not need all the data returned by the Twitter connector, use the 'Select' operation to map only the fields that will be used in subsequent steps.

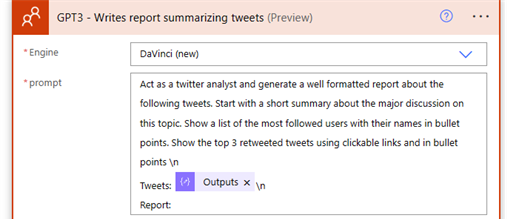

III) Since GPT understands natural language, I give it plain-language instructions to summarize the Twitter response in the format I want for my Twitter report. This is where the magic happens.

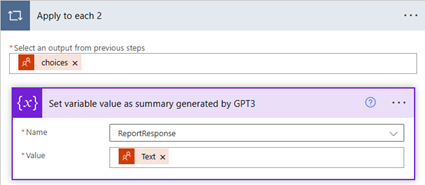

IV) Store the report generated by GPT in a variable.

V) Pass the response 'output' back to Power Apps, where it is stored in a variable called 'MyReport' that can be used throughout the app.
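The logic of steps C and D can be sketched in a few lines of code. This is only an illustration of the idea, not the flow itself: the function names, the tweet field names (`user`, `text`), and the wording of the prompts are assumptions, and the two `fake_` functions stand in for the OpenAI and Twitter connectors so the sketch runs offline.

```python
def select_fields(tweets):
    """Mimic the 'Select' step: keep only the fields used later."""
    return [{"user": t["user"], "text": t["text"]} for t in tweets]


def build_summary_prompt(search_term, tweets):
    """Write plain-language instructions asking GPT for a report."""
    lines = [f"- @{t['user']}: {t['text']}" for t in tweets]
    return (
        f"Summarize the following tweets about '{search_term}' "
        "as a short report with three bullet points.\n" + "\n".join(lines)
    )


def run_flow(prompt_input, complete, search_tweets):
    """Extract a search term, search, select fields, summarize, return the report."""
    search_term = complete(f"Extract the main search term from: {prompt_input}").strip()
    tweets = select_fields(search_tweets(search_term))
    my_report = complete(build_summary_prompt(search_term, tweets))
    return my_report  # the app would store this in 'MyReport'


# Stand-ins so the sketch runs offline; a real flow calls the connectors instead.
def fake_complete(prompt):
    if prompt.startswith("Extract"):
        return " Power Platform "
    return f"Report based on a prompt of {len(prompt)} characters."


def fake_search(term):
    return [{"user": "ada", "text": f"Loving {term}!", "id": 1, "lang": "en"}]


print(run_flow("What are people saying about Power Platform?", fake_complete, fake_search))
```

Passing the connectors in as functions keeps the sketch testable without network access, and it mirrors how each action in the flow hands its output to the next one.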

Chams Sallouh, an MBA lecturer, conducted a free training on GPT, which you can watch to learn how to build your own flow.

