Jul 23 2019 02:15 AM

Hello,

I believe I have found a bug in the U-SQL Extractors.Text() extractor and wanted to check whether anyone else is facing a similar issue.

FYI - This was running fine before 16th July, and had been for more than 8 months. The bug appears to have been present since 17th July.

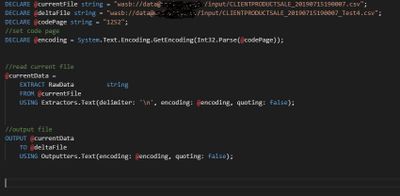

The process is a simple 6-line script that reproduces the issue (see the attached image).

The input file should be roughly 8.3 GB or larger and contain millions of records. My file is 8.3 GB and contains 37.5 million records.

When the process is run, the very first step of the Job Graph (which shows how many records are read) reports 38.8 million records read instead of 37.5 million.

After checking the output file, I found that the process had read only the first 4 GB of the input file and then duplicated those records in the output (in some cases a record appears three times).

I have raised this with Microsoft, but they have not acknowledged the problem yet (it has been 5 days). I just wanted to know whether others are seeing a similar issue.
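For anyone who wants to check their own output for the same symptom, here is a rough sketch of how the duplication could be confirmed outside of U-SQL. It is not the script from the image; the function name `summarize_records` is made up for illustration, and it assumes the file is line-delimited text that can be downloaded and read locally.

```python
import hashlib
from collections import Counter


def summarize_records(path):
    """Return (total_rows, distinct_rows, max_repeats) for a line-delimited file.

    Hypothetical helper: assumes one record per line.
    """
    counts = Counter()
    with open(path, "rb") as handle:
        for line in handle:
            # Store a 16-byte digest instead of the full row to keep memory use down.
            digest = hashlib.blake2b(line.rstrip(b"\r\n"), digest_size=16).digest()
            counts[digest] += 1
    total = sum(counts.values())
    max_repeats = max(counts.values(), default=0)
    return total, len(counts), max_repeats
```

Running this on both the input and the output file and comparing the three numbers should show whether the output has more rows but fewer distinct rows than the input. If the input legitimately contains repeated rows, only the difference between the two results is meaningful.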

Regards

Lohith

- Labels: Azure