Add / Manage libraries in Spark Pool After the Deployment


The official documentation provides guidance on adding and managing libraries while deploying an Apache Spark pool.

This article instead walks through the steps for upgrading library versions after the Apache Spark pool has been deployed.

To list the current library versions, you can use the code below:

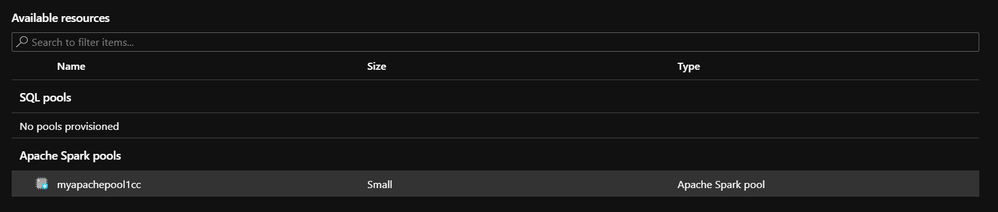
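For readers who cannot see the screenshot, here is a minimal sketch of one way to list installed library versions from a notebook cell. It is not necessarily the exact code shown in the image. It assumes a Python 3.8+ runtime, where `importlib.metadata` is in the standard library; on older runtimes, iterating over `pkg_resources.working_set` is a common alternative. The helper name `installed_packages` is made up for this illustration.

```python
from importlib import metadata


def installed_packages():
    """Return a {name: version} dict for every distribution visible to this interpreter.

    Hypothetical helper for illustration; not part of any Synapse API.
    """
    packages = {}
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        if name:  # skip distributions with missing or malformed metadata
            packages[name] = dist.version
    return packages


# Print the packages in name order, in the familiar name==version form.
for name, version in sorted(installed_packages().items(), key=lambda kv: kv[0].lower()):
    print(f"{name}=={version}")
```

Run in a notebook attached to the Spark pool, this prints one line per installed package, which makes it easy to find the current pandas version before changing anything.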

To change or upgrade library versions, select the Spark pool in the Azure Synapse Analytics workspace.

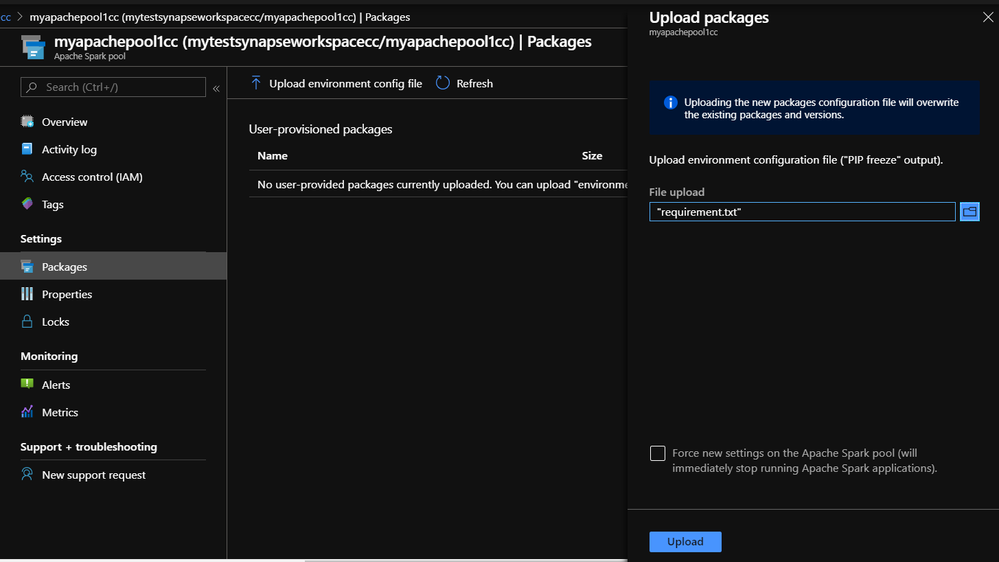

Navigate to "Packages" to see whether any configuration files have already been uploaded.

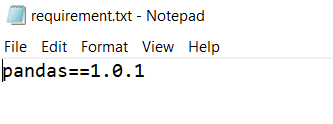

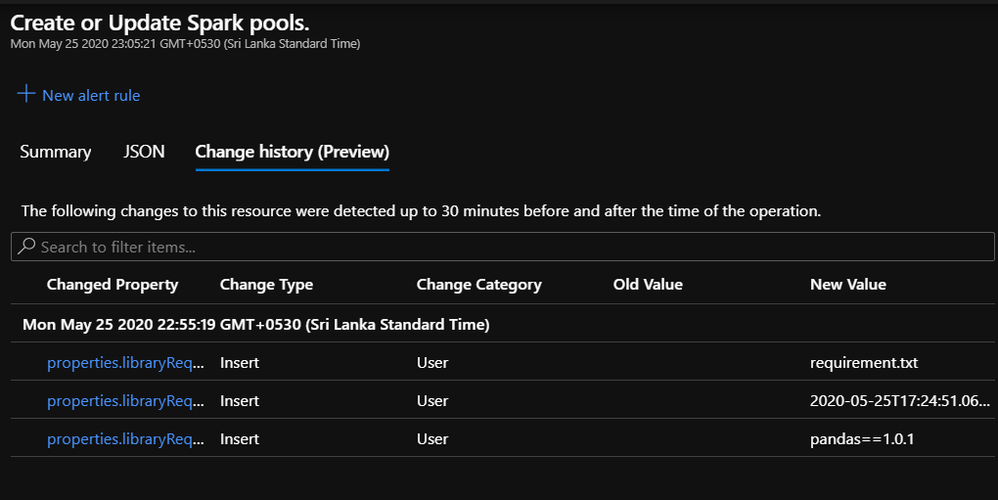

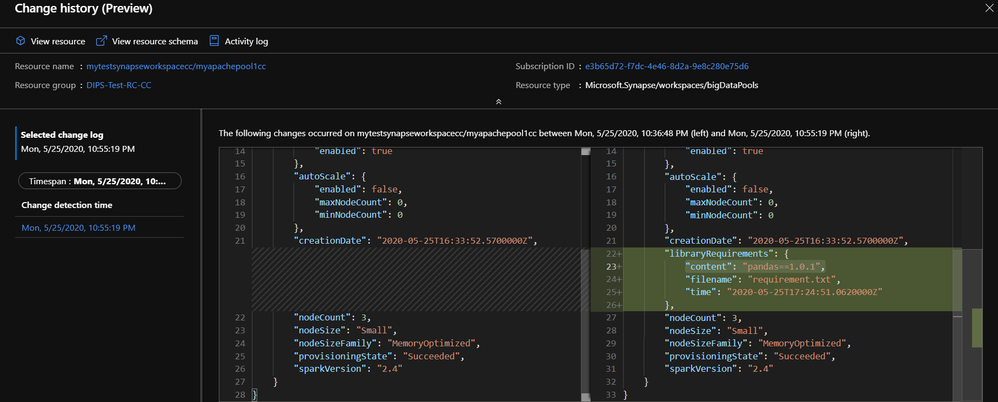

Upload the "requirement.txt" file. In this example, we upgrade only the pandas version.

The default version is pandas 0.23.4.
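The file to upload follows pip's requirements format, with one `name==version` pin per line. As a rough sketch, the file for this example could be generated locally as shown below. The helper `write_requirements` is hypothetical, and the target version 1.0.1 is the one this article upgrades to; the file can just as well be written by hand.

```python
from pathlib import Path


def write_requirements(pins, path="requirement.txt"):
    """Write {package: version} pins to a pip-style requirements file.

    Hypothetical helper for illustration. Returns the lines written.
    """
    lines = [f"{name}=={version}" for name, version in sorted(pins.items())]
    Path(path).write_text("\n".join(lines) + "\n", encoding="utf-8")
    return lines


# Pin only pandas, as in this article's example.
write_requirements({"pandas": "1.0.1"})
```

The resulting file contains the single line `pandas==1.0.1`. Libraries that are not listed in the file are left untouched.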

If you check the box "Force new settings on the Apache Spark pool (will immediately stop running Apache Spark applications)", the configuration is applied to the Spark pool immediately, and any applications currently running on the pool are stopped.

Confirm that the file has been uploaded:

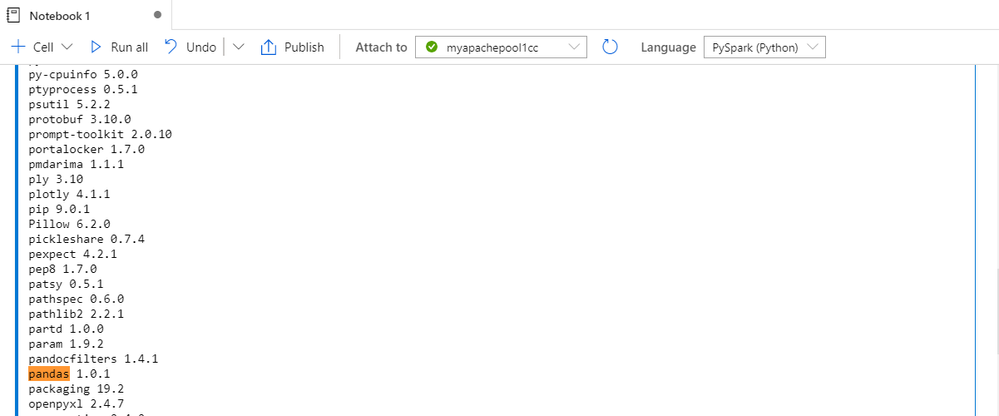

Once the upload is complete, run the code below in the Spark pool to verify the versions:

The version was upgraded to pandas 1.0.1 successfully.
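One way to do this check, sketched here under the same assumption of a Python 3.8+ runtime, is to compare the installed version against the one pinned in the uploaded file. The helper `check_version` is made up for this illustration; simply printing `pandas.__version__` in a notebook cell works as well.

```python
from importlib import metadata


def check_version(package, expected):
    """Return (installed_version, matches_expected) for a package.

    Hypothetical helper for illustration; installed_version is None
    when the package is not installed at all.
    """
    try:
        installed = metadata.version(package)
    except metadata.PackageNotFoundError:
        installed = None
    return installed, installed == expected


# Compare against the version pinned in the uploaded file.
installed, ok = check_version("pandas", "1.0.1")
print(f"pandas installed: {installed}, matches expected 1.0.1: {ok}")
```

If the pool has not yet picked up the new settings, the check still reports the old version; rerun it in a fresh Spark session.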
