ADF - data connect from blob to Azure SQL

Dec 24 2020 04:39 AM

Hi All,

I have four Excel files in Blob Storage that need to be loaded into four different tables in Azure SQL (there are four staging tables and four master tables). I have already created stored procedures in SQL for these four files.

Can anyone help me with an ADF process to load these files automatically on a regular basis?

Thanks

- Labels: Azure Data Integration, Copy Activity

Jan 02 2021 08:39 AM

@Shruthi96 I assume the files contain records and you want to insert those records into SQL, not store the files themselves.

In that case, a simple Copy activity will do the job, and you can create a trigger that fires for each new file created in Blob Storage.
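As a rough sketch of the trigger idea, the Python below builds a dict shaped like the JSON that ADF shows for a storage event trigger. The trigger name `OnNewExcelFile`, the pipeline name `CopyExcelToSql`, the container `incoming`, and the `fileName` pipeline parameter are all made up for illustration, and the storage account resource ID is left as a placeholder.

```python
import json


def build_blob_created_trigger(pipeline_name, container, storage_account_id):
    """Return a dict shaped like an ADF storage event trigger definition."""
    return {
        "name": "OnNewExcelFile",  # hypothetical trigger name
        "properties": {
            "type": "BlobEventsTrigger",
            "typeProperties": {
                # Only react to .xlsx blobs created in the given container.
                "blobPathBeginsWith": f"/{container}/blobs/",
                "blobPathEndsWith": ".xlsx",
                "ignoreEmptyBlobs": True,
                "scope": storage_account_id,
                "events": ["Microsoft.Storage.BlobCreated"],
            },
            "pipelines": [
                {
                    "pipelineReference": {
                        "referenceName": pipeline_name,
                        "type": "PipelineReference",
                    },
                    # Hand the name of the new blob to the pipeline
                    # (assumes the pipeline declares a fileName parameter).
                    "parameters": {"fileName": "@triggerBody().fileName"},
                }
            ],
        },
    }


trigger = build_blob_created_trigger(
    "CopyExcelToSql", "incoming", "<storage-account-resource-id>"
)
print(json.dumps(trigger, indent=2))
```

If the load only needs to run on a fixed schedule rather than per file, a schedule trigger pointing at the same pipeline would be the simpler choice.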

Jan 04 2021 04:33 AM

@DennesTorres Yes, I am looking into parameterizing the datasets. I don't want to create a separate source and sink dataset for each file.

Jan 04 2021 07:35 AM

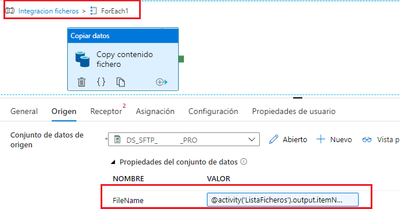

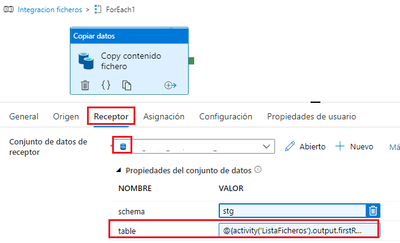

@Shruthi96 You can create parameters in a dataset. In this case, the parameters could be the file name and the table name.
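To make the dataset parameters concrete, here is a minimal sketch in Python that builds dicts shaped like the dataset JSON in ADF's code view. The dataset names (`ExcelBlobFile`, `SqlTable`), linked service names (`BlobStorageLS`, `AzureSqlLS`), the container `incoming`, the sheet name, and the `dbo` schema are assumptions, not details from this thread.

```python
import json


def excel_source_dataset():
    """One Excel dataset for every file; the file name is a parameter."""
    return {
        "name": "ExcelBlobFile",  # hypothetical dataset name
        "properties": {
            "type": "Excel",
            "linkedServiceName": {
                "referenceName": "BlobStorageLS",  # hypothetical linked service
                "type": "LinkedServiceReference",
            },
            "parameters": {"fileName": {"type": "string"}},
            "typeProperties": {
                "location": {
                    "type": "AzureBlobStorageLocation",
                    "container": "incoming",  # hypothetical container
                    # Resolved at run time from the dataset parameter.
                    "fileName": {"value": "@dataset().fileName", "type": "Expression"},
                },
                "sheetName": "Sheet1",  # assumes every workbook uses this sheet
                "firstRowAsHeader": True,
            },
        },
    }


def sql_sink_dataset():
    """One Azure SQL dataset for every table; the table name is a parameter."""
    return {
        "name": "SqlTable",  # hypothetical dataset name
        "properties": {
            "type": "AzureSqlTable",
            "linkedServiceName": {
                "referenceName": "AzureSqlLS",  # hypothetical linked service
                "type": "LinkedServiceReference",
            },
            "parameters": {"tableName": {"type": "string"}},
            "typeProperties": {
                "schema": "dbo",  # assumes all staging tables live in dbo
                "table": {"value": "@dataset().tableName", "type": "Expression"},
            },
        },
    }


source = excel_source_dataset()
sink = sql_sink_dataset()
print(json.dumps([source, sink], indent=2))
```

With these two datasets, the four files and four staging tables share one source and one sink definition, and only the parameter values change per copy.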

You can create a table in SQL to hold the file name/table name mapping. Then use a Lookup activity to read that data and a ForEach activity to repeat the Copy activity for each file, filling in the dataset parameters.

This requires your files to follow some pattern. If they are completely different from each other and from the tables this may not work. However, there are only 4 files, right? It's not that difficult to make 8 datasets.
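The Lookup and ForEach steps described above could be wired together roughly as follows. This Python sketch builds a dict shaped like ADF pipeline JSON; the mapping table `dbo.FileTableMap` with columns `FileName` and `TableName`, the dataset names `ExcelBlobFile` and `SqlTable`, and all activity names are hypothetical.

```python
import json

LOOKUP = "LookupFileTableMap"  # hypothetical activity name


def build_pipeline():
    """Lookup reads the mapping rows; ForEach runs one Copy per row."""
    lookup = {
        "name": LOOKUP,
        "type": "Lookup",
        "typeProperties": {
            "source": {
                "type": "AzureSqlSource",
                # Assumed mapping table: one row per file/table pair.
                "sqlReaderQuery": "SELECT FileName, TableName FROM dbo.FileTableMap",
            },
            "dataset": {
                "referenceName": "SqlTable",
                "type": "DatasetReference",
                "parameters": {"tableName": "FileTableMap"},
            },
            "firstRowOnly": False,  # return every row, not just the first
        },
    }
    copy = {
        "name": "CopyFileToStaging",
        "type": "Copy",
        "inputs": [{
            "referenceName": "ExcelBlobFile",
            "type": "DatasetReference",
            "parameters": {"fileName": "@item().FileName"},
        }],
        "outputs": [{
            "referenceName": "SqlTable",
            "type": "DatasetReference",
            "parameters": {"tableName": "@item().TableName"},
        }],
        "typeProperties": {
            "source": {"type": "ExcelSource"},
            "sink": {"type": "AzureSqlSink"},
        },
    }
    foreach = {
        "name": "ForEachFile",
        "type": "ForEach",
        "dependsOn": [{"activity": LOOKUP, "dependencyConditions": ["Succeeded"]}],
        "typeProperties": {
            # Iterate over the rows returned by the Lookup activity.
            "items": {
                "value": f"@activity('{LOOKUP}').output.value",
                "type": "Expression",
            },
            "activities": [copy],
        },
    }
    return {
        "name": "CopyExcelFilesToSql",
        "properties": {"activities": [lookup, foreach]},
    }


pipeline = build_pipeline()
print(json.dumps(pipeline, indent=2))
```

A Stored Procedure activity could follow the Copy inside the ForEach to move rows from staging to the master table, assuming the procedure name is also stored in the mapping table.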

Jan 10 2021 06:15 AM

@DennesTorres Thank you, I think this solution will work. I will try it and reply.

Mar 03 2021 11:46 AM

Hello,

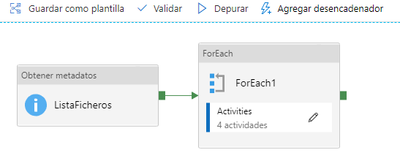

Besides parameterizing the dataset, you can load all the files with one pipeline: first read the metadata of the folder that contains the files, then pass the file names through a ForEach that has a "Copy data" activity inside.
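A minimal sketch of that folder-driven variant, again as a Python dict shaped like ADF pipeline JSON. It assumes a hypothetical folder dataset `BlobFolder`, the parameterized datasets `ExcelBlobFile` and `SqlTable`, and that each target table is named after its file without the `.xlsx` extension; none of these come from the thread.

```python
import json

GET_FILES = "GetFileList"  # hypothetical activity name


def build_metadata_pipeline():
    """Get Metadata lists the folder; ForEach copies each listed file."""
    get_metadata = {
        "name": GET_FILES,
        "type": "GetMetadata",
        "typeProperties": {
            "dataset": {"referenceName": "BlobFolder", "type": "DatasetReference"},
            # childItems returns the files and subfolders in the folder.
            "fieldList": ["childItems"],
        },
    }
    copy = {
        "name": "CopyData",
        "type": "Copy",
        "inputs": [{
            "referenceName": "ExcelBlobFile",
            "type": "DatasetReference",
            "parameters": {"fileName": "@item().name"},
        }],
        "outputs": [{
            "referenceName": "SqlTable",
            "type": "DatasetReference",
            # Assumption: table name equals file name minus the extension.
            "parameters": {"tableName": "@replace(item().name, '.xlsx', '')"},
        }],
        "typeProperties": {
            "source": {"type": "ExcelSource"},
            "sink": {"type": "AzureSqlSink"},
        },
    }
    foreach = {
        "name": "ForEachFile",
        "type": "ForEach",
        "dependsOn": [{"activity": GET_FILES, "dependencyConditions": ["Succeeded"]}],
        "typeProperties": {
            "items": {
                "value": f"@activity('{GET_FILES}').output.childItems",
                "type": "Expression",
            },
            "activities": [copy],
        },
    }
    return {
        "name": "CopyFolderToSql",
        "properties": {"activities": [get_metadata, foreach]},
    }


metadata_pipeline = build_metadata_pipeline()
print(json.dumps(metadata_pipeline, indent=2))
```

Compared with a mapping table in SQL, this needs no extra table but depends on a naming convention between files and tables.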

Regards!