Azure AI services Blog

Custom video search with Azure Video Analyzer for Media, Machine Learning and Cognitive Search

Authored by Alex Hocking and Mason Cusack (Applied Data Scientists) and Karol Zak and Shane Peckham (Software Engineers) of the ML Platform team in Microsoft's Commercial Software Engineering organization. @shanepeckham

Azure Video Analyzer for Media (AVAM) is an Azure service that extracts deep insights from video and audio files, such as transcripts, faces and people, brands, and image captions. Azure Cognitive Search (ACS) can then index this output, giving users a rich search experience for quickly finding items of interest within their videos.

This scenario works well, but what if customers want to search for items of interest that Azure Video Analyzer for Media does not extract? Or what if they want to search using custom terms that do not appear in the extracted insights? This solution enables exactly these scenarios by using a custom Azure Machine Learning (AML) model trained with the new Data Labelling AutoML Vision solution.

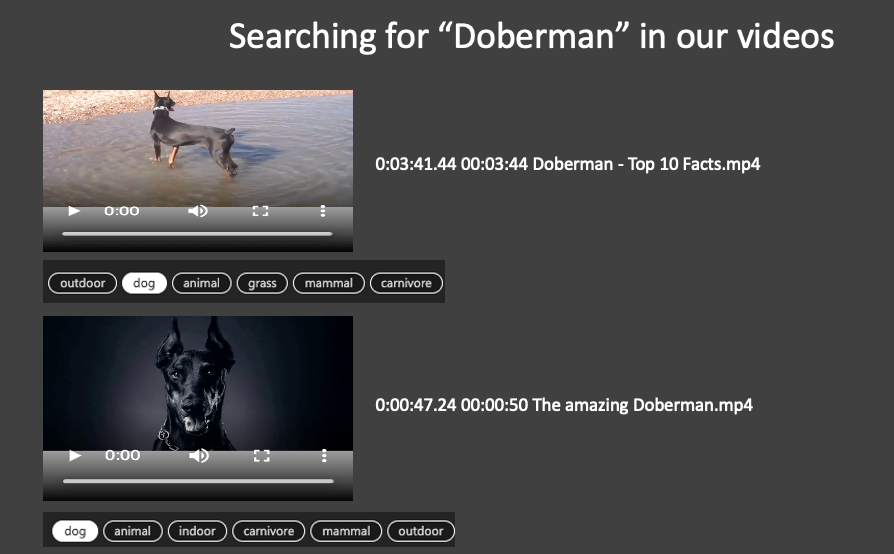

Figure 1 – Search results showing snippets of video scenes that match the query "Doberman"
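To make the idea behind Figure 1 more concrete, here is a minimal Python sketch of indexing insights per time interval, so that a matching term points back to a specific scene. The insight shape, `SceneDocument`, `build_documents` and `search` are all hypothetical: the real AVAM JSON is richer and structured differently, and ACS performs full-text search rather than the naive substring match used here.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical, simplified stand-in for AVAM insights: each insight name maps
# to the (start, end) time ranges, in seconds, where it appears.
Insights = Dict[str, List[Tuple[float, float]]]


@dataclass
class SceneDocument:
    """One searchable document per video time interval (hypothetical schema)."""
    video_id: str
    start: float
    end: float
    terms: List[str] = field(default_factory=list)


def build_documents(video_id: str, insights: Insights) -> List[SceneDocument]:
    """Group insight names by the interval they occur in, one document per interval."""
    by_interval: Dict[Tuple[float, float], SceneDocument] = {}
    for name, ranges in insights.items():
        for start, end in ranges:
            doc = by_interval.setdefault(
                (start, end), SceneDocument(video_id, start, end)
            )
            doc.terms.append(name)
    # Order documents by scene start time.
    return sorted(by_interval.values(), key=lambda d: d.start)


def search(docs: List[SceneDocument], query: str) -> List[SceneDocument]:
    """Naive case-insensitive substring match, standing in for an ACS query."""
    q = query.lower()
    return [d for d in docs if any(q in term.lower() for term in d.terms)]


if __name__ == "__main__":
    docs = build_documents(
        "video-001",
        {"Doberman": [(12.0, 15.5)], "outdoor": [(0.0, 15.5), (12.0, 15.5)]},
    )
    for hit in search(docs, "doberman"):
        print(hit.video_id, hit.start, hit.end, hit.terms)
```

Because each document carries its own start and end times, a search hit can be turned directly into a playable snippet of the matching scene.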

Solution Overview

The following describes the high-level process for this solution:

- Using the Data Labelling functionality of AML, label images of the items to be searched for. Once enough images have been labelled, the AutoML process trains models on the labelled data and selects the best one.

- The Orchestrator Logic App polls storage for new media files; when a new file is uploaded, it runs the Indexer Logic App.

- The Indexer Logic App invokes AVAM to extract the insights. Once that completes, it calls a Parser WebApp, which extracts the intervals and the KeyFrames identified within them and restructures the AVAM output. The Indexer Logic App then runs the Classifier Logic App.

- The Classifier Logic App takes the restructured AVAM output and downloads the KeyFrame thumbnails for the video being processed. Each KeyFrame thumbnail is sent to the Classifier WebApp, which runs the AML model to predict a label for the image. The predicted labels are then added to the AVAM output.

- The AVAM output is uploaded to blob storage, which serves as a data source for ACS. The ACS indexer then runs and populates the index, ready for querying.

Figure 2 – High-Level flow of the solution
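The classification step described above can be sketched in Python as follows, assuming a simplified interval format and a classifier that returns a label with a confidence score. Every name here (`enrich_intervals`, `to_blob_payload`, `customLabels`, `min_confidence`) is hypothetical, and the thumbnail download and the model call are injected as plain functions so the sketch runs offline; in the solution itself these are handled by the Classifier Logic App and the Classifier WebApp.

```python
import json
from typing import Any, Callable, Dict, List, Tuple

# Hypothetical shapes, for illustration only:
# - an interval is a dict with "start", "end" (seconds) and "keyframes"
#   (thumbnail IDs), roughly what a parser step might emit;
# - the classifier returns (label, confidence) for one image.
Interval = Dict[str, Any]
Classifier = Callable[[bytes], Tuple[str, float]]


def enrich_intervals(
    intervals: List[Interval],
    fetch_thumbnail: Callable[[str], bytes],
    classify: Classifier,
    min_confidence: float = 0.5,
) -> List[Interval]:
    """Classify every keyframe thumbnail and attach confident labels to its interval."""
    enriched = []
    for interval in intervals:
        labels = set()
        for keyframe_id in interval["keyframes"]:
            label, confidence = classify(fetch_thumbnail(keyframe_id))
            if confidence >= min_confidence:  # drop low-confidence predictions
                labels.add(label)
        # Copy rather than mutate the input; "customLabels" is a hypothetical field.
        enriched.append({**interval, "customLabels": sorted(labels)})
    return enriched


def to_blob_payload(video_id: str, intervals: List[Interval]) -> str:
    """Serialize the enriched output as JSON, e.g. for upload to blob storage."""
    return json.dumps({"videoId": video_id, "intervals": intervals}, indent=2)


if __name__ == "__main__":
    thumbnails = {"kf1": b"doberman", "kf2": b"sofa", "kf3": b"grass"}

    def fake_classify(image: bytes) -> Tuple[str, float]:
        # Stand-in for the call to the hosted model; not a real endpoint.
        return ("Doberman", 0.92) if b"doberman" in image else ("other", 0.30)

    intervals = [
        {"start": 0.0, "end": 4.0, "keyframes": ["kf1", "kf2"]},
        {"start": 4.0, "end": 9.0, "keyframes": ["kf3"]},
    ]
    enriched = enrich_intervals(intervals, thumbnails.__getitem__, fake_classify)
    print(to_blob_payload("video-001", enriched))
```

Injecting `fetch_thumbnail` and `classify` keeps the enrichment logic independent of where thumbnails are stored and how the model is hosted, while the confidence threshold avoids tagging scenes with weak predictions.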

Next steps

The full code and a step-by-step deployment guide for building a custom video search experience with Azure Video Indexer, Azure Machine Learning and Azure Cognitive Search can be found here. Need to search your videos for custom objects using your own terminology? Now you have a ready-made solution for that, so go ahead and give it a try!

Have questions or feedback? We would love to hear from you! Use our UserVoice page to help us prioritize features, or use Azure Video Analyzer for Media's Stack Overflow page for any questions you have about Azure Video Analyzer for Media.
