Tuning BeeGFS and BeeOND on Azure for specific I/O patterns

BeeGFS and BeeGFS On Demand (BeeOND) are popular parallel file systems used to handle the I/O requirements of many high performance computing (HPC) workloads. Both run well on Azure, and both can harness multiple solid state drives (SSDs) to provide high aggregate I/O performance. However, the default configuration settings are not optimal for all I/O patterns. This post provides tuning suggestions to improve BeeGFS and BeeOND performance for a number of specific I/O patterns.

The following tuning options are explored and examples of how they are tuned for different I/O patterns are discussed:

- Number of managed disks or local NVMe SSDs per VM.

- BeeGFS chunk size and number of storage targets.

- Number of metadata servers.

Deploying BeeGFS and BeeOND

The azurehpc GitHub repository contains scripts to automatically deploy BeeGFS and BeeOND parallel file systems on Azure. (See the azurehpc/examples directory.)

git clone git@github.com:Azure/azurehpc.git

How and where you deploy BeeGFS relative to the compute nodes affects latency, so make sure they are in close proximity to one another. Follow these pointers:

- If you are using a Virtual Machine Scale Set to deploy BeeGFS or a compute cluster, make sure that singleplacementgroup is set to true.

- If you are using managed disks for BeeGFS, set the VM tag to Performance Optimization, which ensures that the managed disks are close to the VMs.

- Include the BeeGFS cluster and the compute nodes in the same proximity placement group. This guarantees that all resources in the group are in the same datacenter.
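The placement pointers above could be scripted with the Azure CLI roughly as follows. This is a sketch under stated assumptions, not the azurehpc deployment itself: the resource group, region, image, VM sizes, scale set names, and instance counts are placeholders, and the `run` helper only prints each command unless `DRY_RUN=0` is set. It does not cover the managed-disk tag.

```shell
# Placeholders (assumptions, not values from this post): adjust before use.
RG="my-hpc-rg"
LOCATION="westeurope"
PPG="beegfs-ppg"
IMAGE="your-image-urn"

# Print the command by default; set DRY_RUN=0 to execute it for real.
run() {
  if [ "${DRY_RUN:-1}" = "1" ]; then
    echo "$*"
  else
    "$@"
  fi
}

# One proximity placement group shared by the storage and compute VMs.
create_ppg() {
  run az ppg create --resource-group "$RG" --name "$PPG" --location "$LOCATION"
}

# A scale set in that group. $1 = name, $2 = VM size, $3 = instance count.
create_vmss() {
  run az vmss create --resource-group "$RG" --name "$1" \
    --image "$IMAGE" --vm-sku "$2" --instance-count "$3" \
    --ppg "$PPG" --single-placement-group true
}

create_ppg
create_vmss beegfs-storage Standard_L8s_v2 4
create_vmss compute Standard_E32s_v3 8
```

Creating both scale sets against the same group is what keeps the BeeGFS servers and the compute nodes together; review the printed commands before running them with `DRY_RUN=0`.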

Disk layout considerations

When deploying a BeeGFS or BeeOND parallel file system on Azure using managed disks for storage, the number and type of disks need to be carefully considered. Each VM's disk throughput and IOPS limits dictate the maximum amount of I/O that the VM can support in the parallel file system. Azure managed disks have built-in redundancy. At a minimum, locally redundant storage (LRS) keeps three copies of data, so RAID 0 striping, which adds no redundancy of its own, is sufficient.

In general, for large throughput, striping a larger number of slower disks is preferable to striping fewer faster disks. As Figure 1 shows, eight P20 disks give better performance than four P30 disks, while eight P30 disks do not improve performance further because of the disk throughput throttling limit of the VM (E32s_v3).

Fig. 1. Striping a larger number of slower disks (e.g. 8xP20) gives better throughput than striping fewer faster disks (e.g. 3xP30). Striping more P30 disks (e.g. 8xP30) does not improve the performance because of disk throughput throttling limits.
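The reasoning behind Figure 1 can be approximated with simple arithmetic: the ceiling for a RAID 0 stripe is the sum of the per-disk limits, capped by the VM's own disk throughput limit. The sketch below is an estimate of that ceiling only, not a reproduction of the measured results. The per-disk figures (150 MB/s for P20, 200 MB/s for P30) and the 768 MB/s VM cap are assumed nominal values that should be checked against current Azure documentation.

```shell
# Ceiling for a RAID 0 stripe, in MB/s.
# $1 = number of disks, $2 = per-disk limit, $3 = VM disk throughput limit
striped_throughput() {
  total=$(( $1 * $2 ))
  if [ "$total" -gt "$3" ]; then
    total=$3          # the VM throttles before the disks do
  fi
  echo "$total"
}

# Assumed nominal limits (not taken from this post).
P20=150
P30=200
VM_LIMIT=768

echo "8 x P20: $(striped_throughput 8 "$P20" "$VM_LIMIT") MB/s"
echo "3 x P30: $(striped_throughput 3 "$P30" "$VM_LIMIT") MB/s"
echo "8 x P30: $(striped_throughput 8 "$P30" "$VM_LIMIT") MB/s"
```

Under these assumptions, the eight-disk layouts both hit the VM cap, while three P30 disks stay below it, which matches the shape of the result in the figure.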

In the case of local NVMe SSDs (such as Lsv2), BeeGFS storage targets that have a single NVMe disk give the best aggregate throughput and IOPS. For very large BeeGFS parallel file systems, striping more NVMe disks per storage target can simplify the management and deployment.

Note that the performance of a BeeGFS parallel file system that uses ephemeral NVMe disks (such as Lsv2) is limited by the VM's network throttling limits. In other words, I/O performance is network-bound.

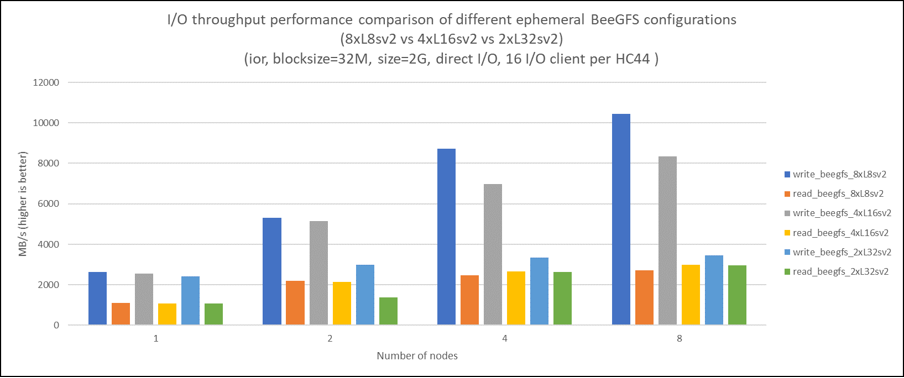

Fig. 2. Performance of reads and writes varies by number of NVMe disks per VM. The best aggregate throughput for BeeGFS was achieved using L8sv2 VMs.

Fig. 3. A single NVMe disk per VM may give better aggregate IOPS performance.

Chunk size and number of targets

BeeGFS and BeeOND parallel file systems consist of several storage targets. Typically, each VM is a storage target. It can use managed disks or local NVMe SSDs, and it can be striped with RAID 0. The chunk size is the amount of contiguous file data written to one target before moving on to the next, so that the targets are accessed in parallel. The default chunk size is 512 K, and the default number of storage targets is four, as the output of the following command shows:

beegfs-ctl --getentryinfo /beegfs

EntryID: root

Metadata buddy group: 1

Current primary metadata node: cgbeegfsserver-4 [ID: 1]

Stripe pattern details:

+ Type: RAID0

+ Chunksize: 512K

+ Number of storage targets: desired: 4

+ Storage Pool: 1 (Default)

Fortunately, you can change the chunk size and number of storage targets for a directory. This means that you can tune each directory for a different I/O pattern by adjusting the chunk size and number of targets.

For example, to set the chunk size to 1 MB and number of storage targets to 8:

beegfs-ctl --setpattern --chunksize=1m --numtargets=8 /beegfs/chunksize_1m_4t

A rough formula for setting the optimal chunk size is:

Chunksize = I/O Blocksize / Number of targets
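As a worked example of this formula, the sketch below divides the application's I/O block size by the number of targets and prints a matching `beegfs-ctl --setpattern` command. Two details go beyond the formula and are assumptions here: the result is clamped to a 64 KiB minimum, and it is rounded down to a power of two on the assumption that BeeGFS expects power-of-two chunk sizes. The directory `/beegfs/mydir` is a hypothetical path.

```shell
# Largest power of two that does not exceed $1 (for $1 >= 1).
floor_pow2() {
  p=1
  while [ $(( p * 2 )) -le "$1" ]; do
    p=$(( p * 2 ))
  done
  echo "$p"
}

# Suggested chunk size in KiB.
# $1 = application I/O block size in KiB, $2 = number of storage targets
suggest_chunk_kib() {
  chunk=$(( $1 / $2 ))
  if [ "$chunk" -lt 64 ]; then
    chunk=64                    # assumed minimum chunk size
  fi
  floor_pow2 "$chunk"           # assumed power-of-two requirement
}

# Example: 8 MiB application writes striped over 8 targets.
targets=8
chunk_kib=$(suggest_chunk_kib 8192 "$targets")
echo "beegfs-ctl --setpattern --chunksize=${chunk_kib}k --numtargets=${targets} /beegfs/mydir"
```

With an 8 MiB block size and 8 targets, each I/O request touches every target once with a 1 MiB chunk. Treat the result as a starting point and confirm it with a benchmark that matches your workload.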

Number of metadata servers

If you set up BeeGFS or BeeOND parallel file systems that do a lot of metadata operations (such as creating files, deleting files, stat operations on files, and checking file attributes and permissions), you must choose the right number and type of metadata servers to get the best performance. By default, BeeOND configures only one metadata server no matter how many nodes are in your BeeOND parallel file system. Fortunately, you can change the default number of metadata servers used in BeeOND.

To increase the number of metadata servers in a BeeOND parallel file system to 4:

beeond start -P -m 4 -n hostfile -d /mnt/resource/BeeOND -c /mnt/BeeOND
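If the number of metadata servers is chosen by a job script, it may be worth guarding against asking for more than the hostfile can supply. The helper below is a hypothetical sketch: it assumes one host per line in the hostfile and at most one metadata server per node, and it only prints the `beeond start` command (with the same options and paths as above) rather than running it.

```shell
# Print a `beeond start` command with the requested metadata server count,
# capped at the number of hosts in the hostfile.
# $1 = hostfile (one host per line), $2 = requested metadata servers
beeond_start_cmd() {
  nodes=$(grep -c . "$1")       # count non-empty lines
  meta=$2
  if [ "$meta" -gt "$nodes" ]; then
    meta=$nodes                 # assumption: one metadata server per node
  fi
  echo "beeond start -P -m $meta -n $1 -d /mnt/resource/BeeOND -c /mnt/BeeOND"
}

# Example with a throwaway three-node hostfile.
hostfile=$(mktemp)
printf 'node1\nnode2\nnode3\n' > "$hostfile"
beeond_start_cmd "$hostfile" 4
rm -f "$hostfile"
```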

Large Shared Parallel I/O

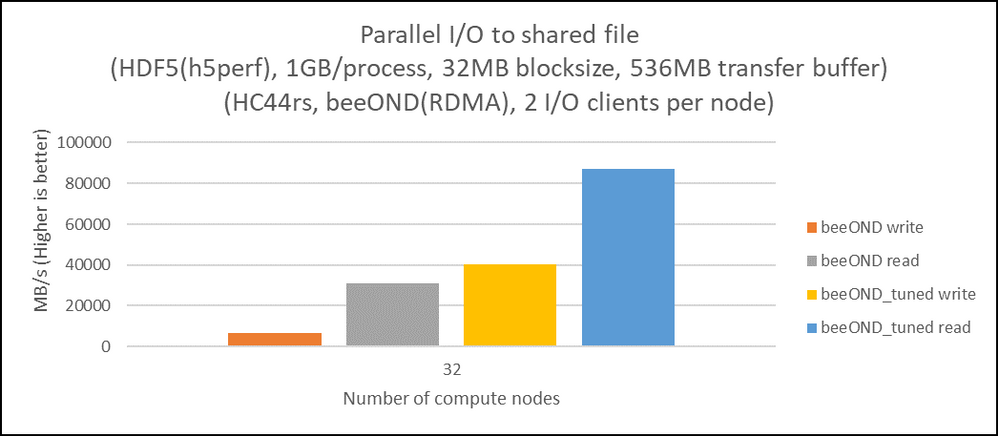

The shared parallel I/O format is common in HPC: all parallel processes write to and read from the same file (N-1 I/O). Examples of this format are MPI-IO, HDF5, and NetCDF. The default settings in BeeGFS and BeeOND are not optimal for this I/O pattern, and you can improve performance significantly by increasing the number of storage targets.

Be aware that the number of targets used for shared parallel I/O also limits the size of the file: a file is striped only across the targets assigned to it, so it cannot grow beyond the space available on those targets.

Fig. 4. By tuning BeeOND (increasing the number of storage targets), we get significant improvements in I/O performance for HDF5 (shared parallel I/O format).

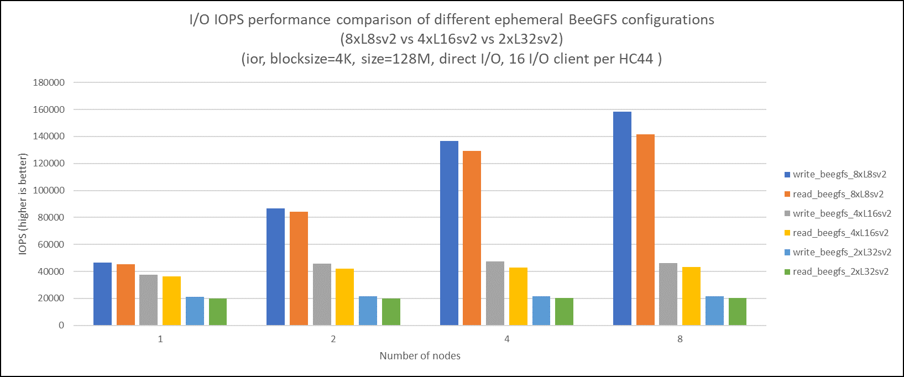
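For a shared-file workload, the two points from this section (stripe over more targets, and keep the resulting size limit in mind) can be sketched as follows. The directory, target count, chunk size, and free-space figure are hypothetical values chosen for illustration, and the size bound is a rough estimate that assumes data is spread evenly over the targets.

```shell
# Print the command that stripes new files in a directory over more targets.
# $1 = directory, $2 = number of targets, $3 = chunk size (for example 1m)
stripe_wide_cmd() {
  echo "beegfs-ctl --setpattern --chunksize=$3 --numtargets=$2 $1"
}

# Rough upper bound on the size of one striped file, in GiB.
# $1 = number of targets, $2 = free space on the fullest target (GiB)
max_file_size_gib() {
  echo $(( $1 * $2 ))
}

# Example: stripe a shared-output directory over 16 targets.
stripe_wide_cmd /beegfs/shared_io 16 1m
echo "approximate maximum file size: $(max_file_size_gib 16 1800) GiB"
```

Only files created after the pattern is set pick it up, so apply it to the output directory before the job writes its shared file.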

Small I/O (high IOPS)

BeeGFS and BeeOND are primarily designed for large throughput I/O, but there are times when the I/O pattern of interest is high IOPS. The default configuration is not ideal for this I/O pattern, but some tuning can improve performance. To improve IOPS, it is usually best to reduce the chunk size to the minimum of 64 K (assuming the I/O block size is less than 64 K) and reduce the number of targets to one.

Fig. 5. BeeGFS and BeeOND IOPS performance is improved by reducing the chunk size to 64 K and setting the number of storage targets to one.

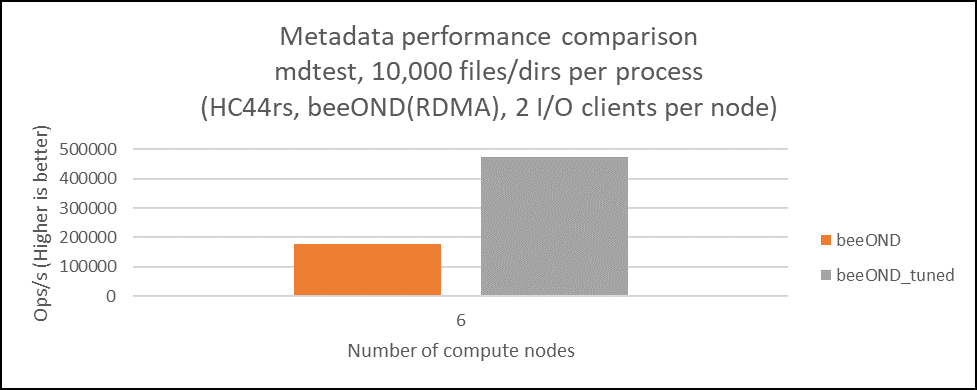
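The small-I/O recommendation above can be expressed as a per-directory setting. The helper below is an illustrative sketch: it prints the `beegfs-ctl --setpattern` command only when the application's block size is below 64 KiB, and the directory `/beegfs/small_io` is a hypothetical path.

```shell
# Print a stripe pattern command suited to small-block, high-IOPS I/O.
# $1 = application I/O block size in KiB, $2 = directory
small_io_pattern_cmd() {
  if [ "$1" -lt 64 ]; then
    # Minimum chunk size and a single target, as recommended for high IOPS.
    echo "beegfs-ctl --setpattern --chunksize=64k --numtargets=1 $2"
  else
    echo "# block size $1 KiB is not below 64 KiB; leaving $2 unchanged"
  fi
}

# Example: an application issuing 4 KiB reads and writes.
small_io_pattern_cmd 4 /beegfs/small_io
```

Because the pattern is set per directory, a small-I/O directory tuned this way can sit alongside directories that keep wider striping for large sequential files.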

Metadata performance

The number and type of metadata servers affect the performance of metadata-sensitive I/O. For example, BeeOND has only one metadata server by default, so adding more metadata servers can significantly improve performance.

Fig. 6. BeeOND metadata performance can be significantly improved by increasing the number of metadata servers.

Summary

With HPC on Azure, don’t just accept the configuration defaults in BeeGFS or BeeOND. Performance tuning makes a big difference in the I/O throughput. For more information about tuning, see Tips and Recommendations in the BeeGFS documentation.
